AI is no longer a side project for product teams. In our study of 2,747 people across approximately 1,100 companies, most already use AI every day and say it helps them work faster and deliver better results. Many report saving several hours each week. At the same time, a third of respondents still have no structured training, which slows progress and creates uneven results.

This article explains the adoption levels used in the report and how to move from one level to the next. If you just finished the quiz, you will find a concise checklist for your result under each level. Everything here is written for busy leaders who want clear language and useful actions.

What an adoption level is, and why it matters

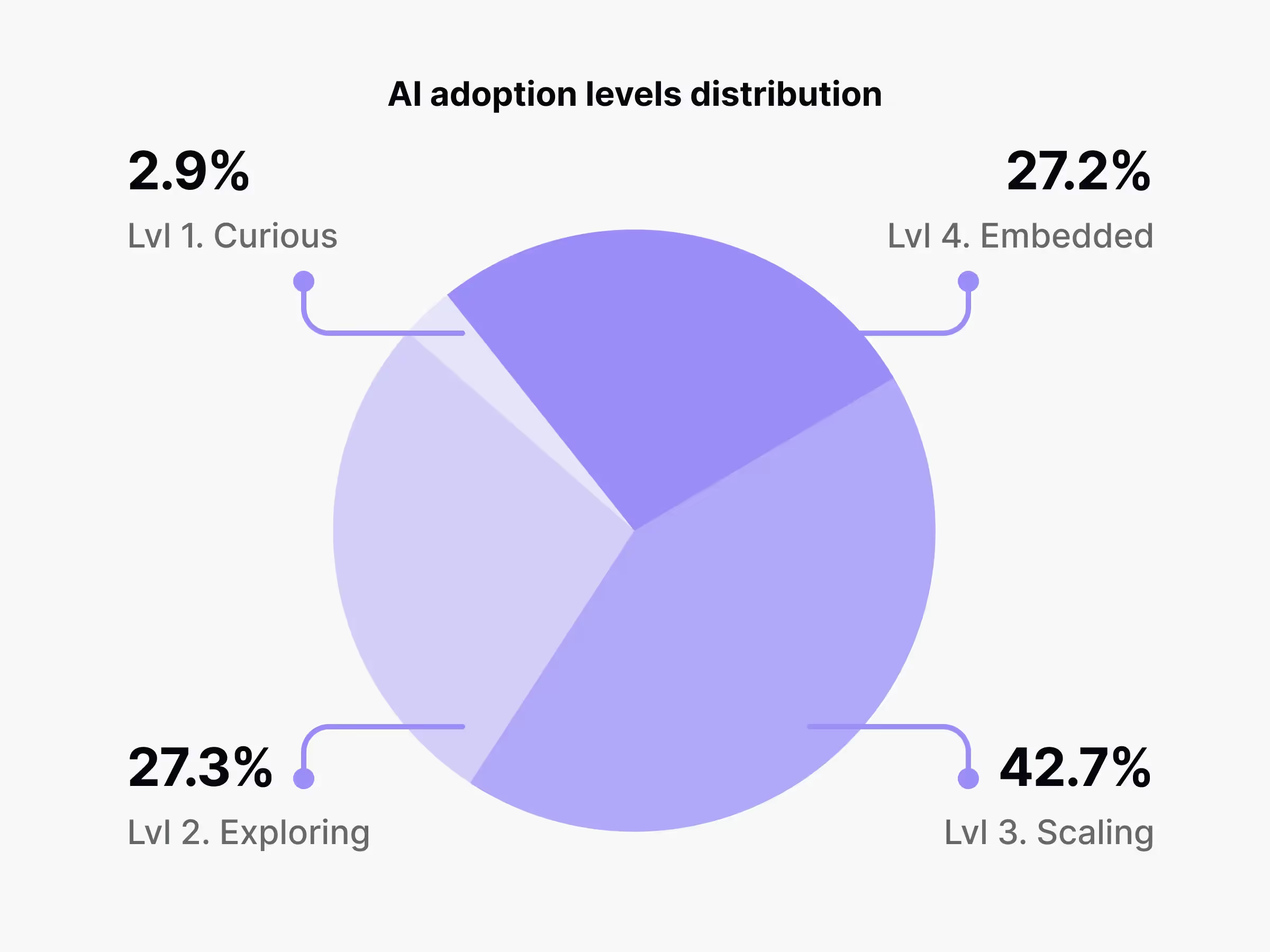

An adoption level describes how deeply AI is part of daily work. Think of it as a simple ladder with four rungs: Curious, Exploring, Scaling, and Embedded. Knowing your place on that ladder helps you set expectations, choose the next step, and compare your situation with peers.

Behind the scenes, we evaluate adoption using five signals: your organization’s stance on AI, the breadth of approved tools, how often teams use them, whether training exists, and whether a named leader sponsors the effort. The result is a descriptive index: it shows where you are today and what typically improves when teams climb to the next rung.

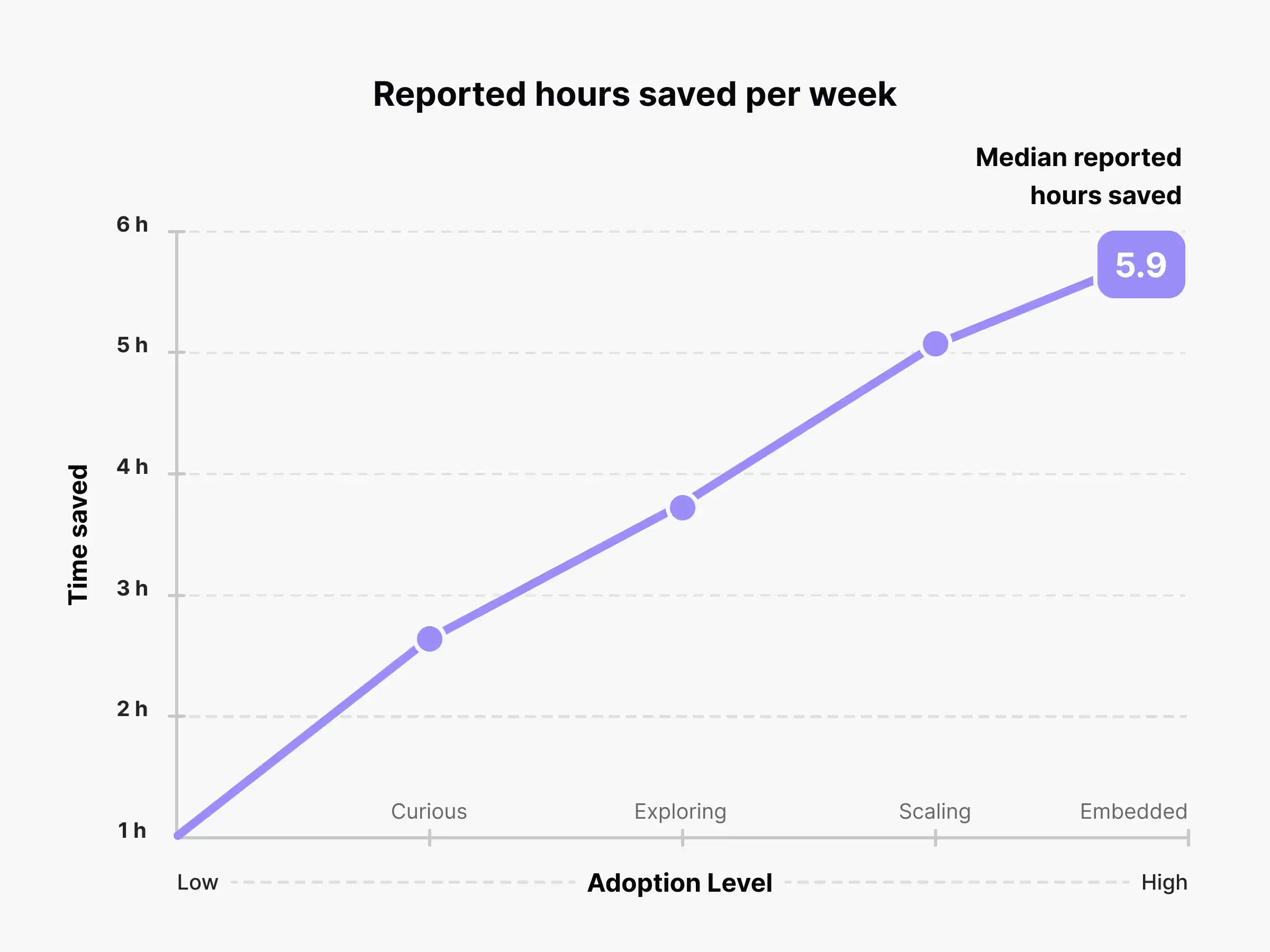

Across the dataset, the pattern is consistent. As teams move up the ladder, reported productivity rises, and weekly hours saved increase. The biggest jump usually happens when a team moves from scattered pilots to live use with light governance and basic training.

Level 1: Curious

AI is not on the roadmap yet. A few people test tools on their own, but there is no shared guidance, budget, or security review. Wins are isolated and hard to repeat. People are unsure what is allowed and worry about risks. Managers are interested, but nothing formal exists.

How to move forward

Start small and make it safe. Choose a single low-risk, high-volume task such as idea drafts, meeting notes, or research summaries. Give a small pilot team access to one approved chat tool and set a short time box. Publish a one-page guardrail that covers sensitive data, good use cases, and where to ask questions. Nominate two champions who collect examples and tips in a shared space. After two weeks, show before-and-after samples and a simple estimate of hours saved.
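If you want a consistent way to produce that estimate, the arithmetic can be sketched in a few lines. This is a minimal illustration, not a method from the report: the function name, its parameters, and the example numbers are all hypothetical, and it assumes you have timed the task before and after the pilot.

```python
def weekly_hours_saved(minutes_before: float, minutes_after: float,
                       tasks_per_week: int, people: int) -> float:
    """Rough estimate of hours saved per week across a pilot team.

    All inputs are self-reported or timed by the team; treat the result
    as an order-of-magnitude figure, not a precise measurement.
    """
    # Never report negative savings if the AI-assisted task took longer.
    saved_per_task = max(minutes_before - minutes_after, 0)
    return saved_per_task * tasks_per_week * people / 60


# Hypothetical pilot: meeting notes drop from 30 to 18 minutes,
# 10 meetings per person per week, 5 people in the pilot.
estimate = weekly_hours_saved(30, 18, tasks_per_week=10, people=5)
print(f"Estimated hours saved per week: {estimate}")
```

Pair the number with the before-and-after samples so leaders can judge quality as well as speed.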

Level 1 cheat sheet

What success looks like

People know what they can and cannot do. A short pilot shows time saved on a real task. That gives leaders enough evidence to fund the next step.

Level 2: Exploring

Pilots are running in pockets of the company. Volunteers share prompts and findings in docs and chats. Some teams see strong gains while others do not. Tool choices multiply, and confusion grows about what to use. Training is ad hoc.

How to move forward

Turn the best pilot into a repeatable workflow. Pick one use case with clear value, then publish a short playbook with a checklist and a template inside the tool people already use. Approve a short tool list so every team has one default chat assistant and, where relevant, one research or analysis tool. Appoint a product or design owner who can say, “This is how we do it here.” Start a monthly lunch-and-learn, record it, and fund a short course for the core team.

Level 2 cheat sheet

What success looks like

A live workflow exists with a named owner, a basic tool budget, and starter training. Most of the pilot team uses the flow each week and can point to specific time savings or quality gains.

Level 3: Scaling

AI is now part of live work for one or more teams. Licences are issued, connectors are set up, and light governance exists. People receive basic training. The conversation shifts from “can we use this?” to “how do we make it consistent and fast?”

How to move forward

Standardise and spread. Create an internal AI Guild with a short charter that covers patterns, reviews, and shared assets. Move playbooks into templates and snippets inside your design, research, and writing tools so people do not need to hunt for guidance. Expand training beyond early adopters and add a small assessment to check understanding. Add simple review checkpoints inside the existing workflow so quality and safety are part of the routine. Tie one outcome to a team OKR, for example, faster research synthesis or quicker copy iteration.

Level 3 cheat sheet

What success looks like

Several teams use the same patterns, cycle times improve, and new hires learn the basics during onboarding. Leaders can now explain where AI helps and where it does not.

Level 4: Embedded

AI is a routine step in multiple processes. Standards and data rules are clear and used. Results are tracked. Hiring, onboarding, and reviews include AI skills. Teams keep improving the practice and share stories of what works.

How to move forward

Focus on quality, deeper integration, and business outcomes. Run quarterly audits of AI-assisted work with a small review panel, which will help you spot drift and keep standards high. Extend training to managers so they know when to trust outputs and when to escalate. Add a simple path for proposing new use cases that captures benefits and risks in a single page. Integrate AI steps directly into the tools where people already work. Share case studies internally and, where appropriate, externally to attract talent and partners. Track outcomes such as faster release cycles, wider research coverage, improved conversion, or fewer reworks.

Level 4 cheat sheet

What success looks like

Teams plan and ship with AI in mind. The company can point to measurable outcomes, not only time savings. New people learn the basics quickly. Quality stays high as usage grows.

How adoption connects to outcomes

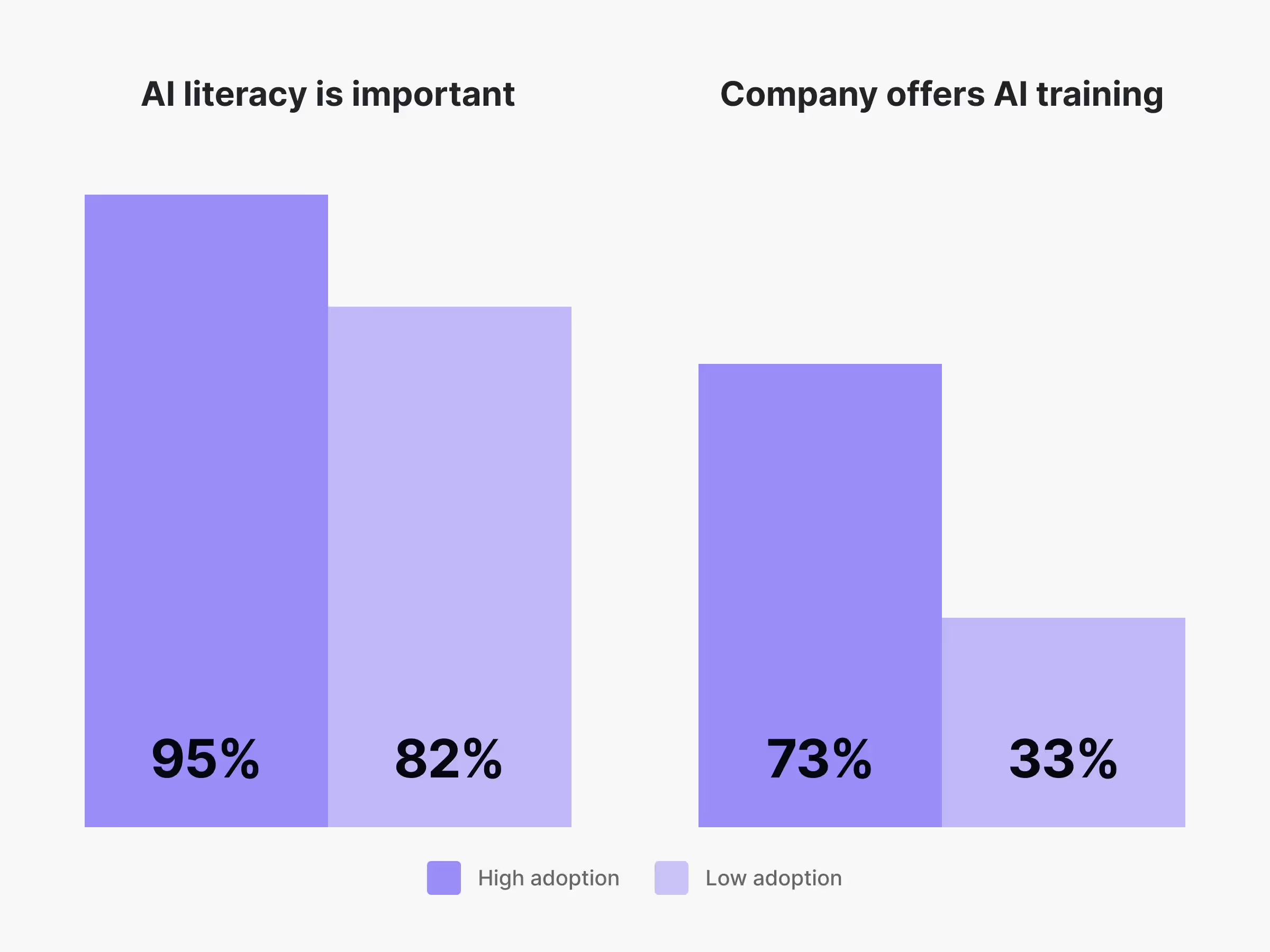

The link between adoption and results is steady. Productivity scores climb at each step of the ladder, and the median answer shifts from “slightly more productive” to “much more productive” once teams reach Scaling.

Weekly time saved follows a similar line. Curious and Exploring teams usually report a few hours back. Scaling and Embedded teams often report about twice that.

Support grows with adoption, too. Almost everyone believes AI literacy matters, yet training access lags in earlier stages. The largest change in support happens when teams move from Exploring to Scaling, which is also where many teams stall. Small, formal training and clear guidance often make the difference here.

Method note

The figures in this article come from the same survey that powers the report. We asked about role, region, industry, company size, usage, training, leadership support, and outcomes such as productivity and time saved. The adoption index combines the five signals described earlier (organizational stance, breadth of approved tools, frequency of use, training, and leadership sponsorship) into one score. We use straightforward statistics and clear breakdowns by company size, industry, and region.
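For readers who want a feel for how an index like this can work, here is a minimal sketch. It is not the report's actual formula: the equal weighting, the 0 to 1 scale for each signal, and the level cut-offs are assumptions made purely for illustration, and the names `Signals`, `adoption_score`, and `adoption_level` are hypothetical.

```python
from dataclasses import dataclass

# Hypothetical cut-offs on a 0-100 score; the report does not publish its thresholds.
LEVELS = [(75, "Embedded"), (50, "Scaling"), (25, "Exploring"), (0, "Curious")]


@dataclass
class Signals:
    """The five signals, each normalised to 0.0-1.0 (an assumption of this sketch)."""
    stance: float           # organisation's stance on AI
    tool_breadth: float     # breadth of approved tools
    usage_frequency: float  # how often teams use the tools
    training: float         # whether training exists
    sponsorship: float      # whether a named leader sponsors the effort


def adoption_score(s: Signals) -> float:
    """Equal-weight average of the five signals, scaled to 0-100."""
    values = [s.stance, s.tool_breadth, s.usage_frequency, s.training, s.sponsorship]
    if any(not 0.0 <= v <= 1.0 for v in values):
        raise ValueError("each signal must be between 0 and 1")
    return 100 * sum(values) / len(values)


def adoption_level(score: float) -> str:
    """Map a 0-100 score to one of the four rungs using the assumed cut-offs."""
    for floor, name in LEVELS:
        if score >= floor:
            return name
    return "Curious"


# Example: a team with pilots under way but no training or named sponsor yet.
team = Signals(stance=0.5, tool_breadth=0.5, usage_frequency=0.5,
               training=0.0, sponsorship=0.0)
print(adoption_level(adoption_score(team)))
```

An equal-weight average keeps the sketch easy to read; a real index might weight signals differently or use categorical answers rather than a continuous scale.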

Where to go next

Here's how you can take it further:

- Measure your level with the quiz and get a short action plan that matches your result.

- Download the full report to see how teams like yours compare and to explore the charts in detail.

- Connect with us to see how Uxcel helps your product team level up AI and cross-functional skills fast.