Most motion designers hit the same wall at some point. You spend hours perfecting a hover animation in After Effects, export it, hand it to your developer, and then watch them rebuild the interaction logic from scratch in code. The animation looks right. But it doesn't respond to anything. It just plays.

That disconnect between what you design and what actually ships has been one of the most persistent friction points in UI animation. And it's gotten worse, not better, as product teams expect motion to do more than decorate. In 2026, motion is the interface. Users expect buttons that compress on press, cards that slide with inertia, and loading states that morph into success confirmations. Static screens feel broken by comparison.

Lottie Creator, a web-based animation tool built by LottieFiles, is tackling this gap with 3 features that shift how motion gets made. Not just how it looks, but how it behaves, scales, and ships. I spent time exploring the Physics Simulator, State Machines (including the AI-powered Prompt to State Machines), and Motion Tokens to understand what they actually change about the day-to-day workflow for motion designers working on real products.

Here's what stood out, and why LottieFiles built a short certification course that walks you through all of it.

Why does motion still feel like an afterthought in most products?

Before getting into the features, it helps to understand the gap they’re designed to close.

Design systems have matured significantly over the past few years. Teams document colors, typography, spacing, and component libraries with obsessive detail. Figma variables let you swap between themes in seconds. But in most organizations, motion is still the wild west. Every animator does their own thing. Every screen feels slightly different. There’s no shared vocabulary for how elements should move, bounce, or respond.

The result is an inconsistency that users feel, even when they can’t name it. A dropdown that eases in with one curve on the settings page and a completely different curve on the dashboard. A toggle that springs on iOS but snaps rigidly on web. These are small differences on paper. But they erode the sense that a product was built by people who actually talk to each other.

Cognitive science backs this up. Human brains are prediction machines, constantly forecasting where objects should go, how fast they should move, and how they should respond to force. When a digital interface respects those predictions, the experience feels effortless. When it violates them, something feels off, and you might not be able to articulate why.

The reason this inconsistency feels wrong comes down to how we experience the physical world. Every object you've ever interacted with follows predictable rules. Heavy things take effort to move. Fast things slow down gradually, not instantly. Surfaces push back when you press them. Your brain has spent your entire life learning these patterns, and it applies them to screens even though screens aren't physical objects.

When a notification slides in and stops dead without any deceleration, it feels jarring. Not because you consciously think about physics, but because nothing in your lived experience stops that abruptly. When a sidebar opens at a perfectly constant speed from start to finish, it feels mechanical. Real doors accelerate as they swing open and slow before they hit the wall. When you tap a card and it just appears in a new position without any transition, your brain loses spatial context. Where did the old card go? Where did this one come from?

These aren't design preferences. They're perceptual expectations baked into how human cognition works. And they're exactly what the Physics Simulator in Lottie Creator is built to address: giving your motion the weight, friction, and responsiveness that users unconsciously expect from every interaction.

How does the Physics Simulator change the way you animate?

If you’ve ever spent 45 minutes tweaking easing curves to make a falling object look natural, the Physics Simulator will feel like relief.

The feature lets you add gravity, friction, bounce, and spring behavior directly to animation layers inside Lottie Creator. No manual keyframing for every frame of a collision. No guessing at deceleration curves. You adjust properties in real time, preview the result, refine the generated keyframes where needed, and iterate fast.

The controls worth knowing

The Physics Simulator works at two levels:

- Global properties control the environment: edge boundaries that define where objects can exist, gravity strength, simulation duration, keyframe reduction settings, and a wireframe mode for visual debugging. Think of these as the "world rules" for your animation scene.

- Per-element body properties control how individual layers behave within that world: mass, initial force, friction, air friction, and bounciness. A heavy element with low bounciness will thud to a stop. A light element with high bounciness will ping around like a rubber ball. You can also set a "destroy on collision" behavior that removes objects on contact, useful for particle-style effects or playful interactions where elements scatter and disappear.

The combination of global and per-element controls gives you a surprising amount of precision without touching a single keyframe. You're describing behavior, not drawing motion curves frame by frame.
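To make "describing behavior" concrete, here is a minimal Python sketch of how a few physical parameters can generate a bounce on their own. It is not Lottie Creator's simulator: the function name `simulate_drop`, the units, and the simple per-frame integration are all assumptions made for illustration.

```python
def simulate_drop(height, gravity=980.0, bounciness=0.6, air_friction=0.01,
                  fps=60, duration=2.0):
    """Return the per-frame height of a body dropped onto a floor at y = 0.

    Hypothetical illustration only: the parameter names echo the controls
    described above, but the units and integration scheme are assumptions.
    """
    dt = 1.0 / fps
    y, velocity = float(height), 0.0
    frames = []
    for _ in range(int(duration * fps)):
        velocity -= gravity * dt              # gravity pulls the body down
        velocity *= (1.0 - air_friction)      # air friction bleeds off speed
        y += velocity * dt
        if y <= 0.0:                          # the body reached the floor
            y = 0.0
            velocity = -velocity * bounciness  # keep only part of the speed
        frames.append(y)
    return frames


# Same drop, two bounciness values: one thuds, the other rebounds high.
low = simulate_drop(300, bounciness=0.2)
high = simulate_drop(300, bounciness=0.8)
```

Changing a single number (`bounciness`) reshapes every frame after the first impact, which is the point of the approach: you tune the rules and the motion follows.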

Where this fits in real product work

The use case that clicks fastest for most product designers: onboarding animations. Think of a welcome screen where elements drop into place with natural weight, or a success animation where confetti scatters with realistic physics. Without the simulator, you’d keyframe each piece individually, tweaking curves for hours. With it, you set the physics rules and let the simulation generate the motion.

A smart workaround from the LottieFiles team: when animating text or image layers, use a simple shape as a proxy. Apply the Physics Simulator to the proxy, then parent your text or image layer to it. The physics drives the proxy, and your visual follows. You get predictable collisions and cleaner motion without fighting the simulator’s behavior on complex layers. I found this particularly useful for text that needs to “land” on screen with a bounce, something that’s surprisingly fiddly to keyframe manually but trivial with the proxy approach.

The broader point here is more important than any single feature. Physics-based motion looks better because it is better. Nothing in the physical world moves at constant speed. Apples accelerate as they fall. Balls decelerate as they bounce. Users intuitively expect digital objects to follow these same rules. When they do, the interface feels grounded and polished. When they don’t, something feels cheap, even if the user can’t explain why.

If you want to experiment, LottieFiles has several remix examples you can fork and modify directly: an astronaut animation, a parachute drop, a bird animation, and a filter effect. Remixing is a fast way to understand how the physics controls interact before building your own animations from scratch.

What are State Machines, and why do they matter for UI components?

Physics-based motion handles the “how things move” problem. State Machines handle the “how things respond” problem. And the response layer is what separates a polished animation from a truly interactive experience.

Here’s the core concept: your animation can exist in one of several defined states (idle, hovered, pressed, active, loading, complete), and transitions move it between those states when specific triggers occur. A click. A hover. A data update. The animation doesn’t just play. It reacts.

Think of a button. It can be in a “play” state or a “pause” state. When you tap it, it transitions between the two, changing both its icon and behavior. Now imagine adding more states like buffering, loading, or disabled. Each user action or system event triggers a shift between these states. That’s a state machine in action.

Lottie Creator also enables teams to collaborate in real-time on animation design, allowing multiple users to work together, share feedback instantly, and accelerate the creative process. You can easily share your animations with stakeholders or clients via a web link, making it simple to gather feedback and maintain alignment throughout the project.

How the workflow actually plays out

The workflow makes more sense when you see it as two phases rather than a step-by-step checklist.

- Phase one is preparation: Setting up what the state machine needs to work with. You start by breaking your animation timeline into named segments, where each segment represents a distinct visual state. For a button, that might be an Idle loop on frames 0-10, a Hover transition on frames 11-20, and a Pressed effect on frames 21-35. Then you open the State Machine editor and register each segment as a formal state with its own playback rules (does it loop? play once and hold? reverse?). Finally, you create the inputs: variables like a boolean isHovered, a counter clickCount, or an event trigger. These act as the state machine's memory, tracking what's happened so it knows what to do next.

- Phase two is wiring: Connecting everything together. You draw transition lines between states and set the conditions that trigger them. Idle moves to Hover when isHovered equals true. Hover moves to Pressed on Mouse Down. You can stack multiple conditions on a single transition for more complex logic. The last step connects real user actions to your inputs: Mouse Enter sets isHovered to true, Mouse Leave sets it to false, Mouse Down increments clickCount. Once those connections are made, the state machine responds to live interaction.
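The two phases can be sketched in a few lines of Python. This is a conceptual model only, not the dotLottie state machine format or any player API. The segment frames, the inputs `isHovered` and `clickCount`, and the Idle-to-Hover and Hover-to-Pressed transitions come from the example above; the class name, the event names, and the two return transitions (Hover back to Idle, Pressed back to Idle on a hypothetical `OnComplete` event) are assumptions added so the loop closes.

```python
# Phase one: named timeline segments, state name -> (first frame, last frame).
SEGMENTS = {"Idle": (0, 10), "Hover": (11, 20), "Pressed": (21, 35)}

# Phase two: transitions as (source, target, condition on event and inputs).
TRANSITIONS = [
    ("Idle", "Hover", lambda event, inputs: inputs["isHovered"]),
    ("Hover", "Pressed", lambda event, inputs: event == "MouseDown"),
    # Assumed return paths, not spelled out in the text:
    ("Hover", "Idle", lambda event, inputs: not inputs["isHovered"]),
    ("Pressed", "Idle", lambda event, inputs: event == "OnComplete"),
]


class ButtonStateMachine:
    """Hypothetical sketch of a three-state button."""

    def __init__(self):
        self.state = "Idle"
        self.inputs = {"isHovered": False, "clickCount": 0}

    def fire(self, event):
        # Listeners: user actions update the inputs first.
        if event == "MouseEnter":
            self.inputs["isHovered"] = True
        elif event == "MouseLeave":
            self.inputs["isHovered"] = False
        elif event == "MouseDown":
            self.inputs["clickCount"] += 1
        # Then take the first transition out of the current state that matches.
        for source, target, condition in TRANSITIONS:
            if source == self.state and condition(event, self.inputs):
                self.state = target
                break
        return self.state, SEGMENTS[self.state]


button = ButtonStateMachine()
trace = [button.fire(e)[0] for e in ["MouseEnter", "MouseDown", "OnComplete"]]
# trace == ["Hover", "Pressed", "Idle"]
```

Each call to `fire` returns the active state and the frame range to play, which mirrors how a state is tied to a timeline segment.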

The critical part that changes everything for the design-to-dev handoff: all of this logic gets embedded directly in the exported .lottie file. When a developer drops that file into their project using a dotLottie player, it works out of the box on web, iOS, and Android. No reimplementing animation logic in code. No miscommunication between design and engineering. No back-and-forth Slack threads trying to describe how a hover animation should behave.

I want to be specific about why that matters in practice. Traditionally, a designer would create an animation, export it as a video or Lottie JSON file, and then a developer would need to code all the interactive behavior separately. The animation was decorative. The interaction was programmatic. They lived in different worlds. State Machines collapse those worlds into one file.

Prompt to State Machines: building interaction logic with plain language

Building complex state machine graphs from scratch can feel daunting when you’re new to the concept. You need to create inputs, define transitions, double-check every interaction path, and make sure the whole thing actually works before exporting. That’s a steep learning curve if you’ve never worked with state-based logic before.

Lottie Creator’s Prompt to State Machines feature addresses this directly. It’s an AI assistant that builds state machines from natural language descriptions. Lottie Creator also offers robust support, including technical help and user guidance, making it a reliable choice for both beginners and experienced users.

After creating your animation segments (the AI needs segments to work with), you describe the interaction you want in plain text. Something like, “When the user hovers, play the hover segment. On click, play pressed and return to idle.” The AI generates a complete state machine, including nodes, transitions, triggers, and conditions, as a visual node map you can review and refine. The platform also includes AI-powered tools like Motion Copilot, which helps users generate smooth keyframes based on text descriptions of desired motion.

Where State Machines show up in real product work

The use cases span wider than you might expect. Here are some examples:

- Interactive UI components are the obvious starting point. Buttons with idle, hover, pressed, and success states. Toggles that animate between on and off. Tabs that slide content in when selected. Accordions that expand and collapse smoothly. Input fields that shift between empty, focused, filled, and error states.

- Product onboarding flows benefit from step-by-step animations that react to user progress rather than playing on a fixed loop. When a user completes step two, the animation advances to step three. When they skip ahead, it catches up.

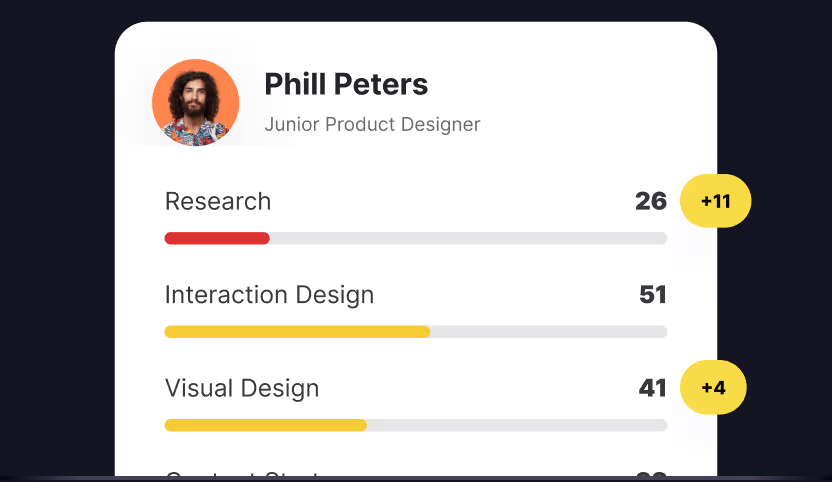

- Gamified interactions become straightforward to build visually: collect states, level-up animations, progress feedback, "unlock" moments. Any product with achievement mechanics can use state machines to make those moments feel responsive instead of canned.

- Responsive storytelling is also possible. Animations that branch based on user choice, all without writing code. Think of an interactive product tour where different user paths trigger different animation sequences.

When passive animation does the job

Not every animation needs a state machine. An easy test the LottieFiles team suggests: if you remove a particular animation and the interface breaks cognitively (users lose context, miss feedback, or can't track what changed), that animation was doing real UX work and might benefit from state-based interactivity. If removing it changes nothing about comprehension, it was visual garnish, and a simple playback animation is probably fine. That distinction keeps you from over-engineering. State Machines are powerful, but you don't need them for a simple loading spinner that loops.

How do Motion Tokens bring system-level thinking to animation?

State Machines and the Physics Simulator solve interaction and motion-quality problems. Motion Tokens solve the consistency problem.

If you’ve used Figma variables, the concept is familiar. You define a token, bind it to a property, and change the token to update everything that references it. One file. Infinite variations. Motion Tokens bring that same logic to animation.

After setting up your themes and token management, you can export animations in various formats including Lottie JSON, dotLottie, GIF, MP4, MOV, and WebM, making sharing and integration easy.

What can be tokenized

Motion Tokens cover a wide range of animation properties:

- Text properties: content, font, size, and alignment.

- Colors: solid fills, strokes, and multi-stop gradients.

- Transforms: position (X and Y coordinates), scale (vertical and horizontal), rotation (0-360 degrees), opacity, skew, and skew axis.

You create a token for any of these properties in Lottie Creator, and that property becomes editable at runtime through the Slots API. The designer defines what's changeable. The developer connects it to live data.

What this looks like in practice

In Lottie Creator, you access tokens through a small dropdown chevron that appears next to each property when hovered. Give the token a descriptive name so you can identify it later. After creating tokens, you manage them through the Motion Tokens panel on the floating toolbar.

Then comes the part that makes this genuinely useful for production work: themes. Group your tokens into preset collections (light mode, dark mode, a client's brand variant) and switch between them instantly. Themes can trigger at runtime or through State Machines. You can see this in action with a live CodePen demo that cycles through color and text token themes using a state machine.
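As a rough mental model of tokens and themes, the Python sketch below binds properties to token names and resolves them per theme. Everything in it is hypothetical: the token names, property paths, color values, and the `resolve` helper are illustrative assumptions, not the dotLottie file format or the Slots API.

```python
# Hypothetical bindings: animation property -> token name.
BINDINGS = {
    "background.fill": "surface_color",
    "label.fill": "text_color",
    "label.text": "cta_text",
}

# Themes are named sets of token values. A theme may override only some tokens.
THEMES = {
    "light": {
        "surface_color": "#FFFFFF",
        "text_color": "#111111",
        "cta_text": "Get started",
    },
    "dark": {
        "surface_color": "#111111",
        "text_color": "#FFFFFF",
    },
}


def resolve(theme_name, default_theme="light"):
    """Return concrete property values for a theme, falling back to defaults."""
    tokens = {**THEMES[default_theme], **THEMES[theme_name]}
    return {prop: tokens[token] for prop, token in BINDINGS.items()}


dark_props = resolve("dark")
# dark_props["background.fill"] == "#111111"
# dark_props["label.text"] == "Get started"  (inherited from the default theme)
```

The animation itself never changes here. Only the token values do, which is why one file can serve several themes.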

LottieFiles also added an AI feature for theming: describe a theme in plain text, and the AI generates the token values. You fine-tune manually from there. Not perfect every time, but a solid starting point that saves considerable setup time.

Once you export the animation to your workspace, you can preview it exactly as it will appear in production and update token values in real time to test every variant, without affecting the source file. Click "Reset all" to undo changes and return to the original values.

Why this changes the conversation with developers

Under the hood, Motion Tokens use the Slots API in dotLottie. Developers connect tokenized properties to live data at runtime using straightforward API calls. A score that updates in real time. A loading bar that reflects actual progress. A drag-and-drop layer tied to live position data.

The designer sets up the tokens. The developer connects the data. The animation never needs to be re-exported.
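The developer's half of that split might look something like the Python sketch below, which turns live app data into token values for a loading bar and a score label. The slot names `bar_scale_x` and `score_text` and the helper `progress_to_slots` are hypothetical, and the step that would actually pass these values to a dotLottie player is deliberately left out because it depends on the player in use.

```python
def progress_to_slots(progress, score):
    """Map live app data onto hypothetical token (slot) values.

    progress: completion fraction, expected in the range 0.0 to 1.0.
    score: an integer score to display.
    """
    clamped = max(0.0, min(1.0, progress))  # guard against out-of-range data
    return {
        "bar_scale_x": round(clamped * 100, 1),  # horizontal scale in percent
        "score_text": f"{score:,}",              # e.g. 12500 -> "12,500"
    }


slots = progress_to_slots(0.5, 12500)
# slots == {"bar_scale_x": 50.0, "score_text": "12,500"}
```

The mapping is plain data transformation, so it can be tested without an animation loaded at all.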

For design system teams, this solves a problem that’s been annoying for years. Instead of maintaining 10 versions of the same animation for 10 different contexts (light theme, dark theme, brand A, brand B, English, Spanish, Japanese), you maintain one file. The tokens handle the variation. Colors adapt to theme. Text adapts to language. Transforms adapt to screen size. One animation file scales across your entire product, across every platform.

Building a portfolio of interactive Lottie animations helps showcase your skills and achievements to potential clients or employers, making you stand out in the job market. You can also easily publish your polished animations to a community platform, exposing your work to a broader audience and enhancing your professional portfolio.

Combine Motion Tokens with State Machines and the leverage multiplies. You can switch between themes as part of an interaction. Light mode, dark mode, multiple brand variants, all from one file, without rebuilding anything.

Text layers are where all three features intersect naturally. A headline that bounces into view uses the Physics Simulator. A button label that changes on hover uses State Machines. A CTA that swaps language based on locale uses Motion Tokens. Lottie Creator lets you animate text properties like gradients, masks, and multi-stop color transitions directly, with instant previews as you build.

How does Lottie Creator MCP connect animation to real product logic?

Motion Tokens make animations adaptable. State Machines make them interactive. Lottie Creator MCP (Model Context Protocol) adds another layer by turning animation into something that can respond to context, automate repetitive work, and align with larger systems. Instead of building every motion detail manually, MCP allows Lottie Creator to connect with external tools, AI workflows, and structured design data to help generate animation logic faster.

In practice, this means MCP can assist with repetitive animation tasks that usually take time to build by hand, like generating repeated shapes, creating patterned motion, spacing elements consistently, or producing variations of the same interaction. Rather than manually animating every layer, designers can describe intent and let MCP help construct the animation framework. It can also read inputs from an existing design system, such as spacing rules, color tokens, typography, or component structure, and generate animations that feel consistent with the product’s visual language. The result is motion that not only reacts to interaction and live data, but also fits naturally within the broader system it belongs to. MCP shifts animation from a standalone asset into something that can be assisted, automated, and context-aware from the start.

How does Lottie Creator fit with the tools you already use?

Lottie Creator runs in the browser on smartphones, tablets, and desktops, so you can animate wherever you are. For the full feature set, though, a desktop is the best environment for creating and editing animations.

If you’re already working in After Effects, Lottie Creator doesn’t ask you to abandon that workflow. Many designers create their core animations in After Effects and then bring them into Lottie Creator to layer on interactivity. You get the precision and expressiveness of After Effects for crafting motion, combined with Lottie Creator’s State Machine and Motion Token capabilities for making that motion responsive.

For teams working in Figma, LottieFiles offers a Figma plugin that supports interaction export. As of April 2026, the plugin can detect triggers like click, tap, hover, mouse enter/leave, press and hold, and after delay, then map them automatically to State Machine logic. You design the interaction in Figma’s prototyping tools, and the plugin translates that into dotLottie state machine format on export.

You can also import SVGs, Lottie JSON, and dotLottie files directly into Lottie Creator. Additionally, you can upload various media files, making it easy to bring in assets for animation.

Export options include dotLottie, Lottie JSON, optimized Lottie, GIF, MP4, MOV (up to 4K), and WebM. CDN sharing links are available for quick embedding. Users can also download finalized animations in their preferred format for sharing or integration.

The key thing to note is that Lottie Creator is where your animation transforms from a passive clip into an interactive experience, regardless of where the raw animation originated. And everything exports as a single .lottie file that works across web, iOS, and Android through the dotLottie player.

Should you take the Lottie Creator certification course?

All 3 features covered in this post (and more) are taught in the Lottie Creator certification course. It’s a short self-paced program split across 6 sections and 12 lessons. You build two fully animated projects from scratch: a logo animation and an app-ready UI component. Then you turn them into interactive animations using state machines, make them scalable with motion tokens, and package everything for developer handoff.

The course structure covers the full workflow:

- Introduction (2 lessons, ~3 min): Lottie Creator’s interface and core concepts.

- Creation (2 lessons, ~13 min): Building your first animation, plus interactive motion with AI tools (Prompt to Vector, Motion Copilot) and the Physics Simulator.

- Interactivity (4 lessons, ~16 min): Segments and states in State Machines, building a state machine with interactions, building state machines with AI (Prompt to State Machines), and exporting interactive animations.

- Motion Tokens (2 lessons, ~8 min): Introduction to tokens and what they enable in real products.

- Handoff (1 lesson, ~2 min): Developer handoff workflow.

- Final challenge and certification (1 lesson, ~2 min): Submit an animation assignment for review.

You’ll also engage with friends and peers in a supportive learning environment, sharing feedback and collaborating on projects.

To earn the certification, you submit a project that the LottieFiles team reviews. It’s a real credential, not just a badge for watching videos. Ready to jump in? You can try it now for free.

Who gets the most value from this

Motion designers and animators who want to go beyond timeline animation and ship interactive components that developers can use directly. If you’re tired of the “design it, hand it off, watch it get rebuilt in code” cycle, this course addresses that gap head-on.

Lottie Creator allows users to create, edit, and manage animations all in one place, making it user-friendly for both beginners and experienced designers. Animation tools like Jitter and Lottie Creator let users create animations directly in their web browsers, providing instant previews and high-quality exports in various formats, including GIF and MP4. Lottie animations are widely used in web design and marketing to enhance user engagement and storytelling across various platforms. Their lightweight nature allows for quick loading times and smooth performance, making them ideal for mobile applications and websites.

Developers and design system contributors who want to understand how tokenized, interactive Lottie animations are structured. Knowing what’s inside a .lottie file with embedded state machine logic and motion tokens makes handoff smoother for everyone.

Product designers who are curious about motion but haven’t had a structured way to learn it. The short time commitment is low-risk, and you’ll walk away with concrete projects.

What if you need the theory behind the practice?

The Lottie Creator course teaches you how to build interactive motion. But knowing which components benefit most from that motion, and how they should behave across states like hover, focus, pressed, and disabled, requires solid UI component knowledge.

Uxcel's Core UI Components course covers exactly that ground. It breaks down buttons, forms, cards, selection controls, menus, and modals, with a focus on interaction states and the reasoning behind each design decision. If you're going to animate a button's hover and pressed states in Lottie Creator, understanding how those states should look and function from a UI perspective makes your motion work sharper.

The Advanced UI Components course goes further into the components where motion plays a particularly visible role: tabs, search interfaces, tooltips, breadcrumbs, tables, and navigation patterns. These are the elements where well-crafted transitions and state changes make the difference between an interface that feels polished and one that feels flat.

Both courses are interactive, built around real design decisions rather than passive video lectures, and you can practice in five minutes a day. Pairing component design knowledge with Lottie Creator's tooling gives you a practical advantage: you'll know not just how to build interactive animations, but where they add the most value in a product interface.

In a nutshell

Lottie Creator’s Physics Simulator, State Machines, and Motion Tokens: none of them is individually earth-shattering. But together they address a huge problem in motion design: the moment you export an animation, you lose control of it. A developer has to pick up where you left off, reconstruct the logic you had in your head, and hope the result matches what you built.

With this workflow, that handoff gets a lot quieter. The file carries the behavior with it. There's less to explain, less to reconstruct, less to get wrong. That's a genuinely better way to work. And the know-how is available right now, for free. Take the Lottie Creator certification course and build your next realistic interactive animation today.