You have spent weeks preparing for your UX research job interview. You have polished your portfolio, rehearsed your case studies, and memorized definitions of qualitative versus quantitative research. But when the job interview begins, the interviewer asks something you did not expect: “Walk me through how you would design a study to reduce churn by 15%.” Suddenly, the frameworks you memorized feel useless. You stumble through an answer, knowing it is not landing.

This scenario plays out constantly. The interview process for UX research roles has evolved. Hiring managers no longer just want to hear that you know what a usability test is. They want to see you think on your feet. They want evidence that you can connect research to business outcomes, navigate stakeholder politics, and make methodological trade-offs under pressure. The gap between what candidates prepare for and what interviewers actually ask has never been wider. Understanding the hiring process, including multiple interview rounds, research challenges, and evaluation criteria, has become essential for success.

The problem is that most interview prep resources are outdated. They cover the basics but miss the strategic and technical questions that separate candidates who get offers from those who do not. They ignore the growing expectation that researchers can work with analytics, understand experimental design, and communicate findings that drive real decisions.

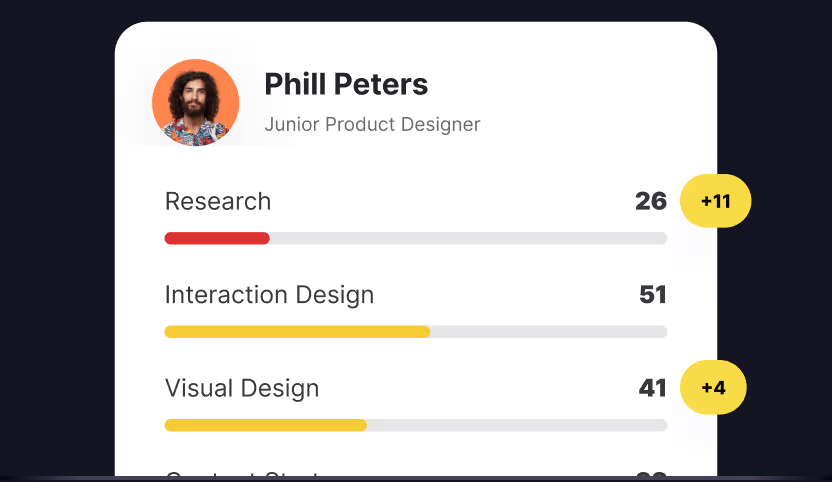

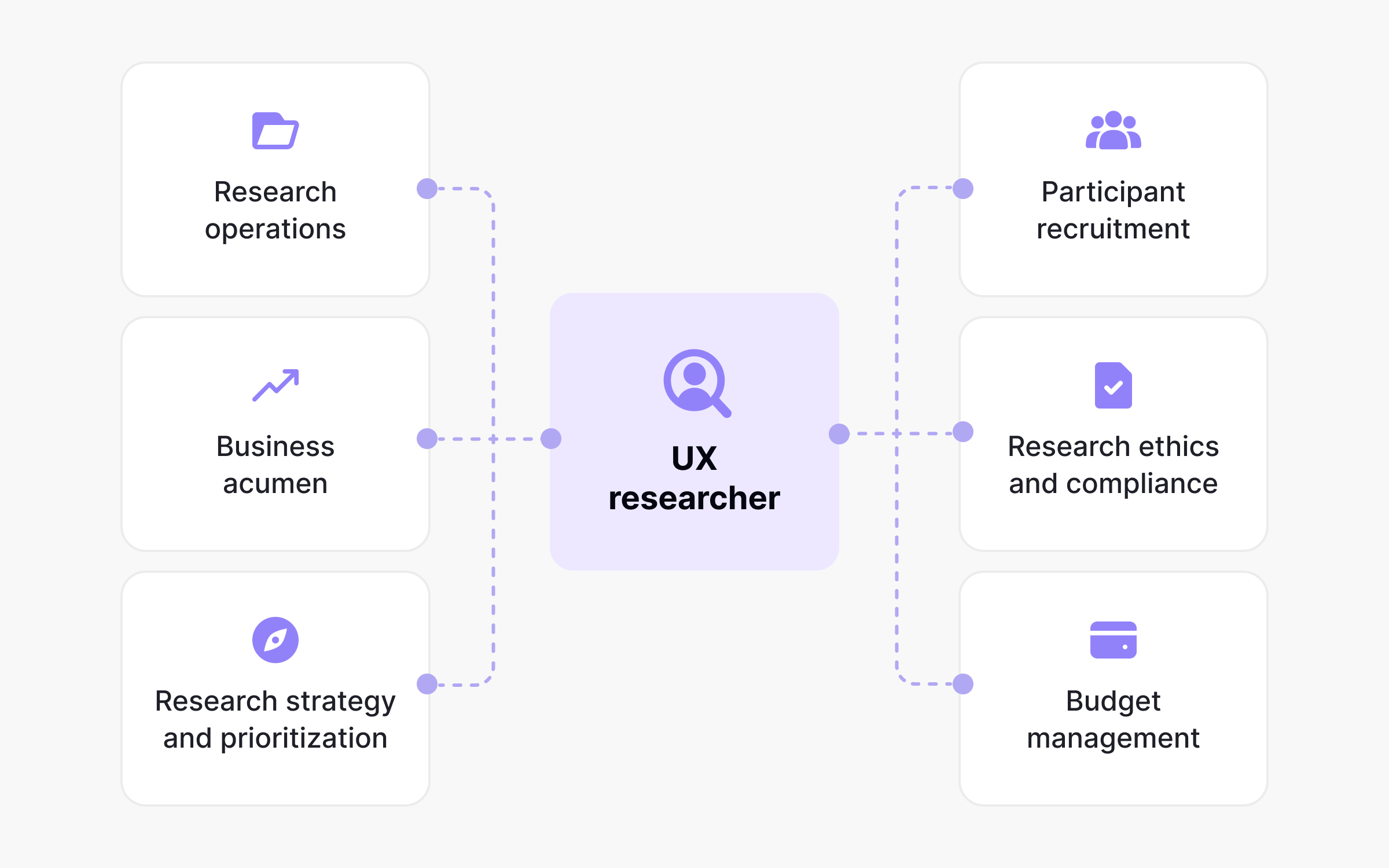

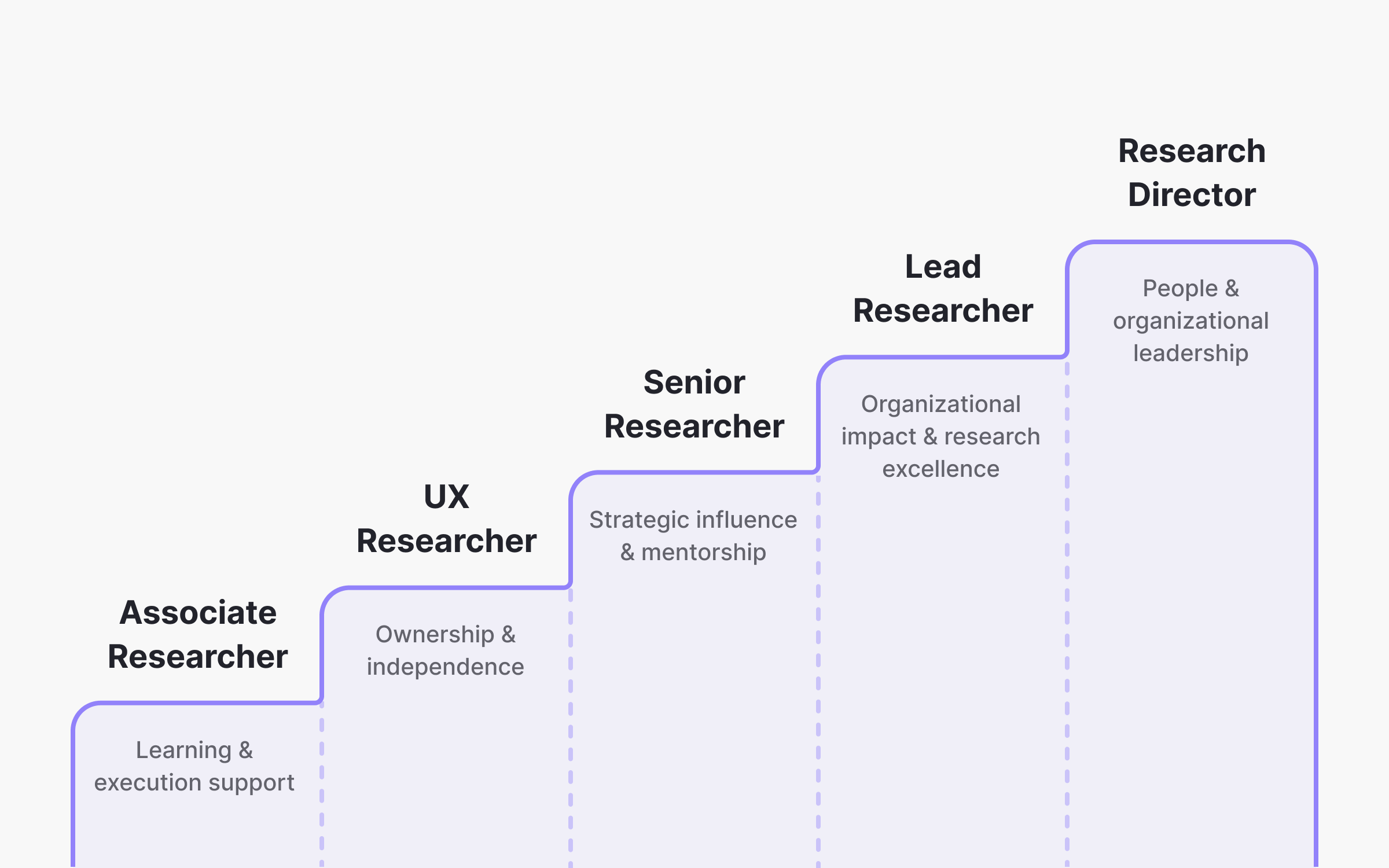

This guide closes that gap. It covers 56 UX researcher interview questions across the user researcher career path, from foundational concepts for entry-level roles to strategic leadership questions for senior positions. Many of these questions are drawn directly from Uxcel Pulse, our skills assessment that provides a tailored evaluation of your capabilities based on your specific role and experience level within product teams. Pulse measures the competencies that actually matter for researchers, which means these questions reflect what hiring managers genuinely care about.

You will find questions on UX research methods, stakeholder communication, analytics interpretation, ethics, and the methodological trade-offs that come up in every real interview. Each question includes a strong example response and insight into why interviewers ask it, so you understand the thinking behind the answer, not just the words.

Who this guide is for

Early-career researchers (0–2 years experience)

- Topics: Core research methods, usability testing basics, qualitative vs. quantitative research

- Question types: Definitional, scenario-based, methodology selection

- This guide is especially helpful for candidates preparing for entry-level positions in UX research, offering targeted interview questions and tips to help you demonstrate your potential, experience, and soft skills during interviews.

Intermediate researchers (2–5 years experience)

- Topics: Research planning, stakeholder management, synthesis and analysis, mixed methods

- Question types: Behavioral, case study walkthroughs, trade-off discussions

Senior and lead researchers (5+ years experience)

- Topics: Research strategy, team leadership, organizational influence, research operations

- Question types: Strategic, leadership scenarios, cross-functional alignment

UXR interview questions at a glance

💡Pro tip: Practice answering questions out loud. The difference between knowing an answer and articulating it clearly under pressure is significant. Record yourself if possible and review for filler words and clarity.

General UX researcher interview questions

Every UX research interview starts here. These questions appear regardless of whether you are applying for a junior role or a director position, and they set the tone for everything that follows. In a typical UX research job interview, how you answer matters as much as what you say: interviewers look for candidates who can clearly articulate their thinking and technical knowledge. Structured answers with concrete examples and frameworks help you stand out from other candidates. Interviewers use these questions to assess not only how you think about the discipline, but also your technical proficiency, behavioral history, and ability to drive business impact through user insights. A strong answer to “What is UX research?” signals that you understand research as a strategic function. A weak answer suggests you see it as a checkbox. Nail these fundamentals and you build credibility for the harder questions ahead.

1. What is UX research and why does it matter?

How to answer: UX research is the systematic study of users and their needs to inform product and UX design decisions, ultimately improving user experience. It combines qualitative methods like interviews and usability testing with quantitative approaches like surveys and analytics analysis. The goal is to reduce assumptions and build products that actually solve user problems. Without research, teams end up designing based on internal opinions rather than external reality, which leads to features nobody uses and problems nobody asked to be solved.

Why They Ask This: Interviewers want to confirm you understand UX research beyond surface-level definitions. They are checking whether you see research as a strategic function that drives business outcomes or just a checkbox activity.

2. How do you decide which research method to use?

Strong answer: Method selection depends on three factors: the research question, the stage of product development, and available constraints like time and budget. Demonstrating a solid understanding of user research methods is essential, as interviewers often ask technical knowledge questions to assess your ability to select and apply the right techniques. If I need to understand why users behave a certain way, I lean toward qualitative methods like contextual inquiry or in-depth interviews. If I need to measure how many users experience a problem or validate a hypothesis at scale, quantitative methods like surveys or A/B testing work better. Early in the product lifecycle, generative research helps identify opportunities. Later, evaluative research validates solutions. I also consider practical constraints. A diary study provides rich longitudinal data but takes weeks. A usability test can deliver actionable insights in days.

Why They Ask This: This reveals your methodological range and strategic thinking. Interviewers want researchers who choose methods based on research goals, not personal preference or habit.

3. Describe a research project you are particularly proud of.

What a good response sounds like: I led a mixed-methods study to understand why onboarding completion rates had dropped 15% over two quarters. Drawing on my research skills, I started with analytics to identify where users were abandoning the flow, then conducted 12 user interviews to understand the underlying friction. The data revealed that a recent feature addition had made the process feel overwhelming for new users. I synthesized findings into a prioritized recommendation framework and presented to stakeholders. The team implemented a progressive disclosure approach, and completion rates recovered within six weeks. Discussing past projects like this allows me to demonstrate my research skills and decision-making process. Including metrics in my portfolio, such as the improvement in completion rates, helps show the tangible impact of my research on user engagement and product success. What made this project meaningful was connecting quantitative signals to qualitative understanding and seeing the direct impact on metrics.

Why They Ask This: Interviewers assess your ability to scope projects, choose appropriate methods, synthesize findings, and drive outcomes. They also gauge your communication skills and how you measure success.

4. How do you handle situations where stakeholders disagree with your research findings?

Example response: First, I try to understand their perspective. Sometimes disagreement comes from additional context I do not have, and sometimes it reflects organizational priorities I need to navigate. I ask questions to understand their concerns, keeping in mind that different stakeholders, such as product managers, engineers, and designers, may have unique priorities and perspectives. Stakeholder dynamics can affect how these groups interact with the UX research team, so I adapt my approach accordingly. If they question the methodology, I walk through my approach and sample selection. If they question the interpretation, I show the underlying data and invite them to draw their own conclusions. I make sure to communicate my findings clearly to different stakeholders, adapting my communication style as needed to ensure everyone understands the insights. Often, presenting raw quotes or video clips is more persuasive than my summary. If disagreement persists, I document the findings clearly and recommend revisiting the decision if certain conditions occur. Research is about informing decisions, not dictating them.

5. What is the difference between qualitative and quantitative research?

Your answer might sound like: Qualitative research explores the why behind behavior through methods like interviews, observation, and diary studies. It generates rich, contextual insights but with smaller sample sizes. Quantitative research measures the what and how much through surveys, analytics, and experiments. It provides statistical confidence but less depth. The most effective research programs use both. Qualitative findings can generate hypotheses that quantitative methods validate at scale. Quantitative data can surface patterns that qualitative research explains. They are complementary, not competing approaches.

Why They Ask This: This foundational question reveals whether you understand research fundamentals and can articulate them clearly. Weak answers suggest limited methodological understanding.

6. How do you ensure research findings lead to action?

Here’s how to approach it: Actionability starts before the research begins. I ensure a clear understanding of research goals and stakeholder needs by involving stakeholders early in the process. I start with decision-driven research questions, so the research is tied directly to the decisions the organization needs to make and leads to actionable outcomes. During synthesis, I focus on implications rather than just observations. Instead of saying “users struggle with navigation,” I frame findings as “users need clearer wayfinding, which could be addressed through persistent breadcrumbs or simplified information architecture.” I prioritize recommendations by impact and effort, and I follow up after presenting to understand barriers to implementation. Sometimes findings do not lead to action because of competing priorities, and that is okay. My job is to ensure the insights are available and understood when the timing is right.

Why They Ask This: Interviewers want researchers who drive outcomes, not just produce reports. This question separates researchers who see their job as delivering insights from those who see it as enabling better decisions.

7. How do you stay current with UX research trends and methods?

A solid response: I follow several approaches. I read publications like Nielsen Norman Group articles and research-focused newsletters. I participate in communities like ResearchOps and Mixed Methods where practitioners share challenges and solutions. I attend conferences when possible, though I find smaller workshops often provide more practical value. I also prioritize professional development by taking online courses and pursuing a UX research certificate to stay current with new trends and methods, which employers value as a sign of ongoing learning. I also learn by doing. When I encounter a method I have not used, I look for opportunities to pilot it on lower-stakes projects. Recently, I have been exploring how AI tools are changing research workflows, particularly for synthesis and transcription.

Why They Ask This: The field evolves constantly. Interviewers want researchers who invest in their own development and can bring new approaches to the team. Employers often prefer candidates who demonstrate dedication to professional development and have researched the employer.

Beginner UX researcher interview questions

If you are interviewing for your first UX research role or transitioning from a related field like design or product management, expect these questions to dominate your interviews. For those seeking entry-level positions, it's important to demonstrate your potential by highlighting relevant experience, UX research skills, and your ability to learn quickly. Hiring managers want to know that you understand core methods well enough to execute them independently. They are not looking for perfection. If you have a background in UX design, emphasize how your experience in designing solutions, conducting usability tests, and applying research findings can be leveraged in UX research to enhance user experience and improve products. They are looking for evidence that you can run a usability test without hand-holding, write a screener that actually filters participants, and synthesize findings into something useful. Show that you have done the work, even if it was in a class project or freelance context, and explain your reasoning clearly.

8. What is a usability test and how do you run one?

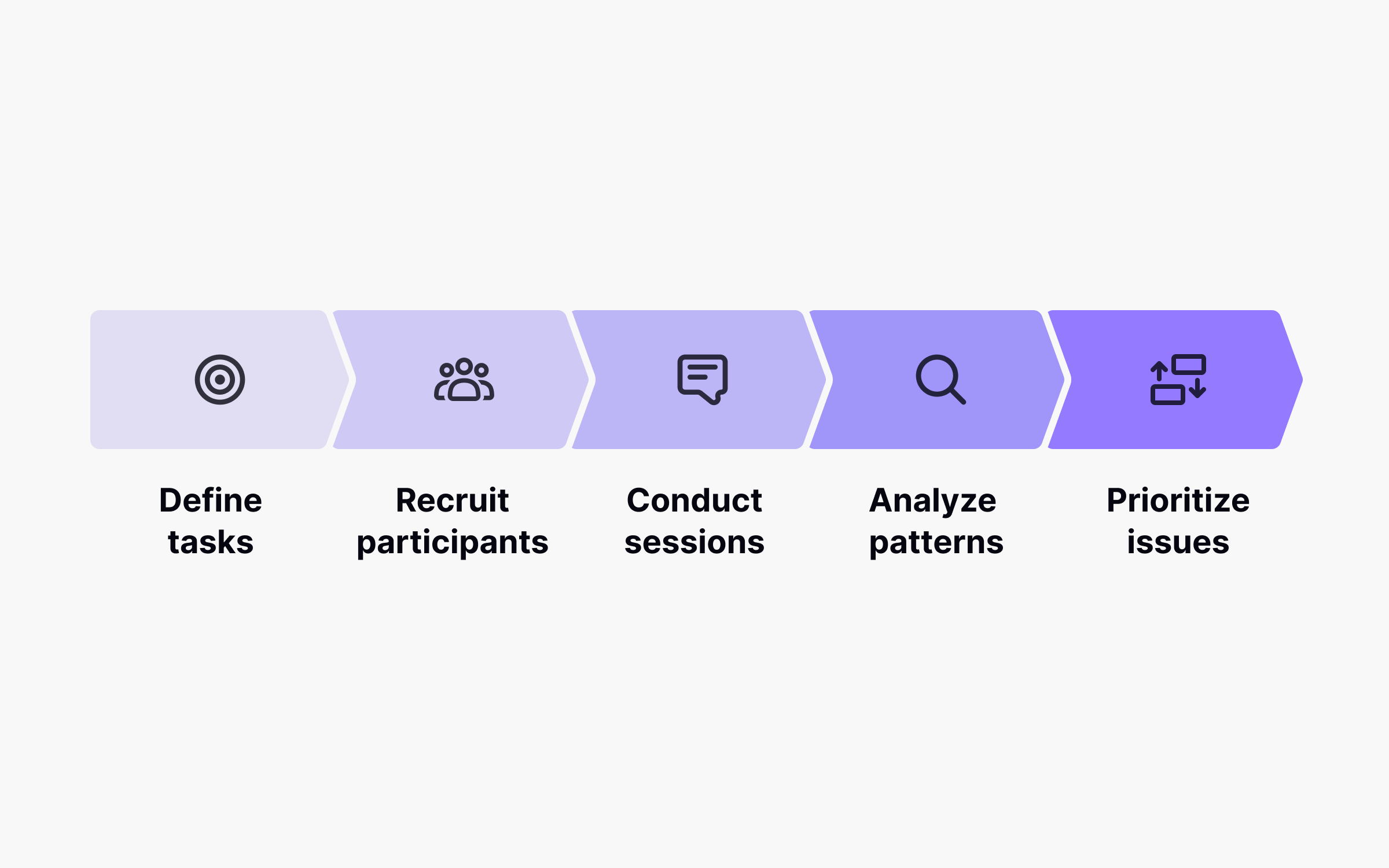

How to answer: A usability test observes real users attempting to complete tasks with a product to identify friction points and improvement opportunities. User testing is a key part of conducting research in the UX design process, as it helps evaluate system performance and gather actionable insights. To run one, I start by defining specific research questions and the tasks that will answer them. I recruit participants who match the target user profile, typically five to eight for qualitative insights. During sessions, I give participants tasks without leading them toward specific actions and observe where they struggle. I encourage thinking aloud so I understand their mental model. Afterward, I analyze patterns across sessions and prioritize issues by severity and frequency.

Why They Ask This: Usability testing is a core UX research method. Interviewers want to confirm you understand the mechanics and can execute independently.

9. What is the difference between generative and evaluative research?

Strong answer: Generative research happens early in the design process to discover opportunities and understand user needs before solutions exist. Methods include contextual inquiry, interviews, and diary studies. Evaluative research assesses specific designs or prototypes to determine whether they solve the intended problem. Methods include usability testing, A/B testing, and surveys. A research program needs both. Generative research ensures you are solving the right problem. Evaluative research ensures you are solving it effectively.

Why They Ask This: This tests your understanding of research's role across the product lifecycle. Researchers who only know evaluative methods limit their strategic value.

10. How many participants do you need for a usability study?

What a good response sounds like: For qualitative usability studies, five to eight participants typically reveal 80% or more of significant usability issues, based on well-established research by Nielsen and Landauer. However, the right number depends on user segment diversity. If I am testing with three distinct user types, I might need five per segment. For quantitative usability studies measuring task success rates or time-on-task with statistical confidence, I need larger samples, often 30 or more participants. The key is matching sample size to research goals rather than applying a universal rule.

Why They Ask This: This reveals whether you understand sample size rationale beyond memorizing a number. Interviewers want researchers who can justify methodological decisions.
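If an interviewer presses on where the five-participant guideline comes from, it helps to know the arithmetic behind it. The sketch below uses the model usually attributed to Nielsen and Landauer, in which the expected share of issues found is 1 - (1 - L)^n. The function name and the default L = 0.31 (the average detection rate commonly cited from their work) are assumptions for illustration; your own product may have a very different rate.

```python
def share_of_issues_found(n_participants: int, detection_rate: float = 0.31) -> float:
    """Expected share of usability issues observed at least once across n sessions.

    Model: 1 - (1 - L)^n, where L is the chance that a single participant
    exposes a given issue. L = 0.31 is an assumed average, not a constant.
    """
    if n_participants < 0:
        raise ValueError("n_participants must be non-negative")
    if not 0.0 <= detection_rate <= 1.0:
        raise ValueError("detection_rate must be between 0 and 1")
    return 1.0 - (1.0 - detection_rate) ** n_participants


# Compare a few study sizes under the assumed detection rate
for n in (1, 3, 5, 8):
    print(f"{n} participants -> {share_of_issues_found(n):.1%} of issues")
```

Under that assumption, five participants surface roughly 84% of issues, and each additional session adds less than the one before. A lower detection rate, for example when issues only affect one user segment, pushes the required sample up, which is why the answer above ties the number to segment diversity.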

11. What should you avoid when preparing a prototype for usability testing to ensure unbiased results?

Example response: The most important thing is avoiding hints or leading instructions that guide participants toward expected behaviors. If I tell someone "click the blue button to see your dashboard," I have eliminated the chance to learn whether they would find that button naturally. I also avoid testing only features I expect users to like, which creates confirmation bias. I do not provide detailed explanations of how the prototype works because that removes the opportunity to observe learnability. And I never test exclusively with team members, even for quick feedback, because they have context that real users lack. The goal is observing authentic behavior, and anything that shapes that behavior compromises the research.

Why They Ask This: This question from Uxcel's prototyping assessment tests whether you understand that methodology affects validity. Interviewers want researchers who can run studies that produce reliable, actionable insights.

12. What is a research screener and why is it important?

Example response: A screener is a questionnaire used to qualify potential research participants before including them in a study. It ensures you recruit people who match your target user profile and filters out professional survey takers or people who do not meet criteria. Good screeners include qualifying questions disguised among neutral ones so respondents cannot guess the "right" answer. For example, instead of asking "Do you use project management software?" I might ask "Which of the following tools do you use regularly?" with project management tools mixed among other options. Screeners directly impact research validity. Wrong participants produce misleading insights.

Why They Ask This: Recruitment quality determines research quality. Interviewers want to know you understand this and can design effective screeners.
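The disguised-question idea in the answer above can be expressed as simple qualification logic. This is a minimal sketch: the tool names, the "None of these" option, and the rule that rejects contradictory answers are all hypothetical choices made for illustration, not a standard screener specification.

```python
from typing import Iterable

# Hypothetical options for "Which of the following tools do you use regularly?"
QUALIFYING_TOOLS = {"Asana", "Trello", "Jira"}       # the tools we care about
DISTRACTOR_TOOLS = {"Photoshop", "Spotify", "Excel"}  # neutral options that hide the target
NONE_OPTION = "None of these"


def passes_screener(selected: Iterable[str]) -> bool:
    """Return True when the respondent regularly uses a project management tool."""
    choices = set(selected)
    # A tool selected together with "None of these" is contradictory,
    # which we treat here as a sign of careless responding.
    if NONE_OPTION in choices and len(choices) > 1:
        return False
    # Qualify on any overlap with the target tools
    return bool(choices & QUALIFYING_TOOLS)


print(passes_screener(["Trello", "Spotify"]))   # qualifies
print(passes_screener(["Spotify", "Excel"]))    # does not qualify
```

Because the respondent sees one mixed list, they cannot tell which selections qualify them, which is the point of disguising the question.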

13. How do you take notes during user research sessions?

Your answer might sound like: I use a structured approach that separates observations from interpretations. During sessions, I capture verbatim quotes, specific behaviors, and timestamps for key moments. I avoid writing interpretations in the moment because they can bias what I notice next. I use shorthand and abbreviations to keep pace with conversation. When possible, I record sessions so I can return to specific moments during analysis. If I have a note-taker, I brief them on what to capture and review notes together immediately after sessions while memory is fresh.

Why They Ask This: Note-taking quality affects synthesis quality. Interviewers assess whether you have a systematic approach or rely on memory and general impressions.

14. What is the difference between an interview and a contextual inquiry?

Here’s how to approach it: An interview is a conversation where I ask questions and the participant responds, typically in a neutral setting. Open-ended questions let participants share their motivations and experiences in their own words, a point worth making when you answer UXR background questions in a job interview. A contextual inquiry observes participants in their natural environment while they perform real tasks, with questions woven into the observation. Contextual inquiry reveals behaviors people cannot articulate in interviews because they do not consciously notice them. For example, a user might say they check email “a few times a day” in an interview, but contextual inquiry might reveal they check it 30 times. I use interviews when I need to explore attitudes, motivations, and past experiences. I use contextual inquiry when I need to understand actual behavior and workflow.

Why They Ask This: This tests methodological understanding and your ability to match methods to research goals.

15. How do you write effective research questions?

A solid response: Effective research questions are specific, answerable, and actionable. I start by understanding what decisions the research needs to inform, then work backward to questions that would provide the necessary insight. When crafting research and interview questions, I consider users' mental models to ensure the questions align with how users perceive and process information. I avoid questions that are too broad, like “What do users think of our product?” Instead, I aim for questions like “What factors influence a user’s decision to upgrade from free to paid?” To illustrate, I often use a specific scenario from past projects to show how I tailored questions to address real-world challenges and decision-making. I also distinguish between research questions, which guide the study, and interview questions, which I ask participants. Research questions can be abstract. Interview questions must be concrete and conversational.

Why They Ask This: Research question quality determines study quality. Interviewers want researchers who can scope effectively.

16. What is the primary use of analytics tools like Mixpanel or Google Analytics in UX research?

How to answer: The primary use is tracking user behavior and measuring product performance. These tools show me what users actually do, not just what they say they do. I use them to identify patterns like which features get used most, where users drop off in flows, and how behavior differs across segments. I organize research data from analytics tools into actionable insights that inform product improvements and design decisions. But I treat analytics as a starting point, not an answer. High bounce rates or low feature adoption tell me something is wrong but not why. That is where qualitative research comes in. The most effective researchers combine behavioral analytics with direct user feedback to build complete understanding.

Why They Ask This: This question from Uxcel’s analytics tools assessment checks whether you understand the role of quantitative data in research. Interviewers want researchers who can work with product data, not just conduct interviews.
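To make "where users drop off in flows" concrete, here is a small sketch of the calculation you might run on exported step counts. The step names and numbers are invented for illustration, and the helper `drop_off_by_step` is hypothetical; it does not call any Mixpanel or Google Analytics API.

```python
# Hypothetical counts of users reaching each onboarding step
funnel = [
    ("Visited signup page", 1000),
    ("Created account", 620),
    ("Completed profile", 410),
    ("Finished onboarding", 287),
]


def drop_off_by_step(steps):
    """Return (transition label, share of users lost) for each adjacent pair of steps."""
    results = []
    for (prev_name, prev_count), (name, count) in zip(steps, steps[1:]):
        # Guard against an empty step so we never divide by zero
        lost_share = 1 - count / prev_count if prev_count else 0.0
        results.append((f"{prev_name} -> {name}", lost_share))
    return results


transitions = drop_off_by_step(funnel)
worst_transition = max(transitions, key=lambda item: item[1])

for label, lost_share in transitions:
    print(f"{label}: {lost_share:.0%} drop-off")
print("Largest drop-off:", worst_transition[0])
```

The output tells you which transition to investigate first. It does not tell you why users leave there, which is where interviews or usability sessions come in.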

17. How do you recruit research participants?

Strong answer: Recruitment strategy depends on the target audience. For existing users, I work with product or customer success teams to identify candidates from the user base, often using behavioral criteria from analytics. For prospective users or specific segments, I use recruitment platforms like User Interviews, Respondent, or specialized panels. For B2B research, LinkedIn outreach and customer referrals often work better than panels. I always over-recruit by 20–30% to account for no-shows. The screener is critical regardless of source to ensure participants actually match criteria.

Why They Ask This: Recruitment is often the hardest part of research. Interviewers want to know you can solve this problem independently.
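The over-recruiting rule of thumb is easy to turn into a number. This sketch assumes the buffer is expressed as a whole percentage and rounds up, since you cannot schedule a fraction of a participant; the function name `participants_to_recruit` is made up for this example.

```python
def participants_to_recruit(target_sessions: int, buffer_pct: int = 25) -> int:
    """Number of people to schedule so that no-shows still leave enough sessions.

    buffer_pct is the over-recruitment margin as a whole percentage
    (the answer above suggests 20 to 30).
    """
    if target_sessions < 0 or buffer_pct < 0:
        raise ValueError("target_sessions and buffer_pct must be non-negative")
    # Integer ceiling division avoids floating-point rounding surprises
    return (target_sessions * (100 + buffer_pct) + 99) // 100


for pct in (20, 25, 30):
    print(f"8 sessions with a {pct}% buffer -> recruit {participants_to_recruit(8, pct)}")
```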

18. What is the most important component of an effective user persona?

What a good response sounds like: The most important component is goals, motivations, and pain points that drive user behavior. Demographics like age and location provide context, but they do not explain why users make decisions or what problems they need solved. A persona that tells me "Sarah is 34 and lives in Chicago" is less useful than one that tells me "Sarah needs to complete expense reports quickly because delays affect her team's budget approval." I focus personas on behavioral patterns and decision drivers because those directly inform design. The fictional name and photo help stakeholders remember and empathize with the persona, but the behavioral insights are what make it actionable.

Why They Ask This: This question from Uxcel's personas assessment tests whether you understand what makes research artifacts useful. Interviewers want researchers who create deliverables that drive decisions, not just documentation.

19. What is affinity mapping and what is its main purpose?

What a good response sounds like: The main purpose of affinity mapping is to find common themes among research findings. I use it as a synthesis technique where I group related observations, quotes, or data points into clusters that reveal patterns. I typically use sticky notes or digital equivalents to capture individual data points, then iteratively group them based on relationships that emerge. It works well for making sense of qualitative data from interviews or usability tests when patterns are not obvious upfront. The process is collaborative, so I often involve team members to reduce individual bias in interpretation. Affinity mapping helps move from raw data to themes that can inform design decisions.

Why They Ask This: This question from Uxcel's research analysis and synthesis assessment tests whether you have methods for making sense of qualitative data. Synthesis is where research value is created.

20. What is evolutionary prototyping and how does it relate to research?

Here's how to approach it: Evolutionary prototyping is the practice of developing a system incrementally, using feedback from each iteration to shape the next version until it becomes the final product. Unlike throwaway prototyping where you build something quick to test and discard, evolutionary prototypes grow into the actual product. For researchers, this means research is continuous, not a one-time activity. Each prototype version gets tested, findings inform the next iteration, and the cycle repeats. This approach requires researchers to deliver insights quickly enough to inform rapid development cycles. It also means accepting that some research will validate decisions rather than inform them, because the team has already moved forward.

Why They Ask This: This question from Uxcel's prototyping assessment tests whether you understand how research integrates with modern product development. Interviewers want researchers who can work in iterative environments, not just waterfall processes.

21. When is it most appropriate to create a high-fidelity prototype for research?

Example response: High-fidelity prototypes are most appropriate when demonstrating the full functionality of the final product, typically in later stages of design when core concepts have been validated. I would not use high-fidelity prototypes during initial brainstorming or for gathering early-stage feedback because they take significant time to create and can anchor stakeholders and participants on visual details rather than core functionality. The investment in high-fidelity prototypes makes sense when you need to test realistic interactions, validate visual design decisions, or present to stakeholders who struggle to imagine the final experience from wireframes. For early concept testing, lower-fidelity prototypes allow faster iteration and focus feedback on the right questions.

Why They Ask This: This question from Uxcel's prototyping assessment tests whether you understand how to match prototype fidelity to research goals. Interviewers want researchers who choose methods strategically, not by default.

Intermediate UX researcher interview questions

At this level, interviewers assume you can run studies. Now they want to know if you can run the right studies. These questions probe your ability to prioritize competing requests, manage stakeholders who disagree with your findings, synthesize messy data into clear insights, and measure whether your research actually changed anything. The shift from beginner to intermediate is less about methods and more about judgment. Can you make good decisions when there is no obvious right answer? Can you adapt when timelines compress or stakeholders push back?

Collaboration is also a key focus at this stage, as UX researchers are expected to work closely with other team members such as designers, product managers, engineers, and stakeholders to integrate research insights into the broader project workflow. Interviewers often include collaboration questions to assess how well you work with cross-functional teams, including designers and product managers. Be prepared to showcase your ability to collaborate with various teams and demonstrate empathy, communication, and strategic partnership during interviews. Your answers should demonstrate that you have navigated these situations before and learned from them.

22. How do you prioritize research requests when you have limited capacity?

Example response: I evaluate requests against three criteria: strategic alignment, decision urgency, and feasibility. Strategic alignment means the research supports key product or business objectives. Decision urgency means a real decision depends on this research and will happen soon. Feasibility considers whether I can deliver quality insights within the timeline. I also look for opportunities to combine requests. If three teams need to understand the same user segment, one well-designed study might serve all of them. When prioritizing, I consider the needs of different stakeholders, such as product managers, designers, engineers, and users, to ensure research efforts address their unique goals and challenges. I also use decision-driven research questions to make sure research priorities are directly aligned with upcoming organizational decisions. When I cannot accommodate a request, I try to offer alternatives like lightweight methods, existing research that partially answers the question, or guidance for the team to conduct basic research themselves.

Why They Ask This: Resource constraints are universal. Interviewers want researchers who can make strategic trade-offs rather than simply saying yes to everything or no without alternatives.
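One way to show an interviewer that the three criteria above are more than a slogan is to describe how you would score requests against them. The sketch below is an assumption-heavy illustration: the 1 to 5 scale, the equal weighting, and the request names are all hypothetical, and a real team would likely tune the weights.

```python
from dataclasses import dataclass


@dataclass
class ResearchRequest:
    name: str
    strategic_alignment: int  # 1 (low) to 5 (high)
    decision_urgency: int     # 1 (no decision pending) to 5 (decision imminent)
    feasibility: int          # 1 (hard to deliver well) to 5 (easy to deliver well)

    def score(self) -> int:
        # Equal weights are an assumption; adjust if one criterion matters more
        return self.strategic_alignment + self.decision_urgency + self.feasibility


requests = [
    ResearchRequest("Competitor teardown", 2, 1, 5),
    ResearchRequest("Checkout redesign validation", 5, 4, 4),
    ResearchRequest("Enterprise onboarding discovery", 4, 5, 2),
]

# Highest combined score first
ranked = sorted(requests, key=lambda request: request.score(), reverse=True)

for request in ranked:
    print(f"{request.score():>2}  {request.name}")
```

A score like this is a conversation starter rather than a verdict. Its main value is making the trade-offs visible to the stakeholders whose requests land lower on the list.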

23. Describe your approach to research synthesis.

Your answer might sound like: My synthesis process has four phases. First, I immerse myself in the data by reviewing all notes, recordings, and artifacts without trying to draw conclusions. Second, I code the data by tagging observations with descriptive labels. Third, I identify patterns by grouping related codes and looking for themes that appear across multiple participants or data sources. Fourth, I develop insights by asking what these patterns mean for the product and users. I distinguish between observations, which are factual, and insights, which interpret meaning. Throughout, I look for disconfirming evidence that challenges emerging patterns. The strongest insights hold up even when I actively try to disprove them. It's essential to maintain a clear understanding of the data and research context during synthesis to ensure findings are accurate and actionable.

Why They Ask This: Synthesis quality separates valuable research from data collection. Interviewers assess whether you have a rigorous approach.
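The coding and pattern-finding phases described above can be sketched in a few lines. This example assumes observations have already been tagged by hand as (participant, code) pairs; the codes, participant IDs, and the two-participant threshold are invented for illustration.

```python
from collections import defaultdict

# Hypothetical coded observations: (participant ID, descriptive code)
coded_observations = [
    ("P1", "unclear pricing"),
    ("P1", "wants team sharing"),
    ("P2", "unclear pricing"),
    ("P3", "unclear pricing"),
    ("P3", "slow export"),
    ("P4", "wants team sharing"),
    ("P4", "wants team sharing"),  # repeated mention by the same person
]


def participants_by_code(observations):
    """Map each code to the set of distinct participants who raised it."""
    by_code = defaultdict(set)
    for participant, code in observations:
        by_code[code].add(participant)
    return dict(by_code)


def recurring_codes(observations, min_participants=2):
    """Keep codes raised by at least min_participants people, most common first."""
    counts = {
        code: len(people)
        for code, people in participants_by_code(observations).items()
    }
    recurring = {code: n for code, n in counts.items() if n >= min_participants}
    return dict(sorted(recurring.items(), key=lambda item: item[1], reverse=True))


patterns = recurring_codes(coded_observations)
print(patterns)
```

Counting distinct participants rather than raw mentions keeps one talkative person from inflating a theme. Codes that fall below the threshold are still worth a look, since a single observation can be the disconfirming evidence mentioned above.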

24. What is the most strategic outcome of applying rigorous research methods?

Here’s how to approach it: The most strategic outcome is generating actionable insights that reduce risk, validate decisions, and guide impactful product strategy. Rigorous research not only informs decisions but also helps solve problems by uncovering root causes and demonstrating your problem-solving abilities in real-world scenarios. Research that produces detailed reports but lacks strategic clarity fails this test. I have seen research teams gather large volumes of data without connecting findings to decisions the business actually needs to make. The best research does three things: it reduces uncertainty about user needs and behaviors, it validates or challenges assumptions before expensive development work, and it provides a foundation for prioritization. When I evaluate my own research, I ask whether it changed anything. If a study confirms what everyone already believed and leads to no new actions, its strategic value is questionable.

Why They Ask This: This question from Uxcel’s research methods assessment tests whether you understand research as a strategic function. Interviewers want researchers who connect methodology to business outcomes.

25. How do you measure the impact of UX research?

Here’s how to approach it: I track impact at multiple levels. At the project level, I document which decisions research informed and what happened as a result. Did the team ship something different because of the research? Did metrics improve? For existing products, I measure how usability testing and research have led to enhancements that increase user engagement or retention, demonstrating ongoing product optimization. At the program level, I track research utilization, meaning how often findings get referenced in design reviews, roadmap discussions, and strategy documents. I also gather feedback from stakeholders on research quality and usefulness. Quantifying research ROI precisely is difficult because research is one input among many. But tracking decisions influenced and outcomes achieved builds a compelling case over time.

Why They Ask This: Research teams face pressure to demonstrate value. Interviewers want researchers who think about impact, not just activity.

26. How do you handle research when stakeholders want answers faster than good research allows?

A solid response: I start by understanding what is driving the timeline. Sometimes urgency is real, and sometimes it reflects discomfort with uncertainty. If the timeline is fixed, I adjust scope and methodology to deliver useful insights faster. A five-participant usability test with rapid synthesis can happen in a week. I might use unmoderated testing instead of moderated sessions. I am transparent about trade-offs. Faster research often means fewer participants, less depth, or lower confidence. I present options and let stakeholders decide. Sometimes the right answer is to proceed without research and plan a study to validate post-launch.

Why They Ask This: Real-world research involves trade-offs. Interviewers want pragmatic researchers who can adapt without abandoning rigor.

27. What is triangulation in research and why does it matter?

How to answer: Triangulation means using multiple data sources or methods to study the same question. If interview findings align with survey data and behavioral analytics, I have more confidence in the conclusions than if I relied on one source alone. Triangulation reduces the risk that findings reflect methodological artifacts rather than genuine patterns. For example, users might say they want a feature in interviews, but analytics might show they rarely use similar existing features. The contradiction prompts deeper investigation. I aim to triangulate whenever the stakes are high enough to justify the additional effort.

Why They Ask This: This tests methodological sophistication. Interviewers want researchers who understand validity threats and how to address them.

28. What can go wrong when research is biased, and how do you prevent it?

Strong answer: When research is biased, teams build features users do not need or value. I have seen entire roadmaps built on research that confirmed what stakeholders already believed rather than challenging assumptions. Bias can enter at every stage: leading questions in interviews, unrepresentative participant samples, selective attention during synthesis, or presenting only findings that support a preferred direction. I prevent bias by writing neutral discussion guides and having colleagues review them. I recruit participants who represent the actual user base, not just the easiest to reach. During synthesis, I actively look for disconfirming evidence. And I present findings before recommendations so stakeholders can draw their own conclusions from the data.

Why They Ask This: This question from Uxcel's user research assessment tests whether you understand that research quality depends on rigor, not just activity. Biased research is worse than no research because it creates false confidence.

29. How do you present research findings to different audiences?

Strong answer: I tailor format, depth, and framing to the audience. For executives, I lead with implications and recommendations, keep it brief, and connect findings to business outcomes. For product managers and designers, I provide more detail on user needs and behaviors with clear design implications. For engineers, I focus on specific issues and include technical context when relevant. I make sure to have a clear understanding of the needs and priorities of different stakeholders, so I can communicate findings in a way that resonates with each group. I use video clips and direct quotes liberally because hearing users directly is more persuasive than my summary. I always provide access to detailed findings for those who want to go deeper while ensuring the core story is accessible to those who only have five minutes.

Why They Ask This: Research impact depends on communication. Interviewers want researchers who can translate findings for diverse stakeholders by demonstrating a clear understanding of their unique needs and expectations.

30. What does a high churn rate indicate about your product, and how would you investigate it?

Example response: A high churn rate indicates users are discontinuing use, possibly due to dissatisfaction or unmet needs. But churn is a symptom, not a diagnosis. I would investigate by first segmenting the data to understand which users are churning, when in their lifecycle, and whether there are patterns by acquisition channel, feature usage, or user type. Then I would combine quantitative analysis with qualitative research. Analytics might show that users who do not complete onboarding churn at three times the rate of users who do. Interviews with churned users would then reveal why onboarding failed for them. The goal is to move from "users are leaving" to "users are leaving because X, which we can address by Y."

Why They Ask This: This question from Uxcel's product usage analytics assessment tests whether you can connect metrics to research opportunities. Interviewers want researchers who can translate business problems into research questions.
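
If an interviewer asks you to make the segmentation step concrete, a minimal sketch might look like the following. The user records and the onboarding flag are hypothetical, invented purely for illustration; real data would come from your analytics tool.

```python
from collections import defaultdict

# Hypothetical user records: (completed_onboarding, churned)
users = (
    [(True, False)] * 6 + [(True, True)] * 2      # 8 users finished onboarding
    + [(False, True)] * 3 + [(False, False)] * 1  # 4 users did not
)

def churn_rate_by_segment(records):
    """Return {completed_onboarding: churn rate} for each segment."""
    totals = defaultdict(int)
    churned = defaultdict(int)
    for completed_onboarding, did_churn in records:
        totals[completed_onboarding] += 1
        churned[completed_onboarding] += int(did_churn)
    return {segment: churned[segment] / totals[segment] for segment in totals}

rates = churn_rate_by_segment(users)
ratio = rates[False] / rates[True]
print(f"completed onboarding: {rates[True]:.0%} churn")
print(f"skipped onboarding:   {rates[False]:.0%} churn")
print(f"relative churn:       {ratio:.1f}x")
```

The ratio tells you where to recruit interview participants. It does not tell you why onboarding failed, which is the part only qualitative research can answer.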

31. Describe a time when research findings surprised you. How did you handle it?

What a good response sounds like: I was researching why users abandoned a checkout flow and expected to find usability issues. Instead, the research revealed that users were intentionally adding items to cart to save them for later because we did not have a wishlist feature. The "abandonment problem" was actually users adapting the product to unmet needs. I had to revise my initial framing and present findings that challenged the team's assumptions. I led with the evidence, showed video clips of users explaining their behavior, and reframed the opportunity from "fix checkout abandonment" to "support save-for-later behavior." The team built a wishlist feature, and cart abandonment improved as a secondary effect.

Why They Ask This: This tests intellectual honesty and adaptability. Interviewers want researchers who follow evidence even when it challenges assumptions.

32. How do you balance depth and breadth in a research program?

Example response: It depends on organizational maturity and product stage. Early-stage products often need breadth to identify opportunities and understand the landscape. Mature products benefit from depth on specific problems. I think of research as a portfolio. Some studies go deep on narrow questions with methods like ethnography or longitudinal research. Others go broad with surveys or analytics analysis. The mix should align with strategic priorities. I also build depth iteratively. A broad discovery study might identify three opportunity areas. Subsequent studies go deeper on each one. Over time, the organization develops rich understanding without any single study trying to cover everything.

Why They Ask This: Research strategy involves trade-offs. Interviewers assess whether you can think at the program level, not just the project level.

33. What is a research repository and how do you use one?

Your answer might sound like: A research repository is a centralized system for storing and retrieving research findings, making institutional knowledge accessible over time. I use repositories to document study details, key findings, participant information, and supporting artifacts. Good repositories enable searching across studies to answer questions like "What do we know about onboarding?" without re-reading every study. I tag findings consistently so they are discoverable and include enough context that someone unfamiliar with the original study can understand and use the insights. Repositories also prevent redundant research by making past work visible.

Why They Ask This: Research operations matter at scale. Interviewers want researchers who think about knowledge management, not just individual studies.

34. How do you involve stakeholders in the research process?

Here’s how to approach it: I involve stakeholders at multiple points. During planning, I collaborate on research questions with designers, product managers, engineers, and other stakeholders to ensure findings will address their real decisions. During research, I invite them to observe sessions when possible because firsthand exposure to users is more powerful than any report. During synthesis, I sometimes run collaborative sessions where stakeholders help identify patterns, which builds ownership of findings. During readout, I tailor presentations to their context and leave time for discussion. Involvement builds trust and increases the likelihood that findings lead to action. It also educates stakeholders about research, making future collaboration smoother. Cross-functional collaboration like this is also worth showcasing in your portfolio, because it demonstrates your teamwork skills to potential employers.

Why They Ask This: Stakeholder engagement drives research impact. Interviewers want researchers who build relationships, not just deliver reports.

35. What is the primary role of a workshop facilitator in UX research?

Strong answer: The primary role is to schedule and lead activities that help the group do their best thinking together. A facilitator is not there to present their own ideas or evaluate participants' suggestions against organizational goals. They create the conditions for productive collaboration by setting clear objectives, managing time, ensuring everyone contributes, and guiding the group toward actionable outcomes. Good facilitation requires preparation, including defining the workshop goal, selecting appropriate activities, and anticipating potential conflicts. During the session, I focus on process rather than content, asking questions that move discussion forward without steering toward my preferred conclusions. The best workshops feel like the group reached insights themselves, even though the facilitator structured the path.

Why They Ask This: This question from Uxcel's workshop facilitation assessment tests whether you can lead collaborative research sessions effectively. Interviewers want researchers who can facilitate group activities, not just conduct individual interviews.

Technical UX researcher interview questions

The days when UX researchers could ignore data are over. Modern research roles expect you to interpret analytics, design valid experiments, and understand the statistics behind your findings. In interviews, be ready to demonstrate your technical knowledge, especially as it relates to user research methods. These questions test whether you can partner effectively with data scientists and product managers who speak in metrics, or whether you will be limited to qualitative-only work. You do not need to be a statistician, but you do need to know what statistical significance means and when it matters, how to design an A/B test that produces trustworthy results, and how to spot flawed data before it leads to bad decisions. If analytics fluency is a gap for you, address it before your interview.

36. What does funnel analysis help you identify, and how do you use it in research?

A solid response: Funnel analysis helps identify where users drop off across a flow, which is essential for prioritizing research focus. If I see that 60% of users abandon checkout at the payment step, I know that is where qualitative research should dig deeper. But funnel analysis is just the starting point. It tells me what is happening but not why. I use funnels to generate hypotheses, then validate them through interviews or usability testing. I also look beyond simple drop-off rates. Are certain user segments completing at different rates? Do users who drop off ever return? Combining funnel data with session recordings or user interviews transforms metrics into actionable understanding.

Why They Ask This: This question reflects Uxcel’s analytics tools assessment focus on practical application. Interviewers want researchers who can work across qualitative and quantitative domains, using analytics to focus research rather than treating them as separate disciplines.
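
To show what the drop-off calculation in this answer involves, here is a minimal sketch. The step names and user counts are hypothetical, chosen so that the payment step loses 60% of users as in the example.

```python
# Hypothetical counts of users reaching each checkout step, in order.
funnel = [
    ("cart", 1000),
    ("shipping", 800),
    ("payment", 600),
    ("confirmation", 240),
]

def step_drop_off(steps):
    """Return (from_step, to_step, drop_off_rate) for each adjacent pair of steps."""
    results = []
    for (name, count), (next_name, next_count) in zip(steps, steps[1:]):
        results.append((name, next_name, 1 - next_count / count))
    return results

drops = step_drop_off(funnel)
for src, dst, rate in drops:
    print(f"{src} -> {dst}: {rate:.0%} drop-off")

# The step with the largest drop-off is the first candidate for qualitative follow-up.
worst = max(drops, key=lambda d: d[2])
print(f"largest drop-off: {worst[0]} -> {worst[1]}")
```

Running the same function per user segment is one way to check whether certain groups complete the flow at different rates.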

37. What makes a good A/B test?

How to answer: A good A/B test has a clear hypothesis, adequate sample size, controlled variables, and meaningful success metrics. The hypothesis should specify what change you expect and why. Sample size must be large enough to detect the effect size you care about with statistical confidence. Controlled variables mean the only difference between variants is the thing you are testing. Success metrics should align with business goals, not just be easy to measure. I also consider test duration to account for day-of-week effects and novelty bias. Finally, a good test has a plan for what happens regardless of outcome. If the test wins, what ships? If it loses, what do you learn?

Why They Ask This: Experimentation is increasingly important in UX. Interviewers assess whether you understand experimental design principles.
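
"Adequate sample size" is easier to discuss with a number in hand. The sketch below uses a common textbook approximation for comparing two conversion rates. The 10% baseline, the 12% target, and the default significance and power levels are assumptions for illustration, not recommendations.

```python
import math
from statistics import NormalDist

def sample_size_per_variant(p_control, p_variant, alpha=0.05, power=0.80):
    """Approximate users needed per variant for a two-sided two-proportion test."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for significance
    z_beta = NormalDist().inv_cdf(power)           # critical value for power
    p_bar = (p_control + p_variant) / 2
    numerator = (
        z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * math.sqrt(
            p_control * (1 - p_control) + p_variant * (1 - p_variant)
        )
    ) ** 2
    return math.ceil(numerator / (p_variant - p_control) ** 2)

# Hypothetical scenario: 10% baseline conversion, hoping to detect a lift to 12%.
n_two_points = sample_size_per_variant(0.10, 0.12)
# Detecting a smaller lift (10% to 11%) needs far more users.
n_one_point = sample_size_per_variant(0.10, 0.11)
print(n_two_points, n_one_point)
```

The useful interview point is the shape of the relationship: halving the effect you want to detect roughly quadruples the sample you need.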

38. Why is randomization critical in A/B testing?

Strong answer: Randomization ensures that both test groups are statistically comparable at the start, so any difference in outcomes can be attributed to the treatment rather than pre-existing differences between groups. Without randomization, you might accidentally assign more engaged users to one variant, contaminating results. For example, if users who visit on weekdays differ systematically from weekend users, and your test runs asymmetrically across those periods, results become unreliable. Proper randomization distributes both known and unknown confounding variables evenly across groups on average, which is what makes the causal inference behind an experiment valid.

Why They Ask This: This question appears in Uxcel's A/B testing assessment because it tests understanding of experimental validity. Interviewers want researchers who understand why methods work, not just how to execute them.
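
One common way to implement assignment in practice is to hash a user ID, which gives a pseudo-random but repeatable split. This is a sketch of that general idea, not any particular experimentation platform's method. The function name, the experiment name, and the user ID format are hypothetical.

```python
import hashlib

def assign_variant(user_id: str, experiment: str,
                   variants=("control", "treatment")) -> str:
    """Deterministically map a user to a variant using a hash of experiment + user."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    return variants[int(digest, 16) % len(variants)]

# The same user always lands in the same variant for a given experiment.
first = assign_variant("user-1", "checkout-test")
second = assign_variant("user-1", "checkout-test")

# Across many users, the split should be close to even.
assignments = [assign_variant(f"user-{i}", "checkout-test") for i in range(10_000)]
share = assignments.count("treatment") / len(assignments)
print(f"treatment share: {share:.1%}")
```

Because assignment depends only on the hash, it cannot be influenced by how engaged a user is or when they visit, which is the property the answer describes.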

39. Why is it important to include a control group in experiments?

Example response: A control group isolates the effect of the tested change. Without a control, you cannot know whether observed outcomes result from your treatment or from external factors like seasonality, marketing campaigns, or general user behavior trends. The control group experiences everything the test group experiences except for the specific change you are testing. This creates the baseline comparison that makes causal inference possible. For example, if conversion increases 10% for users who see a new checkout flow, but conversion also increased 8% for the control group due to a holiday shopping surge, the actual treatment effect is only about 2 percentage points of lift. Without the control, you would have overestimated the impact dramatically.

Why They Ask This: This question from Uxcel's experimentation design assessment tests fundamental understanding of experimental methodology. Interviewers want researchers who can design valid experiments.
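
The holiday-surge arithmetic in this answer can be checked in a few lines. The conversion rates below are hypothetical values chosen to reproduce the 10% and 8% lifts from the example.

```python
def lifts(baseline_rate, control_rate, treatment_rate):
    """Relative lift of each group over the pre-test baseline."""
    control_lift = control_rate / baseline_rate - 1
    treatment_lift = treatment_rate / baseline_rate - 1
    return control_lift, treatment_lift

# Hypothetical rates: 5.0% before the test, 5.4% control, 5.5% treatment.
control_lift, treatment_lift = lifts(0.050, control_rate=0.054, treatment_rate=0.055)

naive_effect = treatment_lift                      # what you would report without a control
adjusted_effect = treatment_lift - control_lift    # lift beyond what the control also saw
relative_to_control = 0.055 / 0.054 - 1            # treatment compared directly with control

print(f"naive: {naive_effect:.1%}")
print(f"adjusted: {adjusted_effect:.1%}")
print(f"relative to control: {relative_to_control:.2%}")
```

Whether you quote the difference in lifts or the direct comparison with control, the conclusion is the same: most of the apparent improvement came from the season, not the new flow.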

40. How do you determine sample size for a survey?

What a good response sounds like: Sample size depends on the analysis I plan to conduct and the precision I need. For population estimates like "what percentage of users want feature X," I use standard sample size calculators based on confidence level, margin of error, and population size. For surveys that will be segmented, I need sufficient sample within each segment. If I plan to compare three user types, I might need 100+ respondents per segment for reliable comparisons. I also consider response rate when determining how many invitations to send. A 10% response rate means I need to invite ten times my target sample. Underpowered surveys waste resources by producing unreliable data.

Why They Ask This: Quantitative literacy matters. Interviewers want researchers who can design methodologically sound surveys.
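
The "standard sample size calculator" mentioned in this answer typically applies a formula like the one sketched below (Cochran's formula with a finite population correction). The population of 10,000 users, the 5% margin of error, and the 10% response rate are hypothetical inputs.

```python
import math
from statistics import NormalDist

def survey_sample_size(population, margin_of_error=0.05, confidence=0.95, p=0.5):
    """Respondents needed to estimate a proportion within the given margin of error.

    p=0.5 is the most conservative assumption about the true proportion.
    """
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    n_infinite = z ** 2 * p * (1 - p) / margin_of_error ** 2
    # Finite population correction: smaller populations need fewer respondents.
    n = n_infinite / (1 + (n_infinite - 1) / population)
    return math.ceil(n)

def invitations_needed(target_responses, response_rate):
    """How many invitations to send given an expected response rate."""
    return math.ceil(target_responses / response_rate)

needed = survey_sample_size(population=10_000)
invites = invitations_needed(needed, response_rate=0.10)
print(needed, invites)
```

If you plan to compare segments, run the calculation per segment rather than once for the whole survey, since each comparison group needs its own adequate sample.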

41. What is statistical significance and what are its limitations?

Example response: Statistical significance indicates that an observed result would be unlikely if there were no real effect. The conventional threshold is p < 0.05, meaning a result at least this extreme would occur less than 5% of the time by chance alone. However, significance has limitations. It does not indicate practical importance. A result can be statistically significant but too small to matter for business decisions. It is also sensitive to sample size. With enough data, trivially small differences become significant. I always consider effect size alongside significance and think about whether the magnitude of difference justifies action. Statistical significance also does not prove causation outside experimental designs with proper controls.

Why They Ask This: This tests quantitative reasoning. Interviewers want researchers who understand statistics beyond buzzwords.
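
The sensitivity to sample size is easy to demonstrate. The sketch below runs a standard two-proportion z-test on the same 0.2-point difference at two sample sizes. All conversion counts are hypothetical.

```python
import math
from statistics import NormalDist

def two_proportion_p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value for the difference between two conversion rates."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    standard_error = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / standard_error
    return 2 * (1 - NormalDist().cdf(abs(z)))

# Same effect size in both cases: 10.0% vs 10.2% conversion.
p_small_sample = two_proportion_p_value(500, 5_000, 510, 5_000)
p_large_sample = two_proportion_p_value(200_000, 2_000_000, 204_000, 2_000_000)

print(f"5,000 users per group:     p = {p_small_sample:.3f}")
print(f"2,000,000 users per group: p = {p_large_sample:.2e}")
```

The difference is identical in both runs. Only the amount of data changed, which is why a small p-value alone says nothing about whether a 0.2-point lift is worth acting on.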

42. What issues can result from misinterpreting statistics in research?

Your answer might sound like: Misinterpreting statistics leads to drawing incorrect conclusions, which causes poor decisions and wasted resources. I have seen teams invest heavily in features because a survey showed "80% of users want this," when the sample was self-selected power users who do not represent the broader base. Common misinterpretation errors include confusing statistical significance with practical significance, cherry-picking favorable numbers while ignoring contradictory data, and overgeneralizing from small or biased samples. I prevent these issues by always examining sample composition, looking at effect sizes alongside p-values, and presenting confidence intervals that communicate uncertainty. When presenting to stakeholders, I try to make limitations as clear as the findings themselves.

Why They Ask This: This question from Uxcel's statistics assessment tests whether you can critically evaluate quantitative findings. Interviewers want researchers who recognize the limitations of data, not just present numbers uncritically.
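
A confidence interval makes the uncertainty behind a headline number such as "80% of users want this" visible. The sketch below uses the simple normal-approximation interval. The response counts are hypothetical.

```python
import math
from statistics import NormalDist

def proportion_confidence_interval(successes, n, confidence=0.95):
    """Normal-approximation (Wald) confidence interval for a proportion."""
    p = successes / n
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    margin = z * math.sqrt(p * (1 - p) / n)
    return max(0.0, p - margin), min(1.0, p + margin)

small = proportion_confidence_interval(40, 50)        # 80% from 50 responses
large = proportion_confidence_interval(4000, 5000)    # 80% from 5,000 responses

print(f"n=50:   {small[0]:.1%} to {small[1]:.1%}")
print(f"n=5000: {large[0]:.1%} to {large[1]:.1%}")
```

Note what the interval does not fix: it only describes sampling noise. If the respondents were self-selected power users, a narrow interval around a biased estimate is still biased.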

43. How would you design a study to understand why users are churning?

Your answer might sound like: I would use a mixed-methods approach. First, I would analyze behavioral data to identify churn patterns. When do users churn? What actions do they take, or not take, before churning? Are there user segments with higher churn rates? This generates hypotheses. Then I would conduct interviews with recently churned users to understand their experience and decision to leave. I would also interview users who considered leaving but stayed to understand what retained them. I might add a survey to churned users for broader quantitative data on reasons. The combination provides both pattern identification and deep understanding of underlying motivations.

Why They Ask This: This tests your ability to design research for complex business problems. Interviewers assess methodological range and strategic thinking.

44. What is the difference between correlation and causation?

Here's how to approach it: Correlation means two variables move together, either in the same direction or opposite directions. Causation means one variable actually causes changes in another. Correlation does not imply causation because a third variable might cause both, or the relationship might be coincidental. For example, ice cream sales and drowning deaths correlate because both increase in summer, not because one causes the other. Establishing causation requires controlled experiments where you manipulate one variable and measure the effect while holding others constant. Observational research can identify correlations that suggest hypotheses, but experiments are needed to confirm causal relationships.

Why They Ask This: Misinterpreting correlation as causation leads to bad decisions. Interviewers want researchers who understand this fundamental distinction.
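
The ice cream example can be simulated to show how a third variable produces a correlation on its own. In this sketch, temperature drives both series and neither affects the other. Every coefficient and noise level is made up for illustration.

```python
import random

def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5

rng = random.Random(42)  # fixed seed so the simulation is repeatable
temperature = [rng.uniform(5, 35) for _ in range(5000)]
# Both outcomes depend on temperature plus independent noise, not on each other.
ice_cream = [20 + 3 * t + rng.gauss(0, 10) for t in temperature]
drownings = [1 + 0.2 * t + rng.gauss(0, 1.5) for t in temperature]

overall = pearson(ice_cream, drownings)

# Hold the confounder roughly constant: look only at days between 24 and 26 degrees.
band = [(i, d) for t, i, d in zip(temperature, ice_cream, drownings) if 24 <= t <= 26]
within_band = pearson([i for i, _ in band], [d for _, d in band])

print(f"all days: r = {overall:.2f}")
print(f"similar-temperature days: r = {within_band:.2f}")
```

Once temperature is held roughly constant, the relationship largely disappears, which is the signature of a confounder rather than a causal link.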

45. How do you handle missing or incomplete data in research?

A solid response: My approach depends on why data is missing and how much. If missingness is random and limited, I might proceed with analysis noting the limitation. If certain questions have high non-response, I investigate whether the question was confusing or sensitive. For survey data, I examine whether respondents who skipped questions differ systematically from those who answered, which might indicate bias. I avoid imputing missing values without clear justification. In qualitative research, I note when participants could not answer questions and consider whether that itself is a finding. Transparency about data limitations is essential when presenting findings.

Why They Ask This: Real-world data is messy. Interviewers want researchers who can handle imperfection thoughtfully.
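
Checking whether skipped answers are spread evenly is a quick first step before deciding how to treat them. The sketch below compares skip rates across segments. The segments, the question, and the responses are hypothetical.

```python
# Hypothetical survey rows: (user_segment, rating for one question, or None if skipped)
responses = [
    ("new", 4), ("new", None), ("new", None), ("new", 3), ("new", None),
    ("power", 5), ("power", 4), ("power", 5), ("power", None), ("power", 4),
]

def skip_rate_by_segment(rows):
    """Return {segment: share of respondents who skipped the question}."""
    counts = {}
    for segment, answer in rows:
        total, skipped = counts.get(segment, (0, 0))
        counts[segment] = (total + 1, skipped + (answer is None))
    return {segment: skipped / total for segment, (total, skipped) in counts.items()}

skip_rates = skip_rate_by_segment(responses)
for segment, rate in skip_rates.items():
    print(f"{segment}: {rate:.0%} skipped")
```

A large gap between segments suggests the missing answers are not random, so an average computed from the remaining responses would over-represent one group.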

46. What is the most ethical approach when implementing user behavior tracking that collects sensitive data?

How to answer: The most ethical approach is implementing tracking with clear opt-in consent and transparent explanation of data usage. Users should understand exactly what data you collect, why you collect it, and how it will be used before they agree. This goes beyond simply offering an opt-out buried in settings. I advocate for consent flows that are genuinely informative, not designed to maximize opt-ins through dark patterns. Beyond consent, I follow principles of data minimization, collecting only what is necessary, storing it securely, anonymizing when sharing, and deleting when no longer needed. Ethics is not just compliance with regulations like GDPR. It is ongoing consideration of how research affects the people who participate in it.

Why They Ask This: This question from Uxcel's ethics assessment reflects growing industry focus on privacy and user autonomy. Interviewers want researchers who see ethics as foundational to practice, not an afterthought or compliance checkbox.

47. How should you approach a feature that is usable but not accessible?

Example response: The right approach is to delay or revise features that conflict with accessibility best practices. Accessibility is not a nice-to-have that you address based on user complaints or high demand. It is a fundamental requirement for inclusive design. I advocate for building accessibility into research from the start, including participants who use assistive technologies, testing with screen readers, and evaluating against WCAG guidelines. When trade-offs arise, I help teams understand that accessibility issues often indicate broader usability problems that affect everyone. A button that is hard for a screen reader user to find is probably hard for sighted users to find too. Launching inaccessible features creates technical and design debt that becomes harder to fix later.

Why They Ask This: This question from Uxcel's accessibility assessment tests whether you understand accessibility as a core requirement rather than an optional enhancement. Interviewers want researchers who advocate for inclusive design.

48. How do you approach research for AI or ML-powered features?

Strong answer: AI features require research attention to transparency, user control, and appropriate trust calibration. Users should understand when they are interacting with AI-generated content or recommendations and have some sense of why the system behaves as it does. I research how much explanation users actually want and what formats work. For recommendation systems, I explore whether users understand why items are suggested and whether they can provide feedback to improve relevance. I also test edge cases where AI might fail and ensure the experience degrades gracefully. When predictions are uncertain, the UX should allow users to verify or override rather than forcing them to accept all system outputs without question.

Why They Ask This: AI products are increasingly common. Interviewers want researchers who understand the unique UX challenges they present.

Senior and leadership UX researcher interview questions

Senior and lead researcher interviews focus less on what you can do and more on what you can build. Can you create a research practice where none exists? Can you align research priorities with business strategy in a way that earns executive buy-in? Can you develop junior UX researchers into independent contributors? These questions assess whether you think at the organizational level, not just the project level. Your answers should demonstrate strategic thinking, comfort with ambiguity, and a track record of influence beyond your immediate team. If you have led research initiatives that changed how your company makes decisions, these are the stories to tell.

49. How do you build a research practice or team from scratch?

What a good response sounds like: I start by understanding organizational context. What decisions need research input? Where are the biggest knowledge gaps? Who are the key stakeholders? Then I prioritize high-impact, high-visibility projects that demonstrate research value quickly. Early wins build credibility and demand for more research. Simultaneously, I establish basic infrastructure: research templates, participant recruitment channels, a repository for findings. As demand grows, I make the case for additional headcount by documenting research requests I cannot fulfill and connecting research to business outcomes. Culture-building matters too. I advocate for research in forums where decisions happen and coach product teams on when and how to involve researchers.

Why They Ask This: Building research capability is a leadership skill. Interviewers assess whether you can think beyond individual projects to organizational change.

50. How do you align research priorities with business strategy?

Example response: Alignment starts with understanding strategy deeply. I attend planning meetings, read strategy documents, and build relationships with leadership to understand where the business is headed. Then I map research opportunities to strategic priorities. If expansion into a new market is a priority, research into that market's user needs moves up the backlog. I frame research proposals in strategic terms, connecting studies to business outcomes rather than just user understanding. I also proactively identify strategic questions that lack good answers and propose research to address them before someone asks. The goal is positioning research as a strategic function rather than a service function.

Why They Ask This: Senior researchers must operate strategically. Interviewers want researchers who can connect user insights to business outcomes.

51. How do you develop other researchers on your team?

Your answer might sound like: Development happens through projects, feedback, and mentorship. I assign projects that stretch capabilities while providing support. A researcher ready to grow might lead their first stakeholder presentation with me observing and providing feedback afterward. I give specific, actionable feedback frequently, not just in formal reviews. I create learning opportunities like study clubs where we discuss methodologies or review each other's work. I also help researchers identify their development goals and find projects aligned with those goals. For researchers interested in leadership, I gradually delegate leadership responsibilities while remaining available for guidance.

Why They Ask This: People leadership is distinct from individual contribution. Interviewers assess whether you can grow and retain talent.

52. How do you handle situations where product decisions contradict research findings?

Here's how to approach it: This happens regularly and is not necessarily a problem. Research provides input, but product decisions involve many factors: business constraints, technical feasibility, strategic priorities, stakeholder commitments. My job is ensuring research findings are understood, not that they determine every decision. When decisions contradict findings, I ensure the trade-off is explicit. I might say, "The research suggests users struggle with this approach, but I understand the business reasons for proceeding. Let us plan to monitor these metrics post-launch and revisit if we see the issues the research predicted." Documentation protects the research function's credibility. When predicted issues materialize, I can point to the finding without saying "I told you so."

Why They Ask This: Mature researchers understand organizational dynamics. Interviewers want researchers who can maintain influence without being adversarial.

53. How do you scale research impact across a large organization?

A solid response: Scaling requires moving beyond researcher-led studies to a research-informed culture. I focus on three approaches. First, democratization through enablement. I create templates, guides, and training so product teams can conduct basic research themselves, freeing researchers for complex work. Second, strategic placement of researcher time on the highest-impact questions rather than trying to research everything. Third, knowledge infrastructure through repositories and communication channels that make insights discoverable and reusable. I also identify research champions in each team who can advocate for research practices and escalate questions to researchers when needed.

Why They Ask This: Research demand always exceeds capacity. Interviewers want leaders who can multiply impact beyond their direct work.

54. Describe your philosophy on research operations.

How to answer: Research operations is the infrastructure that enables researchers to focus on research rather than logistics. Good ResearchOps covers participant recruitment, tool management, incentive processing, repository maintenance, and compliance. My philosophy is to invest in operations in proportion to team size and research volume. A solo researcher might handle operations themselves. A team of ten needs dedicated support. I prioritize automating repetitive tasks, standardizing processes that benefit from consistency, and maintaining flexibility where research needs vary. Operations should be invisible when working well. Researchers should not spend significant time on logistics.

Why They Ask This: Operations thinking distinguishes leaders from practitioners. Interviewers assess whether you can build sustainable research programs.

55. How do you measure and communicate research team performance?

Strong answer: I track metrics at multiple levels. Activity metrics include studies completed, participants recruited, and stakeholders served. These measure output but not value. Impact metrics track decisions informed, recommendations implemented, and outcomes improved. These are harder to measure but more meaningful. I also gather qualitative feedback from stakeholders on research quality and usefulness. For communication, I share regular updates with leadership connecting research work to strategic priorities and business outcomes. I maintain a portfolio of impact stories showing specific examples where research influenced important decisions. Narrative and examples are often more compelling than metrics alone.

Why They Ask This: Leaders must justify resources and demonstrate value. Interviewers want researchers who can articulate and prove impact.

56. Where do you see UX research evolving in the next few years?

What a good response sounds like: Several trends are shaping the field. AI is transforming research workflows through faster transcription, synthesis assistance, and pattern identification, though human judgment remains essential for interpretation. Research is becoming more integrated with product analytics, requiring researchers to be fluent with quantitative data. The rise of continuous discovery means research is shifting from project-based to ongoing, embedded in product teams. Research democratization continues with more non-researchers conducting basic studies, which elevates the specialized researcher role toward complex, strategic work. Finally, research ethics is gaining prominence as companies face privacy regulation and public scrutiny of data practices.

Why They Ask This: Senior researchers should have perspective on the field's direction. Interviewers assess strategic thinking and awareness of industry trends.

How to prepare for a UX researcher interview

Preparation separates candidates who get offers from those who receive generic rejection emails. Here is how to approach it strategically.

- Review your own work first. Before memorizing frameworks, revisit two or three research projects you have led. For each one, be ready to explain the business context, your methodology choices, key findings, and what happened as a result. Interviewers care less about perfect process and more about your reasoning and impact, so expect follow-up questions about why you chose one method over another and how you handled problems along the way.

- Practice thinking out loud. UX research interviews often include live exercises where you design a study on the spot or critique a research plan. The interviewer wants to hear your thought process, not just your conclusion. Practice verbalizing your reasoning, even when you are uncertain. Saying “I am weighing two approaches here” is better than sitting in silence.

- Research the company’s product. Use it if possible. Identify one or two areas where research could improve the experience and be ready to discuss them. This shows initiative and gives you concrete examples to reference during the conversation.

- Prepare questions that reveal research culture. Ask how research findings typically get used in product decisions. Ask about the ratio of generative to evaluative research. Ask what the biggest research challenge is right now. These questions demonstrate strategic thinking and help you assess whether the role is right for you.

- Know your gaps honestly. If you have never run a diary study or worked with analytics tools, do not pretend otherwise. Interviewers respect self-awareness. Explain what you do know about the method and how you would approach learning it.

When preparing, pay special attention to the first interview, as it often sets the tone for the rest of the process. Later stages may involve several interviewers, some of whom will ask you to develop a research plan or solve a hypothetical problem, so be ready for a variety of scenarios.

How to answer UX researcher interview questions

Strong answers share a few characteristics regardless of the specific question. What sets candidates apart is a clear, repeatable way of working through a question, backed by technical knowledge they can apply on the spot.

- Lead with the answer, then explain. Do not build up to your point with lengthy context. State your position or approach clearly, then provide the reasoning behind it. Interviewers often have limited time and appreciate directness.

- Use specific examples. Generic answers like “I would conduct user interviews” are weak. Specific answers like “I would conduct 8–10 interviews with users who churned in the last 30 days, focusing on their experience in the first week” demonstrate real competence.

- Acknowledge trade-offs. Every methodological choice involves trade-offs. Showing that you understand them, such as “Surveys give us scale but miss the nuance we would get from interviews,” signals mature thinking.

- Connect to outcomes. Whenever possible, tie your answer back to business or user impact. Interviewers want researchers who think beyond methodology to results.

- Structure behavioral answers with STAR. For questions about past experiences, use the Situation, Task, Action, Result framework. Describe the context briefly, explain what you were responsible for, walk through what you actually did, and share the outcome. Keep it concise. Two minutes is usually enough.

- Ask clarifying questions when appropriate. If a question is ambiguous, ask for clarification. This is not a sign of weakness. It shows you think carefully before acting, which is exactly what good researchers do.

Why upskilling matters for UX Researchers

You can prepare thoroughly for interviews and still hit a ceiling. The researchers who keep advancing are not just good at interviewing. They are good at growing.

Here is an uncomfortable truth: most UX researchers plateau. They master usability testing and interviews, then stop growing. Meanwhile, the field keeps evolving. Hiring managers now expect researchers to interpret analytics, design experiments, and connect findings to business metrics. The researchers who get promoted, and who land senior roles at competitive companies, are the ones who keep expanding their skills. Professional development is a key factor in career growth for UX researchers, as it demonstrates dedication to ongoing learning and signals to employers a commitment to staying current in the field.

The data backs this up. According to Uxcel’s Impact Report, professionals who actively upskill see a 68.5% higher promotion rate than their peers. The average salary increase among learners is $8,143. These are not abstract numbers. They reflect the career impact of closing skill gaps before they become career blockers.

What makes the difference? Three things. First, methodological range. The more approaches you can draw from, the better you can match methods to research questions. Interviewers notice when candidates can only talk about one or two methods. Second, data fluency. Product managers and engineers respect researchers who can speak their language, whether that means understanding statistical significance, interpreting funnel analytics, or knowing how A/B testing infrastructure works. Third, strategic thinking. Senior roles require connecting research to business outcomes, not just delivering insights.
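To make "understanding statistical significance" concrete, here is a minimal sketch of the kind of check that sits behind an A/B test readout: a two-proportion z-test written with only the Python standard library. The function name and the conversion numbers are hypothetical and chosen purely for illustration. Real experimentation platforms often use other methods, such as sequential or Bayesian tests.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference between two conversion rates.

    Uses the pooled-proportion z-test, which assumes independent samples
    that are large enough for the normal approximation to hold.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both variants convert equally
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Standard normal CDF expressed through the error function
    cdf = 0.5 * (1 + math.erf(abs(z) / math.sqrt(2)))
    p_value = 2 * (1 - cdf)
    return z, p_value


# Hypothetical data: variant A converts 200 of 1,000 users, variant B 230 of 1,000
z, p = two_proportion_z_test(conv_a=200, n_a=1000, conv_b=230, n_b=1000)
print(f"z = {z:.2f}, p = {p:.3f}")
```

With these made-up numbers, a three-point lift looks promising but does not clear the conventional 0.05 threshold. That is the kind of nuance a data-fluent researcher can raise before a team ships on a result that may be noise.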

Uxcel offers a practical way to build these skills. The platform’s interactive, gamified approach achieves completion rates of 48–50%, compared to the industry standard of 5–15% for online courses. That matters because unfinished courses do not build skills. Uxcel’s bite-sized lessons fit into busy schedules, and the skill mapping feature shows exactly where your gaps are, across both research methods and the product management knowledge that makes researchers more effective partners.

Long story short

Preparing for UX researcher interviews requires more than memorizing definitions. Interviewers want to see how you think through problems, make methodological decisions, navigate stakeholder dynamics, and drive outcomes. The questions in this guide cover the range you will encounter, from foundational concepts for entry-level roles to strategic leadership questions for senior positions.

Focus your preparation on areas where you feel least confident. Practice articulating your thinking out loud because clear communication matters as much as correct answers. And remember that interviews are conversations. The best interviews feel like discussing research with a thoughtful colleague rather than reciting answers to a test.

Commonly asked questions about UX Researcher interviews

How long should I prepare for a UX researcher interview?

Most candidates benefit from one to two weeks of focused preparation, though this varies based on experience level and familiarity with the company's domain. Beginners should allocate more time to solidifying foundational concepts. Experienced researchers should focus on preparing specific stories and examples that demonstrate impact.

What portfolio materials should I bring to a UX research interview?

Prepare two to three case studies that showcase different research methods, demonstrate end-to-end process from planning through impact, and highlight your specific contributions. When presenting your portfolio, include examples of your past projects and use a structured format to make them more accessible and engaging. Include artifacts like research plans, discussion guides, synthesis frameworks, and deliverables. Be ready to discuss methodology decisions and what you would do differently in hindsight.

How do I answer questions about methods I have not used?

Be honest about your experience while demonstrating knowledge of the method. Explain your understanding of when and why to use it, how you would approach learning it, and any adjacent experience that would transfer. Interviewers respect self-awareness over false confidence.

What questions should I ask the interviewer?

Ask about the research team's structure and how it integrates with product teams. Ask about current research challenges and priorities. Ask about how research findings typically get used in decisions. These questions demonstrate strategic thinking and help you assess whether the role is right for you.

How important are technical skills like SQL for UX researchers?

Importance varies by organization. Product-led companies with strong data cultures increasingly expect researchers to query databases and analyze behavioral data independently. Design agencies and early-stage startups may emphasize qualitative skills more heavily. Almost every role expects solid command of research methods, tools, and data analysis, but how far that extends into skills like SQL depends on the team. Review job descriptions and ask about expectations during interviews.

How do I demonstrate impact if my research recommendations were not implemented?

Implementation depends on many factors beyond research quality. Focus on the quality of your insights, how well you communicated them, and what you learned about driving change. If other projects did lead to improvements in existing products, such as usability testing that uncovered opportunities for enhancement or work that increased user engagement or retention, mention those as well. You can also discuss follow-up conversations where you advocated for findings or situations where recommendations were implemented later when circumstances changed.

Where do you go from here?

If you are not sure where to start, Uxcel's UX Researcher career path provides a structured learning sequence that starts with foundational skills and combines lessons with projects. It covers research methods, analytics, ethics, accessibility, stakeholder communication, and the strategic skills that separate senior researchers from junior ones. For interview preparation specifically, Uxcel Pulse provides a tailored assessment of your capabilities based on your role and experience level, identifying exactly which competencies need work before you walk into that interview room.

Create an account on Uxcel and get one step closer to your dream UX researcher job today.