A clearly defined problem is not automatically a valuable opportunity. Product teams face more good problems than they can realistically address, which makes evaluation and prioritization essential.

Opportunity assessment is about deciding where effort will have the most meaningful effect. This requires looking beyond the existence of a problem and considering its impact on users, its relevance to business goals, and the size of the space it affects. Some problems feel urgent but influence only a small group. Others affect many users but offer limited strategic value. Strong product decisions recognize these differences.

Every opportunity is shaped by constraints. Market size, technical capacity, regulatory boundaries, and timing all influence what is feasible. Ignoring these factors often leads to recommendations that sound reasonable but cannot be executed or sustained.

In case studies, opportunity assessment shows mature product judgment. It explains why one problem deserves attention over others, how trade-offs were considered, and how expected user outcomes connect to business results.

Assessing defined problems for a case study

A well-defined problem is only a starting point. Opportunity assessment asks whether solving this problem would create meaningful change for users and for the product. Not every clear problem justifies investment, even if it sounds reasonable or familiar.

A strong opportunity affects users in a noticeable way. How noticeable depends on how often the problem occurs, how many users experience it, and how much effort or risk it adds to their journey. A problem that happens rarely or only in edge cases may still matter, but its impact is different from one that blocks progress for many users.

Opportunity assessment also considers whether solving the problem leads to measurable outcomes. Improvements that reduce time, effort, cost, or errors are easier to justify than changes that only feel helpful. Clear outcomes help teams reason about value without jumping into solutions too early.

Before moving forward, it is important to ask whether the problem has enough weight to influence product direction. This step filters out issues that are valid but not significant enough to compete with other opportunities.[1]

Estimating potential impact on users versus reach

Impact and reach are often confused, but they describe different aspects of opportunity. Impact reflects how much a problem affects an individual user when it occurs. Reach reflects how many users experience that problem at all.

Some problems have a high impact but a low reach. They may strongly affect a small group of users, such as power users or specific segments. Other problems have a wide reach but low impact, causing minor friction for many users without blocking progress.

Opportunity assessment compares these dimensions instead of favoring one by default. A problem with moderate impact and wide reach can be as valuable as a severe problem with limited reach. The key is understanding the trade-off and making it explicit.

This comparison helps prevent biased decisions. Teams often overvalue problems that feel dramatic or personally familiar, while overlooking quieter issues that influence overall product performance. Separating impact from reach brings balance and clarity to prioritization discussions.
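
To make the trade-off between impact and reach explicit, it can help to put rough numbers on both dimensions. The sketch below is only an illustration: the `Problem` class, the 0 to 1 impact scale, the multiplication used to combine the two, and all figures are assumptions, not a standard method.

```python
from dataclasses import dataclass


@dataclass
class Problem:
    name: str
    reach: int      # users who experience the problem in a period (hypothetical)
    impact: float   # severity per affected user on an assumed 0-1 scale


def weighted_effect(problem: Problem) -> float:
    """Rough combined score: users reached times per-user impact."""
    return problem.reach * problem.impact


# Illustrative numbers only, not drawn from any real product.
severe_but_narrow = Problem("export fails for power users", reach=2_000, impact=0.9)
mild_but_wide = Problem("slow search results", reach=12_000, impact=0.15)

for p in (severe_but_narrow, mild_but_wide):
    print(f"{p.name}: reach={p.reach}, impact={p.impact}, "
          f"effect={weighted_effect(p):.0f}")
```

With these assumed inputs, both problems land on roughly the same combined score, which is the point: neither dimension wins by default, and the choice between them has to be argued rather than assumed.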

Gauging market size at a high level in case studies

Market sizing in case studies is not about precision or financial forecasting. Its role is to test whether an opportunity is large enough to justify attention compared to other possible problems. A rough but well-reasoned estimate is more valuable than a detailed but fragile calculation.

High-level market sizing usually starts by defining the relevant audience. This means identifying who actually experiences the problem, not everyone who could theoretically use the product. Narrowing the group based on behavior, context, or needs helps avoid unrealistic assumptions.

From there, the estimate focuses on the order of magnitude. Instead of exact numbers, teams reason in ranges and proportions. For example, they ask whether the opportunity affects thousands or millions of users, or whether it represents a small slice or a meaningful share of the existing user base. This level of detail is often enough to compare opportunities.

Market size should also be interpreted alongside impact. A large audience with low motivation to change may represent a weaker opportunity than a smaller group with a critical need. The goal is not to defend a number, but to show why the problem is or is not strategically significant.[2]
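
Reasoning in ranges and orders of magnitude can be shown with a few lines of arithmetic. This is a minimal sketch under stated assumptions: the function names, the 800,000 active users, and the 5 to 15 percent share are all hypothetical and exist only to show the shape of the estimate.

```python
import math


def estimate_range(total_users, share_low, share_high):
    """Return (low, high) counts of users who plausibly experience the problem."""
    return total_users * share_low, total_users * share_high


def order_of_magnitude(n):
    """Largest power of ten at or below n, e.g. 45_000 -> 10_000."""
    return 10 ** math.floor(math.log10(n))


# Hypothetical inputs: 800k active users, of whom 5-15% hit the problem.
low, high = estimate_range(800_000, 0.05, 0.15)

print(f"Affected users: roughly {low:,.0f} to {high:,.0f}")
print(f"Order of magnitude: {order_of_magnitude(low):,} "
      f"to {order_of_magnitude(high):,}")
```

Stating the low and high assumptions separately keeps the estimate honest: a reader can see which input drives the result and how the conclusion would shift if that input changed.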

Pro Tip! If assumptions feel shaky, explain how better data could change the estimate instead of forcing confidence.

Identifying business constraints that shape feasibility in a case study

Constraints determine whether an opportunity is realistic under current conditions. A problem can be valuable and well-scoped, yet still be impractical if key limits are ignored.

Business constraints often come from capacity, timing, and strategy. Limited engineering resources may prevent big architectural changes. Fixed deadlines can make long-term improvements risky. Strategic focus also matters. An opportunity that improves retention may lose priority if the business is under pressure to grow revenue or enter a new market.

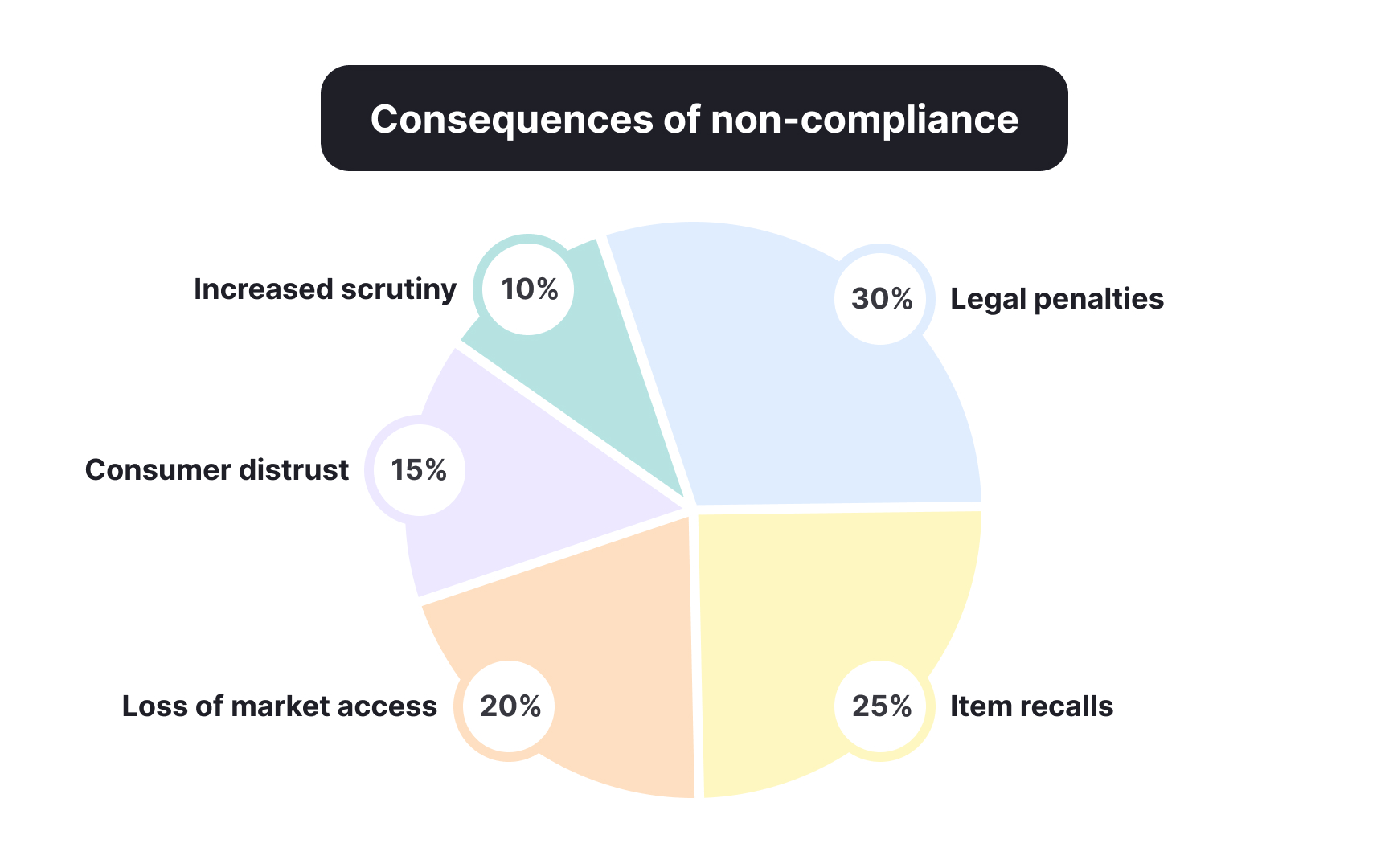

Regulatory constraints shape what is permitted and how long delivery may take. Products that process personal data face rules around storage, consent, and access. In regulated industries such as finance, healthcare, or education, compliance reviews, audits, or certifications can add significant effort and delay.

Constraints do not invalidate opportunities, but they change how they should be evaluated.[3]

Pro Tip! If constraints mainly affect delivery speed, the opportunity may still be valid but better scheduled for later.

Recognizing operational constraints in a case study

Operational constraints describe what the organization can realistically support on a day-to-day basis. Even when a problem is valuable and compliant, it may exceed current operational capacity.

Common operational constraints include team skills, system maturity, and ongoing maintenance needs. For example, an opportunity may require frequent data updates, manual reviews, or customer support involvement. If these processes are not already in place, the real cost of the opportunity increases beyond initial development.

Dependencies also introduce limits. Products often rely on internal teams, vendors, or partners to function correctly. If a solution depends on data quality, supplier reliability, or cross-team coordination, weak links can slow delivery or reduce consistency.

Operational constraints do not mean an idea is wrong. They explain effort, risk, and sustainability. An opportunity that creates strong user value may still be postponed if it introduces complexity that the organization cannot absorb yet.

In case studies, recognizing operational constraints signals practical judgment. It shows awareness that shipping a feature is not the finish line. Supporting it over time matters just as much.[4]

Comparing multiple problems competing for attention in a case study

Product teams rarely evaluate a single problem in isolation. More often, several valid opportunities compete for the same time and resources. Opportunity assessment helps compare them using shared criteria rather than personal preference or urgency.

A useful comparison looks at user impact, reach, effort, and risk side by side. One opportunity may affect fewer users but solve a critical problem. Another may reach many users but offer smaller gains. Seeing these differences clearly helps avoid defaulting to the loudest or most familiar issue.

Comparison also surfaces opportunity cost. Choosing one problem means delaying or rejecting others. Making that trade-off explicit shows mature product thinking. It demonstrates awareness that prioritization is not about finding a perfect option, but about choosing the most defensible one under current conditions.

In case studies, comparing opportunities strengthens the narrative. It explains why one direction was chosen over alternatives, instead of presenting the decision as obvious or inevitable.
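
A side-by-side comparison on user impact, reach, effort, and risk could be sketched as follows. Everything here is an assumption made for illustration: the `Opportunity` class, the scales, the scoring formula, and the three example candidates. A real team would choose its own criteria and weights.

```python
from dataclasses import dataclass


@dataclass
class Opportunity:
    name: str
    reach: int       # affected users per quarter (hypothetical)
    impact: float    # per-user benefit, assumed 0-1 scale
    effort: float    # estimated person-weeks
    risk: float      # assumed chance the bet fails, 0-1

    def score(self) -> float:
        # Expected benefit per unit of effort; this formula is an assumption.
        return self.reach * self.impact * (1 - self.risk) / self.effort


candidates = [
    Opportunity("fix checkout errors", reach=3_000, impact=0.8, effort=6, risk=0.2),
    Opportunity("simplify settings page", reach=20_000, impact=0.1, effort=4, risk=0.1),
    Opportunity("rebuild reporting", reach=5_000, impact=0.6, effort=20, risk=0.5),
]

ranked = sorted(candidates, key=lambda o: o.score(), reverse=True)

print(f"{'opportunity':<24}{'reach':>8}{'impact':>8}{'effort':>8}{'risk':>6}{'score':>8}")
for o in ranked:
    print(f"{o.name:<24}{o.reach:>8}{o.impact:>8.1f}{o.effort:>8.0f}"
          f"{o.risk:>6.1f}{o.score():>8.0f}")

# Opportunity cost: what is deferred by choosing the top-ranked item.
chosen, deferred = ranked[0], ranked[1:]
print(f"Chosen: {chosen.name}; deferred: {', '.join(d.name for d in deferred)}")
```

The score is a conversation aid, not a verdict. Its main value is that every candidate is judged on the same criteria and that the deferred options are named rather than silently dropped.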

Deciding what makes a problem worth solving now in a case study

Not every high-priority problem should be addressed immediately. Timing plays a critical role in opportunity assessment. Deciding what to solve now means considering readiness, momentum, and expected return in the current context.

A problem is often worth solving now when its impact aligns with immediate product goals. This could include reducing churn, unlocking growth, or removing friction in a core workflow. Even strong problems may wait if they support long-term value but do not influence near-term outcomes.

Timing is also shaped by confidence and constraints. Problems backed by recent data, clear signals, or repeated user feedback are safer to act on than those built on assumptions. Availability of resources and focus further influence whether now is the right moment.

In case studies, explaining why a problem is tackled now strengthens the recommendation. It shows awareness that prioritization is not only about importance, but about sequencing decisions over time.

Linking expected user outcomes to business metrics

An opportunity becomes actionable when user outcomes connect to business results. Improving an experience is not enough on its own. Opportunity assessment requires clarity on how that improvement supports product or company goals.

User outcomes describe what changes for users when the problem is solved. This may include completing tasks faster, making fewer errors, or feeling more confident using the product. Business metrics reflect how these changes affect the product, such as higher retention, increased activation, reduced support costs, or improved conversion.

The connection between the two should be logical, not assumed. For example, reducing friction in onboarding links to activation and early retention. Improving reliability in a core workflow can reduce churn or support volume. Clear links help avoid vague claims about value.

In case studies, this connection shows mature product thinking. It explains not just why users benefit, but why the business should invest. When user outcomes and metrics align, opportunity assessment moves from intuition to evidence-based reasoning.[5]
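
The onboarding example can be turned into a short worked calculation that ties a user outcome (more people completing onboarding) to a business metric (activated users). All figures below are hypothetical, and treating onboarding completion as a stand-in for activation is itself an assumption that a case study would need to state.

```python
def activated_users(signups: int, completion_rate: float) -> float:
    """Users who finish onboarding, used here as a stand-in for activation."""
    return signups * completion_rate


# Hypothetical figures for illustration only.
monthly_signups = 10_000
baseline_rate = 0.40      # current onboarding completion
expected_rate = 0.46      # assumed rate after reducing friction

baseline = activated_users(monthly_signups, baseline_rate)
expected = activated_users(monthly_signups, expected_rate)
uplift = expected - baseline

print(f"Baseline activated users per month: {baseline:,.0f}")
print(f"Expected activated users per month: {expected:,.0f}")
print(f"Assumed uplift: {uplift:,.0f} users ({uplift / baseline:.0%})")
```

Writing the link out this way exposes the assumption that carries the argument, here the expected completion rate, so it can be challenged or backed with data.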

Articulating a clear opportunity rationale in a case study

Opportunity assessment only matters if it can be explained clearly. A strong opportunity rationale summarizes why a specific problem deserves focus, based on impact, feasibility, and value.

A clear rationale brings together several threads. It restates the problem briefly, highlights the affected users, and explains the expected outcome. It also acknowledges key constraints and trade-offs, showing why this opportunity was chosen over others.

Good rationales avoid sounding defensive or absolute. They do not claim certainty. Instead, they show structured judgment and awareness of risk. This makes the recommendation easier to trust, even when assumptions are involved.

In case studies, the opportunity rationale is often the moment where evaluators decide whether the thinking holds together. Clarity here turns analysis into a convincing product direction.

Pro Tip! If the rationale needs many qualifiers to sound reasonable, the opportunity may not be strong enough yet.