In product case studies, solutions are not judged by how impressive they look but by how clearly they connect back to real user needs. Reviewers look for evidence of reasoning: why a solution exists, what alternatives were considered, and how constraints shaped the final direction. A feature list alone rarely answers these questions.

Strong case studies demonstrate a deliberate solution process. Ideas start from validated problems, expand into multiple options, and are narrowed down through clear criteria. This approach helps avoid feature-led narratives, where solutions appear arbitrary or driven by assumptions. Instead, solutions are framed as responses to specific user goals and behaviors.

Equally important is acknowledging limits. Feasibility, edge cases, and constraints reveal how realistic the thinking is. They show that the solution was not imagined in isolation, but shaped by technical, business, and contextual realities. When authors can clearly explain not only what they built, but why this solution made sense over others, their case studies feel grounded, credible, and senior.

Rewriting feature descriptions as problem-driven solutions in a case study

Many case studies begin their solution section with a feature list. This often weakens the story, even when the product work itself was strong. Features describe what was built, but they do not explain why the work mattered to users or what problem triggered the decision. As a result, reviewers are left guessing about the reasoning behind the solution.

A stronger approach starts by stepping back from the feature and reframing it as a response to a user problem. Instead of presenting a capability, the case study should first clarify what users were struggling with and why that struggle mattered. This shift helps separate real user needs from surface-level requests or internal assumptions. It also reduces the risk of feature creep, where solutions grow without a clear connection to value.

When rewriting a feature description, focus on intent rather than implementation. Describe what users were trying to achieve, what was blocking them, and how the solution addressed that gap. The feature then becomes evidence of problem-solving, not the centerpiece. This makes the solution feel deliberate and grounded rather than decorative or trend-driven.[1]

Pro Tip! If a solution sounds impressive but could fit any product, it is probably missing a clear user problem.

Connecting user insights to solution directions in a case study

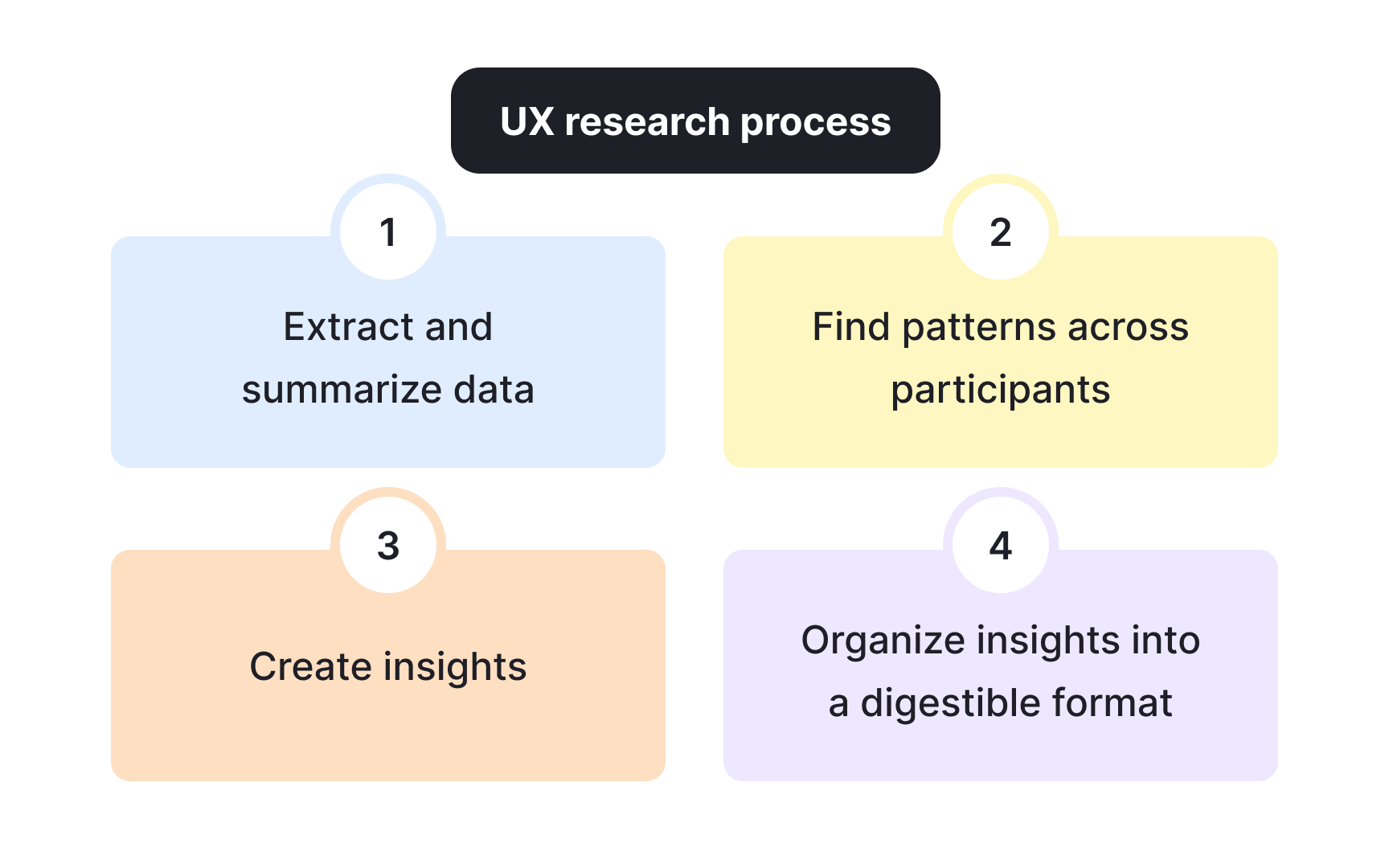

User research often appears in case studies as a separate section that never fully connects to the solution. Insights are listed, then the narrative jumps straight to what was built. This gap makes the solution feel assumed rather than reasoned, even when the research was thorough.

A strong case study creates a visible link between insights and solution direction. Each major solution choice should be traceable back to something users said, did, or struggled with. This does not mean mapping every feature to a quote. It means showing how patterns in behavior or feedback shaped the direction of the solution space.

To do this well, focus on synthesis rather than raw data. Group insights into themes that explain user intent, constraints, or motivation. Then show how those themes narrowed the range of possible solutions. This approach demonstrates that the solution was not chosen because it felt right, but because it addressed a clearly defined opportunity supported by evidence.

When this connection is clear, the case study reads as a sequence of decisions instead of a collection of artifacts. Reviewers can follow the logic, understand trade-offs, and trust that the solution emerged from learning rather than guesswork.[2]

Pro Tip! If an insight does not influence a decision, consider removing it or explaining why it did not change the direction.

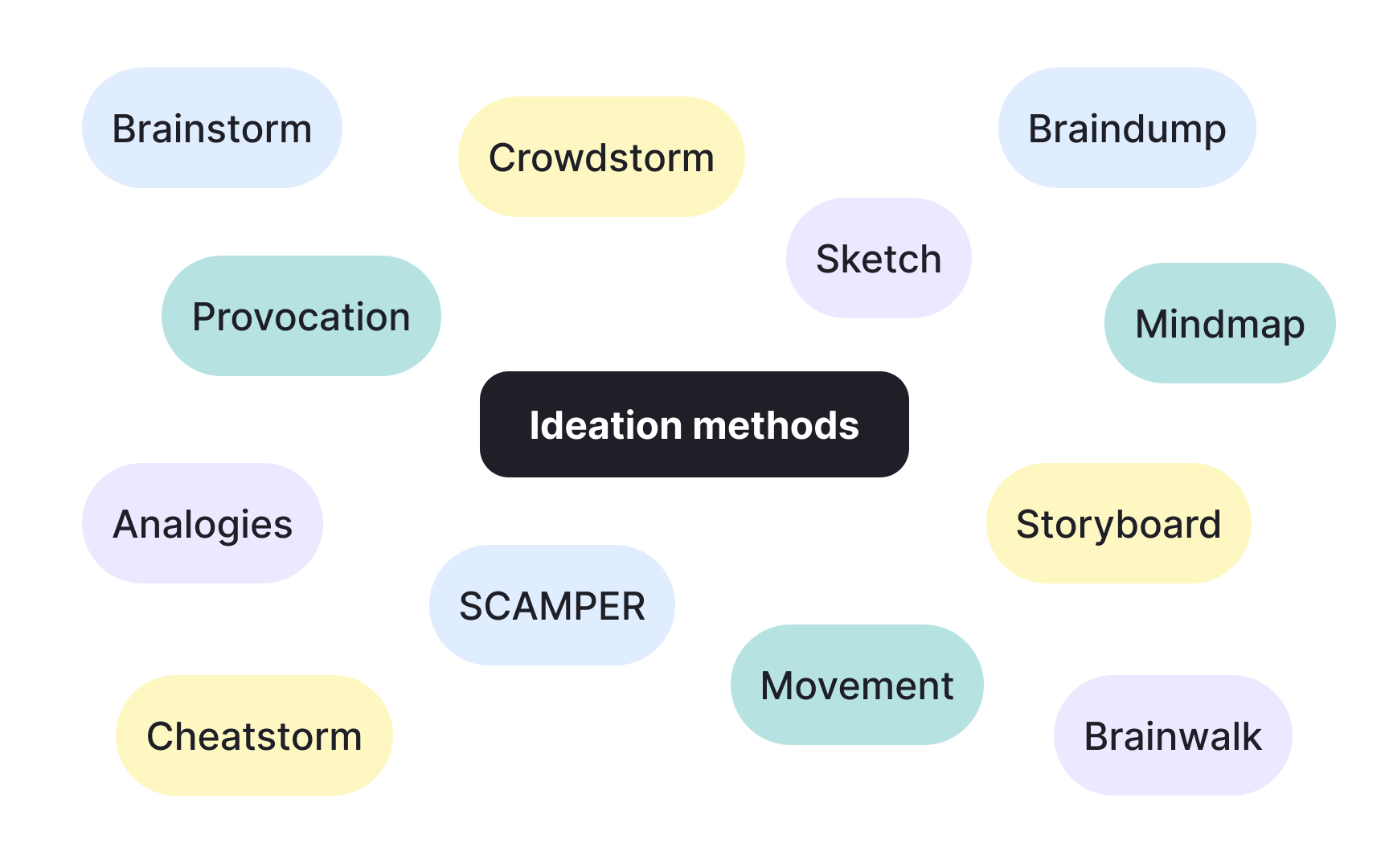

Using ideation frameworks to show range, not chaos, in a case study

When case studies mention ideation without showing how ideas were generated, solutions can feel accidental. A short, structured example helps reviewers see that exploration was intentional, not random. Ideation frameworks are useful here because they guide thinking while keeping the solution space readable.

Consider a team working on a drop-off problem during onboarding. Instead of jumping straight to adding tooltips, the team frames the problem around what users are trying to achieve in their first session. Using a simple structured brainstorm, the team generates ideas across different angles: reducing steps, changing defaults, delaying complexity, or shifting guidance to later moments. Each idea addresses the same user goal but in a different way.

In a case study, this example does not need diagrams or method names. What matters is showing that ideas were explored across distinct directions before narrowing down. This signals that the final solution was chosen after comparison, not because it appeared first or felt familiar. A small set of clearly different ideas communicates range without overwhelming the narrative.[3]

Pro Tip! If every idea improves the same thing in the same way, ideation likely started too close to a solution.

Comparing alternative solutions in a case study

When a case study jumps straight to a final solution, the decision often feels obvious in hindsight. Including a short comparison of realistic alternatives helps show how uncertainty was handled and why one direction made sense.

Imagine a team working on low engagement with a reporting feature. One option is to add deep customization so users can tailor reports. Another is to introduce ready-made presets based on common use cases. A third is to improve onboarding for the existing reports without changing the feature itself. Each option addresses the same problem in a different way.

A strong case study briefly explains the trade-offs. Customization increases flexibility but adds complexity and cost. Presets reduce effort for most users and are faster to ship. Better onboarding improves understanding but does not change the core value. The chosen solution is justified through user impact, feasibility, and expected outcomes rather than personal preference.

This comparison makes the solution feel chosen, not assumed, and helps reviewers trust the reasoning behind the decision.[4]

Avoiding feature-led storytelling in a case study

Feature-led storytelling happens when a case study explains progress through what was built instead of what was solved. The narrative often follows a timeline of releases, screens, or capabilities, while the underlying user problem fades into the background. Even strong solutions can appear shallow when the story centers on delivery rather than reasoning.

A more effective case study keeps the problem visible throughout the solution section. Instead of saying what was added or changed, the story explains how each decision moved users closer to a desired outcome. This shift helps reviewers understand intent and prevents the solution from looking like a collection of disconnected features.

Feature-led storytelling is especially risky when teams respond directly to requests or stakeholder input. Without reframing those inputs as problems to solve, the case study can read like order-taking. Clear problem framing keeps the focus on impact and shows that decisions were driven by user needs rather than convenience or pressure.[5]

Framing solutions as hypotheses, not final answers, in a case study

In many case studies, solutions are described as if the team were certain from the start. This rarely matches reality. Most product decisions are made with partial data, time pressure, and open questions. When a case study hides this uncertainty, the work can feel less credible.

A more realistic approach is to describe the solution as a belief the team chose to test. For example, a team might believe that simplifying a complex setup flow will help users reach their first successful action faster. The solution is then presented as one way to test that belief, not as the only possible answer.

This framing makes the reasoning clearer. It explains what the team expected to change and why this direction was chosen over others. It also creates space to talk about what was measured, what worked, and what needed adjustment. Instead of sounding defensive or overly polished, the case study reflects how product decisions actually happen.

Framing solutions this way signals confidence without pretending certainty. It shows that progress came from learning and iteration, not from guessing right on the first try.[6]

Pro Tip! If the case study cannot explain what would have proven the idea wrong, the hypothesis is missing.

Addressing feasibility in solution explanations

Some case studies describe solutions that sound ideal but ignore whether they could realistically be built. This creates doubt, even when the idea itself is strong. Reviewers often look for signs that feasibility was considered early, not discovered too late.

A solid case study briefly explains how feasibility shaped the solution. This might include technical limits, team capacity, data availability, or dependencies on other systems. The goal is not to list constraints, but to show how they influenced decisions. For example, a team may choose a simpler approach first because it can be delivered with existing infrastructure, even if a more advanced idea exists.

Including feasibility in the solution narrative shows practical judgment. It explains why certain options were postponed, simplified, or rejected. This makes the solution feel grounded in reality and helps reviewers trust that the work could succeed outside a hypothetical setting.

Pro Tip! Mention feasibility only where it changed a decision, not as a separate checklist.

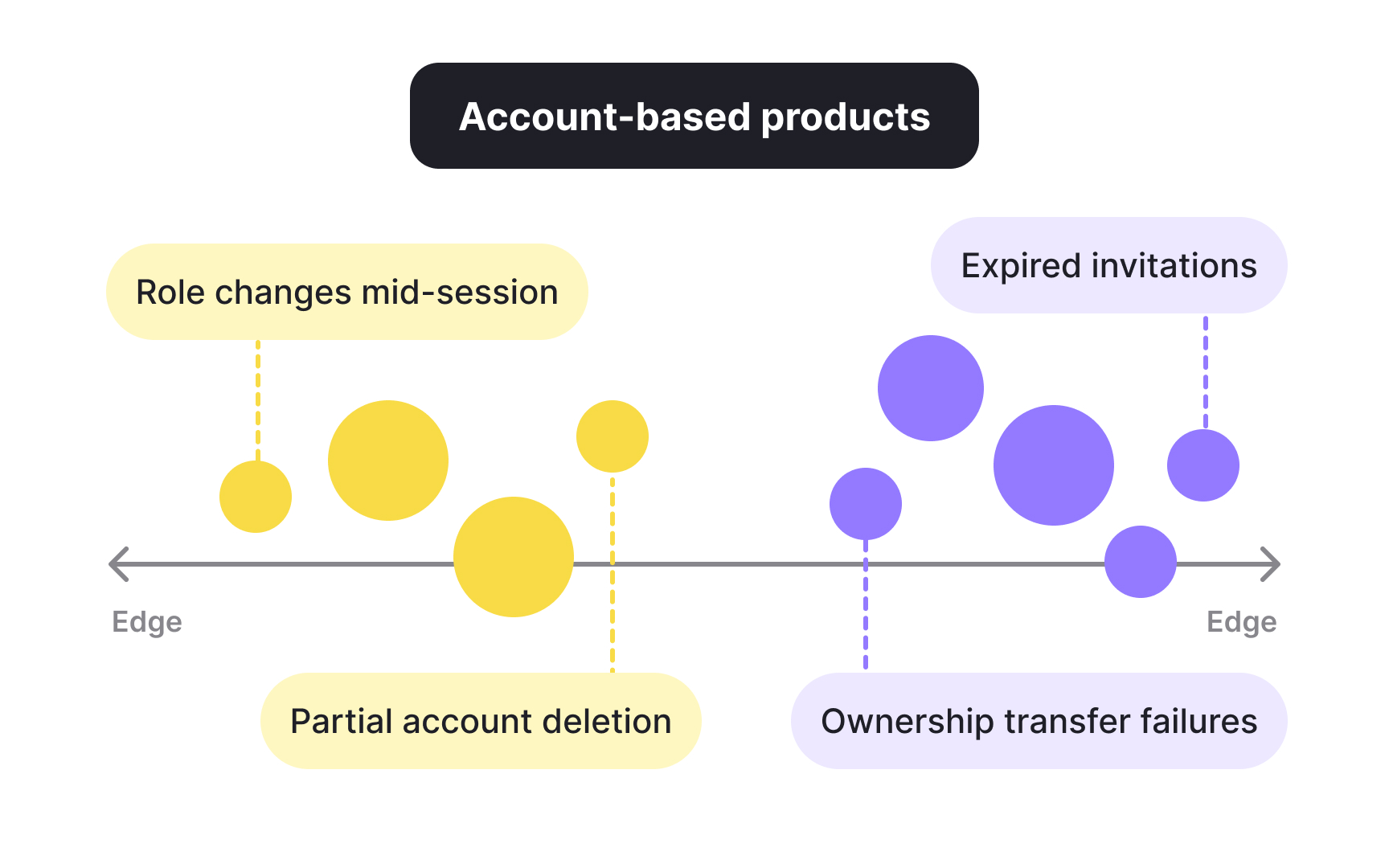

Handling edge cases that influence the final solution

Edge cases are often treated as afterthoughts, yet they can change a solution in meaningful ways. When case studies ignore them, solutions may appear fragile or overly optimistic. Addressing edge cases shows depth of thinking and awareness of real-world use.

A strong case study focuses on edge cases that affect the solution direction, not every rare scenario. For example, users with incomplete data, interrupted flows, or limited permissions may require changes to how a feature behaves or how success is defined. These cases often reveal hidden assumptions in the original idea.

By explaining how edge cases were handled, the case study shows that the solution was tested against more than a happy path. It also demonstrates restraint by acknowledging limits without expanding scope unnecessarily. This balance signals maturity and helps reviewers see the solution as robust rather than idealized.

Pro Tip! If an edge case changes the main flow, it deserves mention. If it does not, it can usually be left out.

Balancing desirability, feasibility, and viability in case study decisions

In real projects, product decisions often pull in different directions. Users may want one thing, the team may be able to build another, and the business may need something else. Case studies that acknowledge this tension feel more honest and easier to trust.

Consider a team responding to a strong request from power users for advanced reporting controls. Research shows that these users rely heavily on reports, which makes the solution highly desirable. However, engineering explains that the feature would require major changes to data models and ongoing support, which lowers feasibility. At the same time, the business sees limited revenue impact because only a small segment would use it, which weakens viability.

A more balanced alternative might offer simplified presets that address the most common needs. This option is still desirable for many users, feasible within current systems, and viable because it benefits a larger segment at lower cost. In a strong case study, the team explains this trade-off openly and shows why the second option was chosen at this stage.

Making these dimensions visible helps reviewers understand not just what decision was made, but why it made sense in context.

Pro Tip! Labeling each trade-off in plain language often clarifies decisions more than naming the framework itself.

References

- How to translate

- Product discovery methods every PM should know | Plane Blog

- Product Ideation: How to Keep Your Feature Ideas Flowing | ProdPad

- Making The Shift From Feature-Based to Outcome-Led Roadmaps | Steve Forbes - Innovation | Product Development | Strategy

- Avoiding Feature Creep: Tips to Keep Your Product Focused

- Product discovery methods every PM should know | Plane Blog