Strong case studies rarely fall apart because teams lack ideas. They fall apart when prioritization decisions are unclear, weakly justified, or disconnected from real constraints. When everything looks important, credibility comes from showing how choices were made and what was intentionally set aside.

Prioritization in product work sits at the intersection of user value, business viability, delivery effort, and timing. Case studies that communicate this well surface the tension between these forces instead of hiding it. They show how frameworks like RICE, MoSCoW, or impact versus effort helped structure decisions, while making it clear that numbers and labels alone were not enough.

Clear prioritization stories explain why certain features shaped the MVP, why others were excluded, and how competing requirements were handled under pressure. They reveal how teams protected long-term product direction while responding to stakeholder and sales demands. This level of clarity signals strategic thinking, maturity, and ownership of decisions, rather than a focus on outcomes alone.

Framing prioritization as a case study decision

Prioritization in a case study is not a background activity. It is a visible decision point that explains why the product took its final shape. A strong case study does not jump from problem to solution. It pauses at the moment where multiple ideas compete and shows how the team decided what deserved attention first.

This framing starts by clarifying the constraints that made prioritization necessary. These can include limited engineering capacity, delivery deadlines, technical risk, or strategic focus. By anchoring prioritization in real limits, the case study avoids sounding hypothetical or idealized.

Clear prioritization framing also highlights consequences. When one feature is chosen, another is delayed or dropped. Making these trade-offs explicit helps readers understand that decisions were intentional, not accidental. This turns prioritization into evidence of judgment rather than a hidden step in the process.[1]

Choosing a prioritization framework that fits the story of a case study

Prioritization frameworks support decisions only when they match the situation. In case studies, their role is to clarify trade-offs, not to demonstrate tool knowledge. The framework choice signals what mattered most when decisions had to be made.

Different frameworks highlight different dimensions of value and cost. Selecting one reflects the nature of the decision rather than personal preference:

- RICE (Reach, Impact, Confidence, Effort) supports comparisons across a broad user base by scoring every initiative on the same four factors, which helps rank competing initiatives.

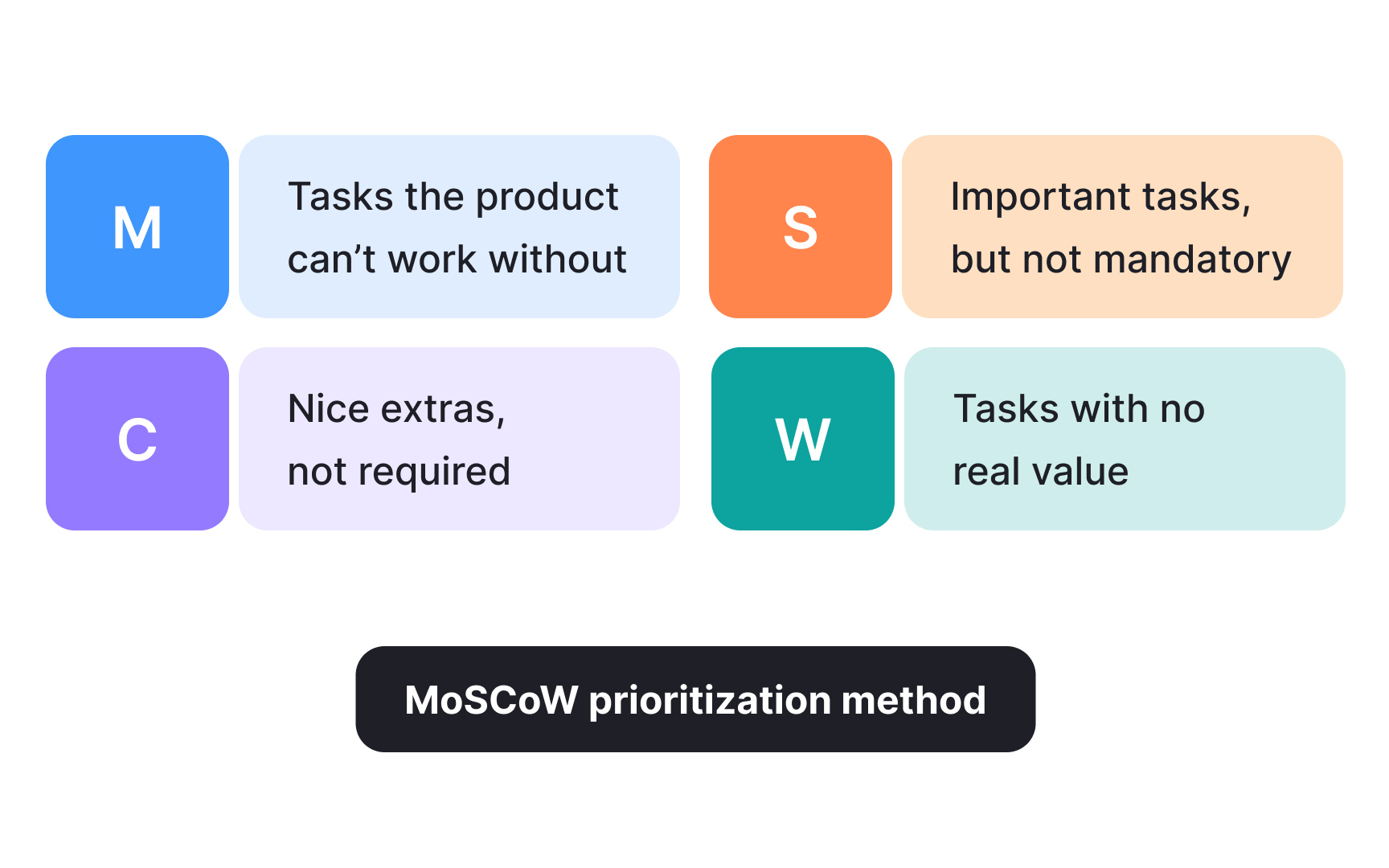

- MoSCoW helps reduce scope when a release must ship under fixed time or capacity limits.

- Impact versus effort offers a fast way to balance expected value against delivery cost during early alignment.

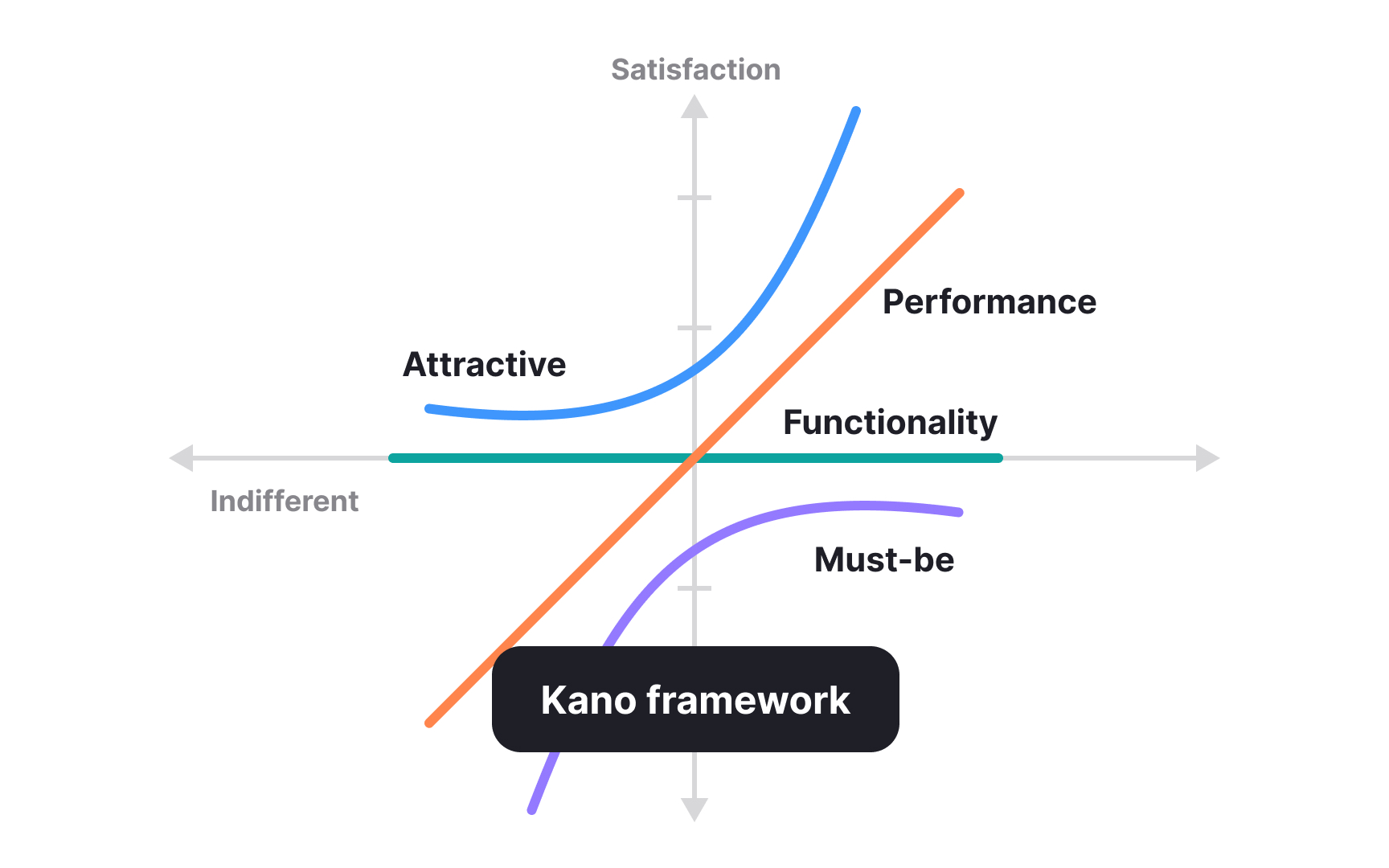

- Kano focuses on user satisfaction by separating baseline expectations from features that create delight.

- MVP feature exclusion keeps early releases focused by defining what not to build in order to validate the core idea.

In case studies, frameworks work best when they are briefly introduced and clearly tied to the decision they enabled. The emphasis stays on why this structure helped the team move forward, not on how the framework works in theory.[2]
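To make the RICE option concrete, here is a minimal sketch of how a score can turn rough estimates into a ranking. It assumes the commonly used formula, reach times impact times confidence divided by effort. The initiative names, numbers, and units are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class Initiative:
    name: str
    reach: float       # users affected per period (hypothetical unit)
    impact: float      # relative impact per user, e.g. 0.25 to 3
    confidence: float  # 0.0 to 1.0, how much the estimates are trusted
    effort: float      # person-months (hypothetical unit)


def rice_score(item: Initiative) -> float:
    """Commonly used RICE formula: (reach * impact * confidence) / effort."""
    if item.effort <= 0:
        raise ValueError("effort must be positive")
    return item.reach * item.impact * item.confidence / item.effort


def rank(items: list[Initiative]) -> list[Initiative]:
    """Return initiatives sorted from highest to lowest RICE score."""
    return sorted(items, key=rice_score, reverse=True)


# Hypothetical backlog, not taken from any real case study.
backlog = [
    Initiative("bulk export", reach=400, impact=1.0, confidence=0.8, effort=2.0),
    Initiative("onboarding checklist", reach=2000, impact=2.0, confidence=0.5, effort=4.0),
    Initiative("custom themes", reach=300, impact=0.5, confidence=1.0, effort=3.0),
]

for item in rank(backlog):
    print(f"{item.name}: {rice_score(item):.0f}")
```

In a case study, the ranking itself matters less than the estimates behind it. Showing which inputs were uncertain, and why the team still acted on them, is what ties the score back to the decision.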

Pro Tip! Avoid stacking frameworks in one decision. One well-justified choice is clearer than several lightly explained ones.

Weighing user value against business viability in a case study

Prioritization decisions in case studies often sit between what benefits users and what sustains the business. These forces do not always point in the same direction. A convincing case study shows how teams navigated this tension instead of presenting decisions as naturally aligned.

User value reflects whether a feature meaningfully solves a problem, reduces friction, or improves experience quality. Business viability introduces limits such as revenue goals, scalability, operational cost, or strategic positioning. When one side dominates without explanation, prioritization decisions lose credibility.

Strong case studies make the trade-off explicit. They explain why certain user needs could not be addressed immediately or why business-driven constraints reshaped the solution. By acknowledging both sides and explaining how a balance was reached, teams demonstrate realistic decision-making rather than ideal outcomes.[3]

Pro Tip! If business goals influenced a decision, name them directly. Vague references to “strategy” weaken prioritization logic.

Defining MVP scope through exclusion in a case study

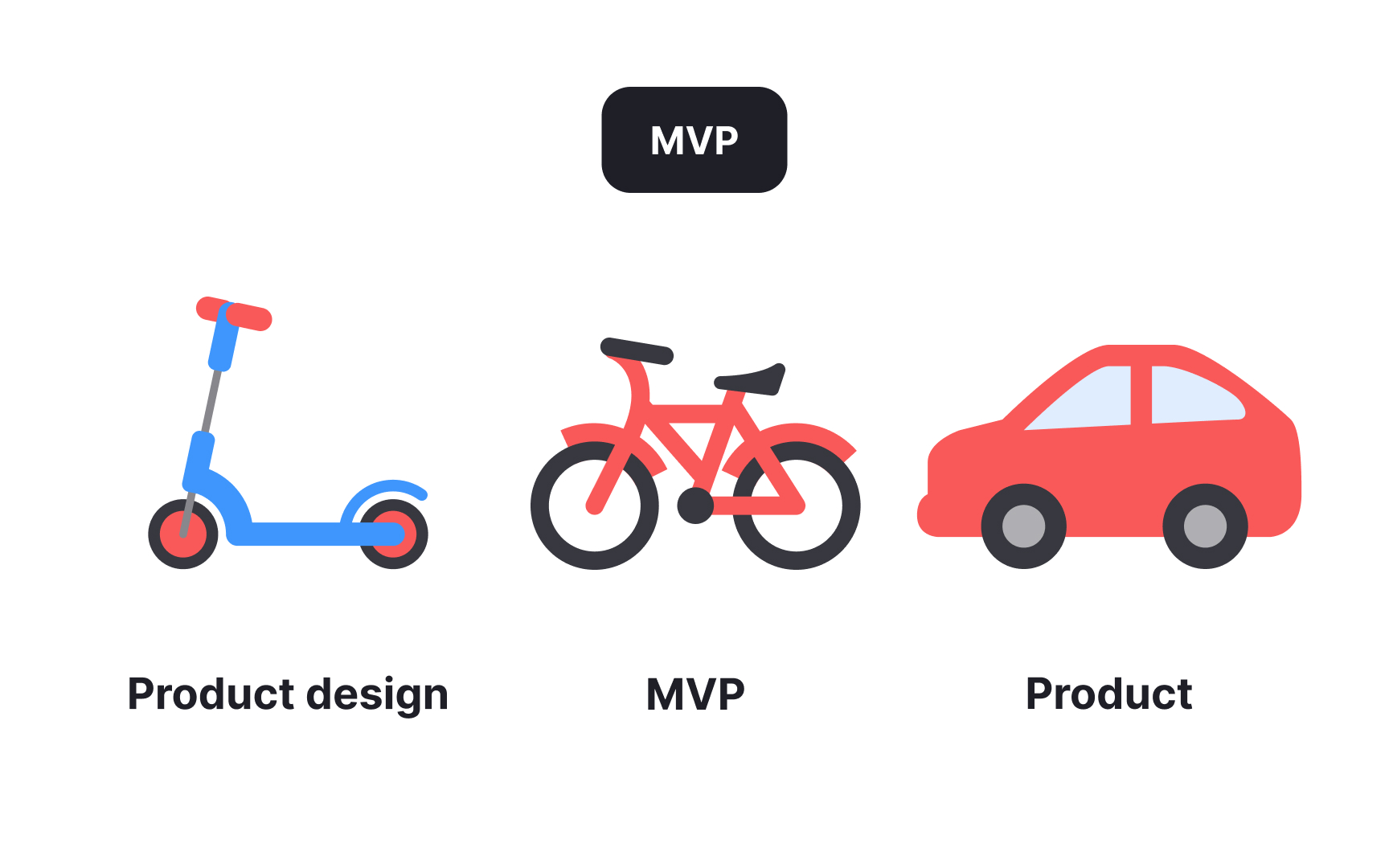

An MVP is not a smaller version of the final product. It is a focused experiment designed to validate a core assumption. In case studies, MVP scope becomes meaningful when teams clearly explain why only a limited set of features was chosen and why others were intentionally excluded.

Deliberate exclusion protects focus. Each additional feature increases development effort, maintenance cost, and cognitive load for users. When teams attempt to include too much, the MVP loses its ability to test a clear hypothesis. Strong case studies show how teams resisted this pressure and kept the scope narrow on purpose.

Clear MVP decisions also connect exclusions to learning goals. Features may be postponed because they do not contribute to validating the main problem, introduce unnecessary technical risk, or delay feedback from users. Explaining these reasons demonstrates that the MVP was shaped around learning, not comfort or completeness.[4]

Pro Tip! If a feature was excluded despite strong stakeholder interest, explain why learning speed mattered more than coverage.

Explaining what not to build and why in a case study

Saying “no” is one of the clearest signals of product judgment in a case study. Decisions gain credibility when teams explain why certain features were rejected, even when those features seemed attractive or commercially tempting.

Clear explanations focus on alignment rather than preference. Features may be excluded because they conflict with the product’s long-term direction, add complexity without solving a shared problem, or serve only a narrow edge case. When this reasoning is made explicit, the decision feels strategic rather than defensive.

Strong case studies also show how teams communicated these decisions. Instead of framing exclusions as limitations, they explain what was protected by saying no. This can include product simplicity, maintainability, or the ability to serve a broader group of users more effectively.

By articulating what was not built and why, teams demonstrate ownership of the product direction. This shows that decisions were guided by principles and priorities, not short-term pressure.

Pro Tip! When explaining a “no,” connect it to a principle the team consistently applied elsewhere in the case study.

Prioritizing conflicting requirements in a case study

Conflicting requirements show up in real product work almost immediately. A sales request may promise revenue but increase complexity. Engineering may flag technical risk while stakeholders push for speed. Users may want flexibility that the system cannot safely support yet. Case studies become stronger when these tensions are named instead of smoothed over.

Prioritization decisions gain clarity when teams first separate the underlying needs from proposed solutions. What sounds like a feature request is often a signal of a deeper problem, such as visibility, control, or trust. Reframing the conflict around the real need helps teams compare options more fairly.

Resolution rarely means making everyone happy. It involves trade-offs that are explained and owned. Teams gather input, challenge assumptions, and assess impact across users, delivery, and long-term direction. The final decision reflects what the product can support now without creating future risk.

By showing how these conflicts were handled, case studies reflect real decision-making under pressure.[5]

Pro Tip! Describe the conflict before the solution. Realistic tension makes the resolution feel earned.

Making trade-offs visible, not implicit, in a case study

Trade-offs often exist in case studies, but they are not always visible. When teams move too quickly from decision to outcome, the reasoning behind the choice becomes implied instead of stated. This weakens the credibility of the case study, even if the final solution is strong.

Explicit trade-offs describe what was gained and what was lost. Choosing speed may reduce flexibility. Supporting more users may limit customization. Improving short-term metrics may delay long-term improvements. When these tensions are clearly named, decisions feel intentional rather than convenient.

Strong case studies pause at these moments. They explain why one direction was chosen over another and what risks were accepted as a result. This shows that the team understood the cost of the decision and chose it deliberately, not by default.

Making trade-offs explicit helps readers trust the thinking behind the work. It turns prioritization from a background activity into a visible act of judgment.

Pro Tip! If a decision had no downside, it probably was not a real trade-off. Look for what was sacrificed.

Supporting prioritization decisions with evidence

Prioritization decisions feel fragile when they rely only on opinion. Case studies become more convincing when teams show what informed their choices, even if the data was incomplete or directional.

Evidence can take many forms. Patterns in user feedback, usage trends, known constraints, or observed behavior can all support prioritization. What matters is not perfect certainty, but showing that decisions were grounded in something observable.

Strong case studies explain how evidence influenced the decision. A feature may have been deprioritized because only a small segment needed it. Another may have moved forward because it addressed a repeated pain point. These links between insight and action make prioritization easier to follow.

By connecting decisions to evidence, case studies demonstrate disciplined thinking. They show that choices were reasoned, challenged, and defended, not simply preferred.

Pro Tip! State what evidence was missing as well as what was available. This shows awareness of uncertainty, not weakness.

Stress-testing prioritization logic in a case study

Prioritization decisions in case studies should hold up beyond the written page. Stress-testing logic means checking whether the reasoning would survive direct questions from stakeholders, peers, or interviewers. If a decision falls apart when challenged, it likely needs clearer justification.

Stress-testing focuses on pressure points. Why this feature now and not later? What would change the decision? Which assumption carried the most risk? Strong case studies anticipate these questions and address them proactively instead of waiting for criticism.

This process often reveals weak spots. A decision may rely too heavily on optimistic impact estimates or overlook long-term maintenance costs. Calling out these limitations strengthens credibility. It shows that the team understood uncertainty and still made a reasoned choice.
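One way to probe an optimistic impact estimate is a simple sensitivity check: discount the estimate and see whether the chosen option still comes out ahead. The sketch below assumes a RICE-style score, and the two options and their numbers are hypothetical.

```python
def score(reach: float, impact: float, confidence: float, effort: float) -> float:
    """RICE-style score, used here only as an assumed scoring rule."""
    return reach * impact * confidence / effort


def winner_survives(top: dict, runner_up: dict, impact_discount: float) -> bool:
    """True if `top` still outscores `runner_up` after its impact estimate
    is reduced by `impact_discount` (0.3 means 30 percent lower)."""
    discounted = dict(top, impact=top["impact"] * (1 - impact_discount))
    return score(**discounted) > score(**runner_up)


# Hypothetical estimates for the chosen option and the next-best alternative.
top = {"reach": 2000, "impact": 2.0, "confidence": 0.5, "effort": 4.0}
runner_up = {"reach": 1500, "impact": 1.0, "confidence": 0.8, "effort": 3.0}

for discount in (0.1, 0.3):
    verdict = "holds" if winner_survives(top, runner_up, discount) else "flips"
    print(f"impact discounted by {discount:.0%}: decision {verdict}")
```

If the decision flips under a modest discount, that is worth stating in the case study, because it names the assumption that carried the most risk.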

Pro Tip! If a decision did not affect direction or scope, consider removing it from the case study story. Not every choice deserves equal weight.