Every product manager makes dozens of decisions each week. Most of them are made without a clear framework, driven by habit, pressure, or whoever is loudest in the room. The problem is not a lack of effort. It is a lack of the right tools.

Decision quality is the skill that determines how far a PM goes. Early in a career, the cost of a bad decision is low. A sprint gets wasted. A feature misses the mark. But as responsibility grows, the decisions get costlier, the stakes higher, and the margin for error smaller. The PMs who advance are the ones who train their ability to distinguish signal from noise, move fast on reversible choices, slow down on irreversible ones, and avoid the cognitive traps that derail even experienced teams.

This lesson covers the mental models that matter most at the execution level: how to categorize decisions by their reversibility, how to break free from analysis paralysis, why outputs and outcomes are not the same thing, and how to stop defending bad ideas just because you have already invested in them. These are not abstract frameworks. They are practical habits that change how you operate under pressure.

Why decision quality is the real career lever

Product management is often described as a role about building things. But at its core, it is a role about making decisions: what to build, what to stop, what to prioritize, and how to move forward when the data is incomplete. The quality of those decisions compounds over time. A PM who makes consistently sound calls in ambiguous situations grows faster than one who ships more features but makes those calls poorly.

Decision quality matters so much because the cost of decisions scales with seniority. As a PM becomes more senior, the number of decisions they make decreases, but the impact of each one rises. A junior PM deciding which bug to fix this sprint carries low stakes. A principal PM deciding which market to enter, or which product to sunset, carries risks measured in months of engineering time and millions of dollars.

Every product team faces four risks:

- Value risk: will anyone want it?

- Usability risk: can people use it?

- Feasibility risk: can the team build it?

- Viability risk: does it work for the business?

A product manager's job is to reduce these four risks through the quality of decisions made before a single line of code is written.[1]

Pro Tip! Your job is not to guarantee success. It is to make the best possible decision with the information you have, then learn fast.

Reversible and irreversible decisions

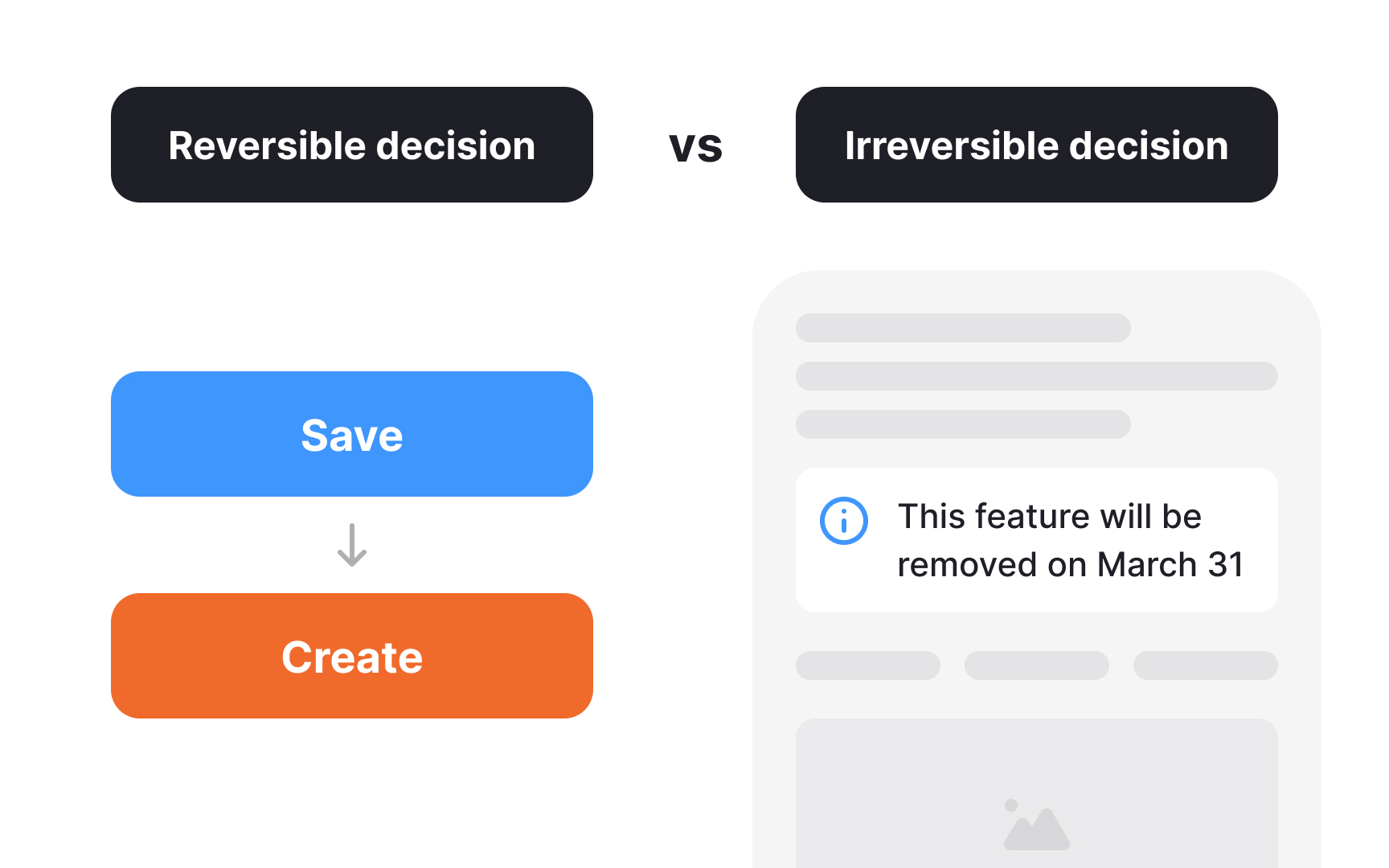

Not all decisions deserve the same amount of time and deliberation. Some decisions are consequential and nearly impossible to undo. Sunsetting a product line, re-platforming a core system, or making a public pricing commitment are decisions where getting it wrong is costly and hard to reverse. They require careful thinking, broad input, and real consultation before acting.

Most decisions are not like that. A feature flag, a copy change, or a new onboarding flow can be shipped, measured, and rolled back without lasting damage. Treating these reversible choices with the same weight as irreversible ones is how teams become slow, overly cautious, and unable to experiment enough. It confuses the appearance of rigor with actual quality.

The practical question to ask before any decision is: if this turns out to be wrong, what does it cost to undo? The higher the cost, the more care the decision deserves. The lower the cost, the faster it should be made. Most daily product decisions fall into the reversible category. Recognizing that, and acting accordingly, is one of the fastest ways to increase a team's speed without increasing its risk.[2]

Pro Tip! Before any decision, ask: what does it cost to undo this? That answer tells you how much time it deserves.

Make decisions before full certainty

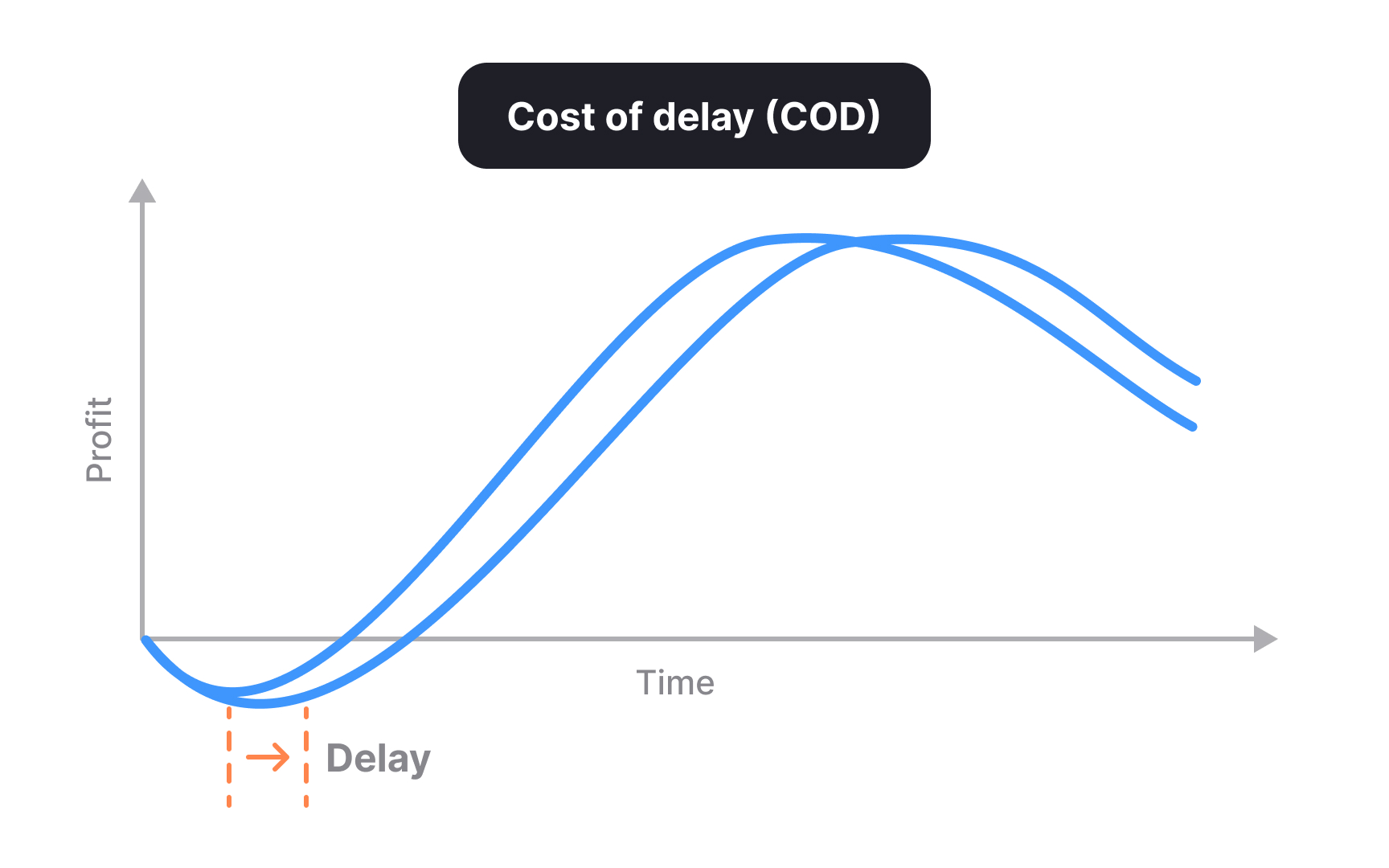

There is a real trade-off between accuracy and speed in decision-making. More time gathering information means more certainty, but it also means slower movement. Past a certain threshold, the extra confidence gained from additional research no longer justifies the cost of the delay. Product teams that wait for near-certainty before acting are usually making the wrong trade-off.

A useful rule of thumb is to make a decision once you are around 70% confident. This is not an excuse to act carelessly. It is a recognition that in competitive product environments, time to market carries a measurable cost. Every week a validated decision sits unacted on is a week of potential value that the team is not capturing.[3]

Cost of Delay puts a number on waiting. Every week a decision stalls, the team is losing value that it could have been capturing. That reframes the question from 'are we confident enough?' to 'what is this delay actually costing us?'[4]
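To make the idea concrete, here is a minimal Python sketch of a Cost of Delay estimate. It assumes the value of acting is a constant amount per week, which is a simplification. The function names and all figures are hypothetical, chosen only for illustration.

```python
def cost_of_delay(weekly_value: float, weeks_delayed: float) -> float:
    """Value forgone while a decision waits.

    Assumption: the decision is worth a constant `weekly_value` once acted on.
    """
    if weekly_value < 0 or weeks_delayed < 0:
        raise ValueError("weekly_value and weeks_delayed must be non-negative")
    return weekly_value * weeks_delayed


def worth_waiting(weekly_value: float, weeks_delayed: float,
                  expected_gain_from_waiting: float) -> bool:
    """True only if the expected benefit of more research exceeds the delay cost."""
    return expected_gain_from_waiting > cost_of_delay(weekly_value, weeks_delayed)


# Hypothetical figures: the change is estimated at 10,000 per week, and three
# more weeks of research might improve the result by about 20,000 in total.
delay_cost = cost_of_delay(10_000, 3)
print(delay_cost)                        # 30000
print(worth_waiting(10_000, 3, 20_000))  # False
```

In this made-up case, three more weeks of research would cost more than the research is expected to return, so the estimate argues for deciding now. Real value rarely accrues at a constant rate, so treat the result as a rough input rather than a verdict.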

Pro Tip! Slow decisions are not safe decisions. Waiting has a price. Know it before choosing to wait.

Shift focus from outputs to outcomes

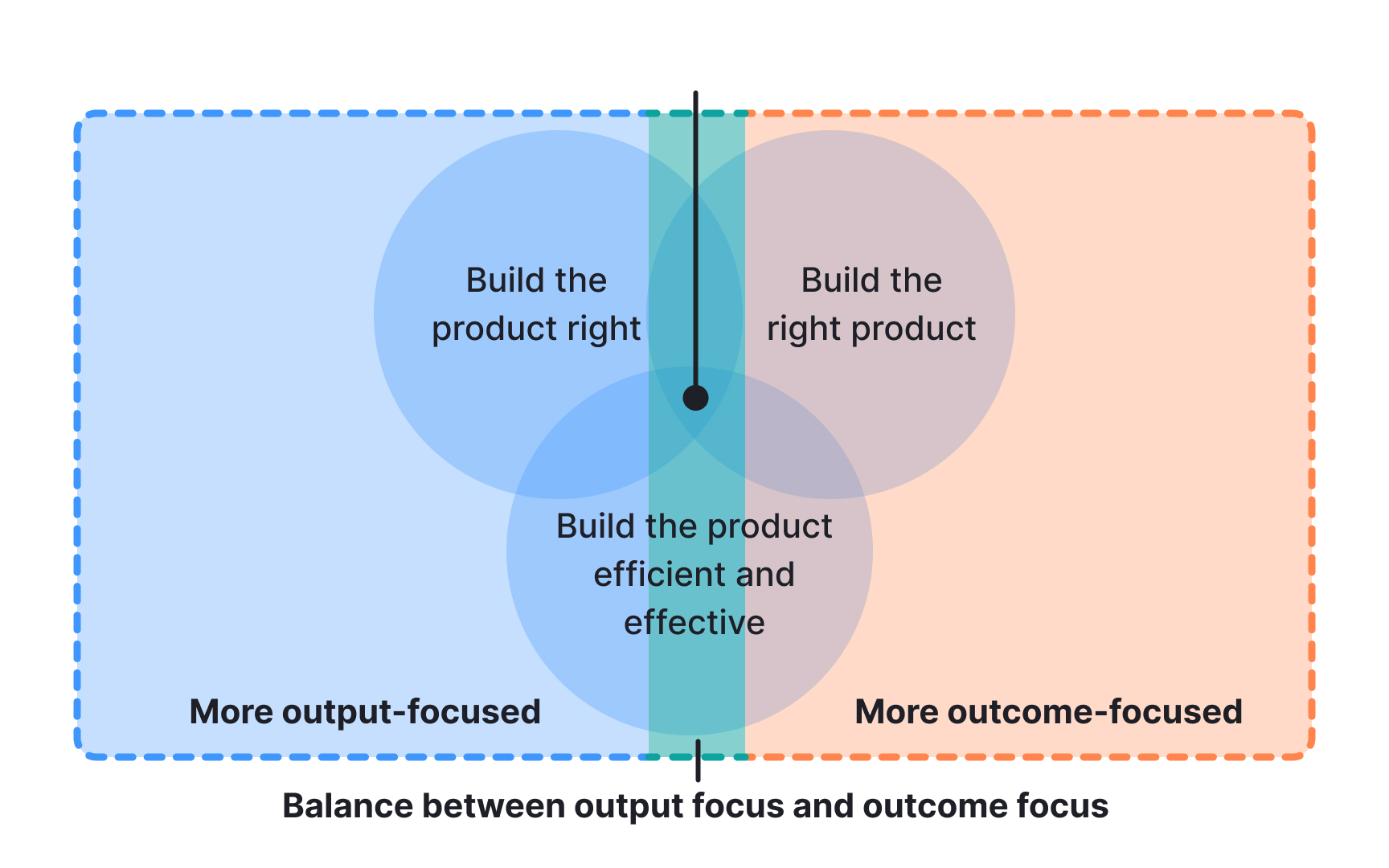

An output is something a team produces: a feature, a screen, a data export. An outcome is a change that creates real value: users stay longer, conversion improves, and support volume drops. Teams that focus on outputs measure success by how much they ship. Teams that focus on outcomes measure success by what changes as a result.

The gap between the two shows up in practice more often than most teams expect. A feature can be well-designed, delivered on time, and technically solid, yet produce no meaningful movement in the metric it was meant to address. When shipping becomes the goal, the question of whether the work is actually solving a problem gets quietly dropped.

Outcome-based thinking changes the starting point. Instead of asking "what are we building?", a PM working from outcomes asks "what behavior do we want to change, and what is the smallest thing we could test to see if we can change it?" That shift is the foundation of continuous discovery. It is also what separates teams that stay busy from teams that build things that matter.

Pro Tip! A feature is done when it changes behavior, not when the code ships.

Use prioritization frameworks as thinking tools, not answers

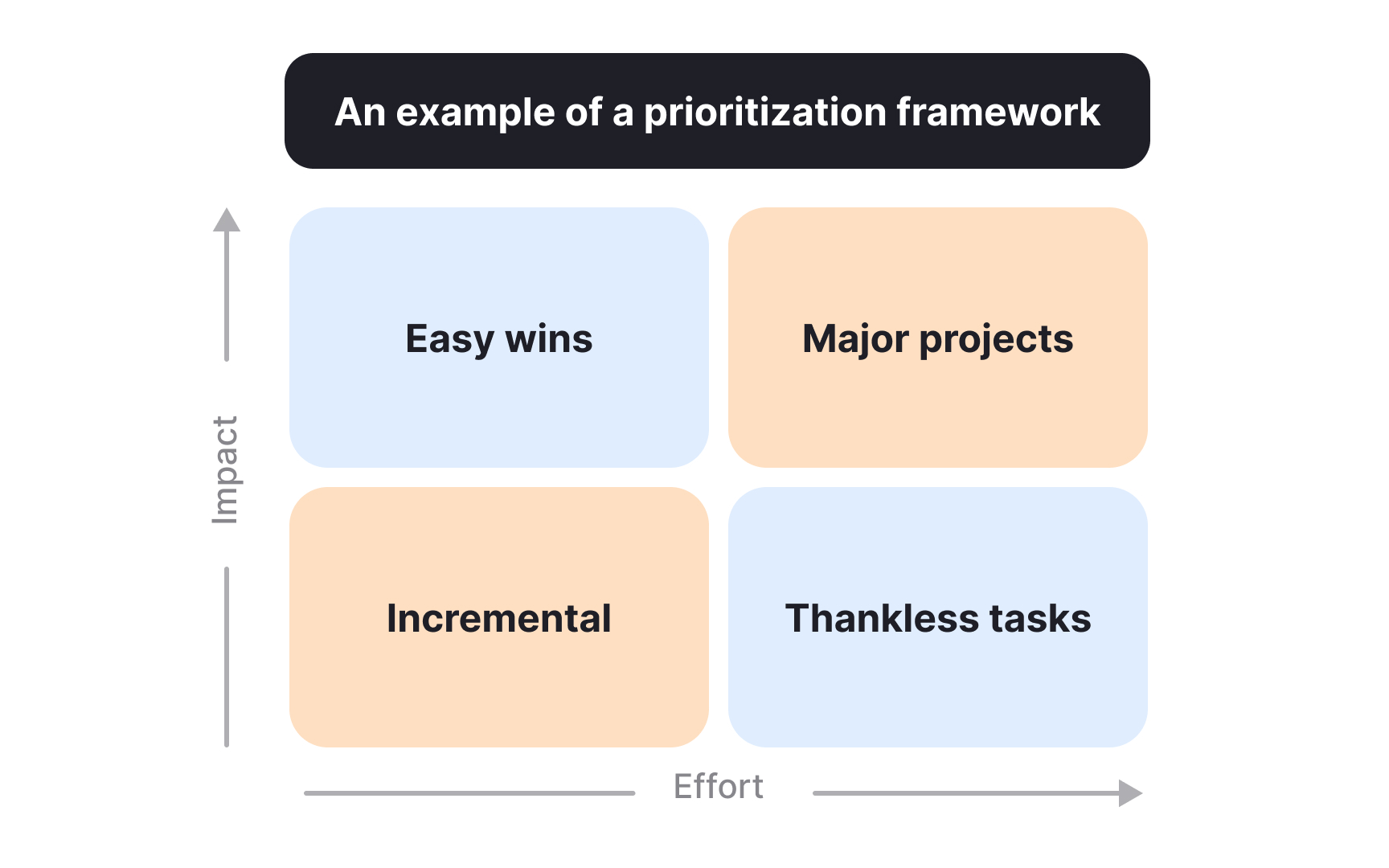

Prioritization frameworks are thinking tools, not decision machines. RICE, Kano, and MoSCoW each have a context where they add value. The problem begins when a PM applies the same framework to every decision regardless of fit, or when filling out a scoring template becomes a substitute for actually understanding the problem.

A common version of this trap is over-scoring. A team assigns numbers to reach, impact, confidence, and effort, combines them into a single score, and treats the output as a decision rather than one input among several. The scores feel precise, but they rest on estimates that are often barely more than guesses. Complexity that has not been resolved through clear thinking does not disappear when it gets a number attached to it.[1]
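A short Python sketch can show how fragile such a ranking is. It uses the commonly cited form of the RICE score, reach times impact times confidence, divided by effort. The `Idea` class, the idea names, and every number below are hypothetical.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Idea:
    name: str
    reach: float       # estimated users affected per quarter
    impact: float      # estimated impact per user on a team-chosen scale
    confidence: float  # 0.0 to 1.0, how much the team trusts its estimates
    effort: float      # estimated person-months


def rice_score(idea: Idea) -> float:
    """Commonly cited RICE formula: (reach * impact * confidence) / effort."""
    if idea.effort <= 0:
        raise ValueError("effort must be positive")
    return idea.reach * idea.impact * idea.confidence / idea.effort


# Hypothetical estimates for two competing ideas.
idea_a = Idea("A", reach=2000, impact=1.0, confidence=0.8, effort=4)
idea_b = Idea("B", reach=1500, impact=1.0, confidence=0.8, effort=3.5)

# With the original guesses, A ranks above B.
print(rice_score(idea_a) > rice_score(idea_b))  # True

# Nudge a single guess: the team is slightly less sure about A.
idea_a_revised = replace(idea_a, confidence=0.6)
print(rice_score(idea_a_revised) > rice_score(idea_b))  # False
```

Changing one soft estimate from 0.8 to 0.6 reverses the ranking. That is why the score works better as a prompt for discussion than as the decision itself.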

The real skill is knowing when to set a framework down. Process can create the illusion of rigor without producing it. A PM who follows every step of a scoring template but skips the harder question ("Do we actually understand this problem?") has optimized the process while bypassing the thinking. Principles travel further than any specific method. They work across contexts, adapt to new constraints, and stay useful even when the framework doesn't fit.

The test of a useful framework is simple: does it help you think more clearly about this specific decision? If yes, use it. If the team is spending more time managing the process of using it than actually thinking about the problem, it has stopped being useful.[5]

Know when to drop a framework and just decide

Knowing when a prioritization framework, like RICE or MoSCoW, is helping and when it is getting in the way is one of the harder skills to develop in product management. Less experienced PMs often reach for the same framework regardless of context because it is the tool they know. More experienced ones apply frameworks selectively, pick the one that fits the specific decision, and sometimes choose not to use one at all.

The clearest signal that a framework has stopped earning its place is when the team is spending more energy on the process of using it than on actually thinking about the problem. A scoring table that produces the same ranking the team already assumed going in is not generating insight. It is generating the appearance of rigor.

A useful test before committing to a framework: if you removed it from this decision, would the outcome change? If the answer is no, the framework was confirming a view already held, not forming one. If the answer is yes, it is doing real work. The goal is not to be a PM who uses frameworks well. The goal is to be a PM who makes good decisions. Frameworks are one tool among many. Knowing when to set them down is part of mastering them.

Recognize and escape the sunk cost trap

The sunk cost fallacy is the tendency to keep investing in something because of what has already been spent, even when current evidence points toward stopping. Time, money, and effort already committed cannot be recovered. Rationally, they should not factor into what comes next. In product teams, they often do.

It shows up as a feature that keeps getting resourced because months have already gone into it, despite signals that users do not want it. It appears on roadmaps where projects survive not because they are still the best use of capacity, but because stopping them feels like an admission of failure. It comes up in planning conversations whenever someone says, "We've already built so much of this."[6]

The reframe that breaks the trap is asking: if we were starting from zero today, with no prior investment, would we choose this? That question strips the weight of past commitment from the analysis and focuses the team on future value instead. Leaders who model this approach openly, treating honest reassessment as a sign of good judgment rather than a reversal, make it easier for others to raise the same concerns before more resources are lost.[7]
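The "starting from zero" question can be sketched as a small Python check. This is an illustrative simplification, not a method from the cited sources: it compares only expected future value with remaining cost, and the function name and figures are hypothetical.

```python
def should_continue(expected_future_value: float, remaining_cost: float,
                    sunk_cost: float = 0.0) -> bool:
    """Zero-based check: continue only if the value still to come
    exceeds the cost still to be paid.

    `sunk_cost` is accepted but deliberately unused, to make explicit
    that money already spent does not enter the comparison.
    """
    return expected_future_value > remaining_cost


# Hypothetical project: 500,000 already spent, 200,000 left to finish,
# and an expected future value of 120,000.
print(should_continue(120_000, 200_000, sunk_cost=500_000))  # False
print(should_continue(120_000, 200_000, sunk_cost=0))        # False
```

The answer is the same whether 500,000 or nothing has been spent so far, which is the point of the reframe. A fuller version would also compare against the best alternative use of the same capacity.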

Pro Tip! Past investment is not a reason to continue. Future value is.

Three tie-breakers for deadlocked product decisions

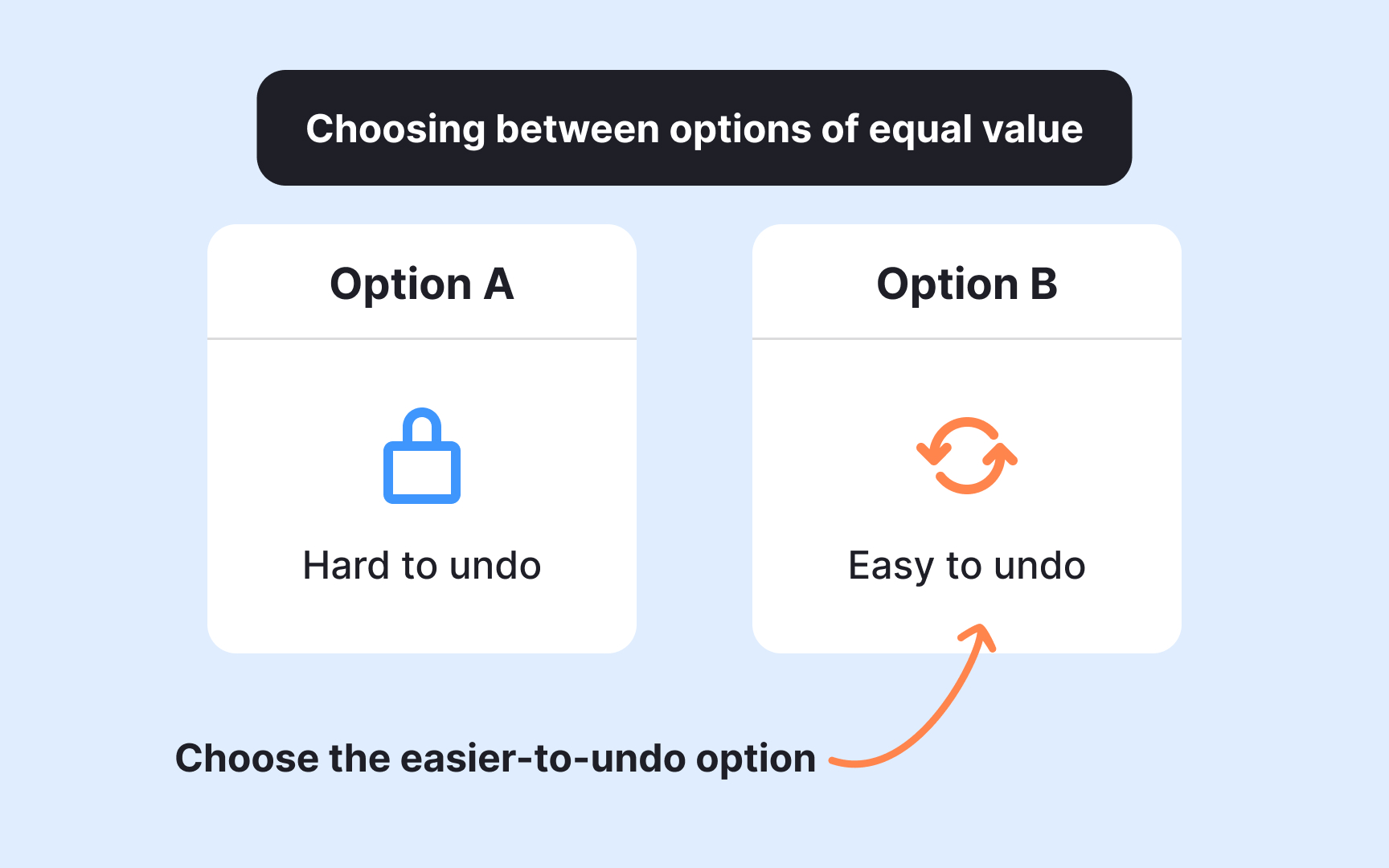

Many product decisions reach a point where two options look equally good. The data support both. The team is split. Seeking more information is tempting, but more information is not always available, and waiting carries its own cost.

Three tie-breakers tend to work well in this situation:

- Reversibility: when two options have similar expected value, choose the one that can be undone more easily. Preserving the ability to course-correct is itself a form of value.[8]

- Alignment and commitment: when more deliberation is unlikely to produce clarity, make the call and have the team commit to it fully. Consensus is not always the goal. Forward momentum often is.

- Strategic fit: when expected returns look equal, ask which option more directly strengthens what makes this product distinct. Numbers can tie. Strategic identity rarely does.

Applying these in sequence, rather than defaulting to the loudest voice or the most senior opinion in the room, keeps tie-breaking grounded in reasoning rather than politics.[9]

Pro Tip! When data is equal, reversibility wins. Pick the door you can walk back through.

References

- The Four Big Risks | Silicon Valley Product Group

- Reversible and Irreversible Decisions | Farnam Street

- The Sunk Cost Fallacy | The Decision Lab

- Sunk Cost Fallacy: Definition, Examples, How to Avoid [2025] | Asana