UX research involves real people sharing their time, behaviors, and sometimes sensitive personal information. That creates responsibilities beyond good methodology. Researchers need to protect participant safety, handle data carefully, and present findings accurately, even when results contradict a team's assumptions.

Bias is one of the most persistent threats to research integrity. Confirmation bias leads researchers to notice evidence that supports existing beliefs and overlook what does not. Implicit bias shapes who gets recruited and how questions get framed. Recency and primacy biases distort how findings get reported. These effects do not disappear with good intentions, and recognizing them requires deliberate checks at every stage of the process.

Ethics and bias are connected. A researcher who unconsciously favors certain participants, leading questions, or convenient interpretations is not just producing weaker research. They are also failing the people who trusted them with honest participation. Building ethical habits into planning, consent processes, and reporting is what separates rigorous work from research that looks credible but misleads.

Why ethics matter in user research

User research carries real responsibilities toward the people who take part. Unlike academic research, which typically passes through an ethics review board, UX research usually has no formal body overseeing ethical conduct. That means the burden of ethical practice falls directly on the researcher and the team behind the study.

Ethical research rests on 3 pillars:

- Respecting user privacy means collecting only the data you need and storing it securely.

- Obtaining informed consent means participants understand what they're agreeing to before the session begins, including how their data will be used.

- Minimizing bias means designing research that reflects reality rather than confirming what the team already believes.

These aren't just moral considerations. A researcher who shares participant data without consent, or a company that uses manipulative recruitment tactics, risks long-term reputational damage. In the digital age, ethical missteps leave traces that potential users, clients, and partners can find years later. Acting with integrity from the start protects both the people you research and the organization you represent.[1]

How to handle informed consent with participants

Informed consent is more than a signature on a form. It's the foundation of a trust-based relationship between researchers and participants. When users understand what a study is about, how their data will be used, and who will see the results, they tend to engage more openly and share more useful insights.

Start each session by explaining the study's goals, the format of the session, and what happens to the data afterward. A researcher might say: "We're looking at how people navigate our checkout flow. Your responses will be anonymized and shared only with our product team." This kind of transparency makes participants feel respected rather than observed.

That said, full disclosure isn't always possible before a session begins. If revealing the research goals upfront would change how participants behave or answer, researchers can ethically withhold some information temporarily. This is called partial disclosure. When this happens, the researcher must debrief participants immediately after the session, sharing everything that was held back and giving them the opportunity to withdraw their consent and have their data removed.

Partial disclosure is a tool, not a workaround. It should only be used when there's a clear, documented reason, and never as a way to avoid uncomfortable conversations with participants.

Conducting research with sensitive and vulnerable groups

Ethical research means paying attention not just to what users say, but to how they feel during the session. Some participants arrive nervous about being tested or recorded. Others may be reluctant to share personal details with a stranger. This discomfort becomes even more significant when the research involves minorities or vulnerable populations, including people who have experienced trauma, discrimination, or health challenges.

The first step is creating a safe environment before the session even begins. Briefly explain the format, remind participants they can skip any question, and make clear that there are no right or wrong answers. Small reassurances like these lower defenses and lead to more honest, useful responses.

Session design matters too. If your research touches on sensitive topics, such as health conditions, financial hardship, or personal relationships, limit the number of people in the room. A one-on-one session feels safer than speaking in front of a group or a panel of observers. When choosing a moderator, consider lived experience and cultural proximity. A participant discussing experiences of racial discrimination, for example, may open up more with a moderator who shares that background than with someone who doesn't.

Pro Tip! Avoid crowded locations. Hold the interview in a quiet, private space where participants can relax and not worry about being overheard.

Avoid indirect harm in user research

Not all research harm is obvious. A study can be technically safe, with no physical risk or sensitive data exposure, and still leave participants worse off than before. Indirect harm includes manipulating emotional states, nudging behavior without consent, or exposing people to distressing content under the guise of observation.

The Facebook emotional contagion study, run in 2012 and published in 2014, is one of the most cited examples. The company altered the news feeds of roughly 689,000 users for a week, showing some groups happier-than-average content and others sadder content, without their knowledge. Users who saw more negative content posted more negative words. Users who saw more positive content did the opposite. The study set out to test whether emotions spread through a social network, but the method crossed a clear line: participants weren't just observed, they were manipulated. Facebook's data policy at the time didn't mention the word "research" at all, meaning no one had consented to being part of a study.[2]

Before launching any study, run a quick harm check by asking 3 questions:

- Does the study change what participants see, feel, or do rather than just observing them?

- Could participants feel deceived when they learn the full picture?

- Would you be uncomfortable if the study design were made public?

If any answer is yes, the design needs revisiting. For smaller organizations without Facebook's legal resources, the reputational cost of getting this wrong is rarely recoverable.

Safeguard participant data

When participants agree to take part in research, they extend a form of trust. They believe their data will be handled carefully, shared only on the terms they agreed to, and protected from exposure. Honoring that trust is a core part of what makes research ethical.

Anonymization is one of the most practical ways to protect participants. If a user prefers to stay anonymous, replace their name with a pseudonym in all notes, transcripts, and reports. Photos of participants, their homes, or any identifiable context should only appear in deliverables if explicit permission was given. When in doubt, leave it out.
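The pseudonym step can be sketched in a few lines. This is a minimal illustration, assuming notes and transcripts are plain text and that the real-name-to-pseudonym mapping is stored separately with restricted access. The function names, the `P01`-style labels, and the sample names are hypothetical, not part of any standard tool.

```python
import re

def build_pseudonyms(full_names):
    """Assign a stable label (P01, P02, ...) to each participant."""
    mapping = {}
    for i, full_name in enumerate(full_names, start=1):
        label = f"P{i:02d}"
        mapping[full_name] = label
        # Also catch mentions that use only the first name.
        mapping[full_name.split()[0]] = label
    return mapping

def pseudonymize(text, mapping):
    """Replace each whole-word occurrence of a known name with its label."""
    # Longest names first, so a full name is replaced before its first name.
    for name in sorted(mapping, key=len, reverse=True):
        pattern = re.compile(rf"\b{re.escape(name)}\b", flags=re.IGNORECASE)
        text = pattern.sub(mapping[name], text)
    return text

mapping = build_pseudonyms(["Ana Lima", "Jordan Reyes"])
note = "Ana Lima said checkout was confusing. Jordan agreed with Ana."
clean_note = pseudonymize(note, mapping)
print(clean_note)
```

A replacement like this only catches the names it is given. Nicknames, misspellings, and indirect identifiers such as an employer or a street name still need a manual read-through before anything is shared.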

Be equally careful about what you share and with whom:

- Only share raw data, session recordings, and personal details with people who were part of the agreed scope

- Clarify access boundaries with your team before the study begins, and document them clearly

- Delete data that is no longer relevant to the project once the research is complete

- Never hold onto participant information longer than necessary, because the longer it sits, the higher the risk of a breach

If sensitive data were stolen and made public, the legal and reputational consequences for your organization could be severe, and participants would bear the personal cost.
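One way to make the deletion habit routine is a small retention check that lists which records are due for removal. The sketch below assumes each record carries the date its study ended and that the team has agreed on a retention window. The 90-day default, the field names, and the record IDs are illustrative assumptions, not a recommended policy.

```python
from datetime import date, timedelta

def overdue_for_deletion(records, today, retention_days=90):
    """Return IDs of records whose study ended more than retention_days ago."""
    cutoff = today - timedelta(days=retention_days)
    return [r["id"] for r in records if r["study_ended"] < cutoff]

# Hypothetical inventory of stored session data.
records = [
    {"id": "rec-001", "study_ended": date(2024, 1, 10)},
    {"id": "rec-002", "study_ended": date(2024, 5, 20)},
]

overdue = overdue_for_deletion(records, today=date(2024, 6, 1))
print(overdue)
```

The check only reports what is overdue. Actually deleting recordings, transcripts, and backups remains a deliberate step that someone on the team should own.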

Protect user privacy in big data research

Big data refers to large volumes of digital information collected from sources like customer databases, emails, medical records, social networks, clickstream logs, and connected devices. Companies use this data to identify trends, train machine learning models, and improve their products. The problem is that this data is often shared between organizations without users being notified, and most people have little awareness of how their information travels.[3]

Privacy policies are meant to address this, but they rarely do in practice: by one estimate, 65% of users accept terms and conditions without reading them. This means that even when researchers follow legal requirements, participants may never have meaningfully consented to being part of a study. If complaints arise, legal compliance alone won't protect your reputation.[4]

Two risks are easy to underestimate in big data research:

- Identity exposure: combining data points like birth date, gender, location, and digital activity can be enough to identify someone, even when no names are used.

- Misinterpretation: data without context leads to wrong conclusions. A photo posted on Instagram, for example, could represent genuine enthusiasm, irony, social performance, or simple observation. Drawing behavioral conclusions from it without understanding the context behind it is a common and consequential mistake.
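The identity exposure risk can be checked roughly before a dataset is shared. The sketch below borrows the idea behind k-anonymity: count how many records share each combination of indirect identifiers and flag combinations that are too rare. The column names, the sample records, and the threshold `k=3` are assumptions chosen for illustration. A real dataset would need its own list of identifying fields and its own threshold.

```python
from collections import Counter

# Assumed column names for fields that can identify someone in combination.
QUASI_IDENTIFIERS = ("birth_year", "gender", "city")

def risky_combinations(records, k=3):
    """Return identifier combinations shared by fewer than k records."""
    counts = Counter(tuple(r[q] for q in QUASI_IDENTIFIERS) for r in records)
    return {combo: n for combo, n in counts.items() if n < k}

records = [
    {"birth_year": 1990, "gender": "F", "city": "Leeds"},
    {"birth_year": 1990, "gender": "F", "city": "Leeds"},
    {"birth_year": 1990, "gender": "F", "city": "Leeds"},
    {"birth_year": 1974, "gender": "M", "city": "Leeds"},
]

flagged = risky_combinations(records, k=3)
print(flagged)
```

Here the single record for a man born in 1974 stands alone, so that person could be singled out even though no name appears. Typical responses are to coarsen the fields (age bands instead of birth year, region instead of city) or to drop the record from anything that gets shared.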

Pro Tip! Develop a code of conduct for your company to guide how teams use big data.

How biases affect user research outcomes

Researchers are human, and all humans carry biases: tendencies, inclinations, or prejudices toward or against something or someone that exist below the level of conscious thought. When people encounter unfamiliar situations without enough information, their brains fill the gaps using existing beliefs and assumptions. This mental shortcut helps process information faster, but it also opens the door to stereotypes, snap judgments, and false conclusions.[5]

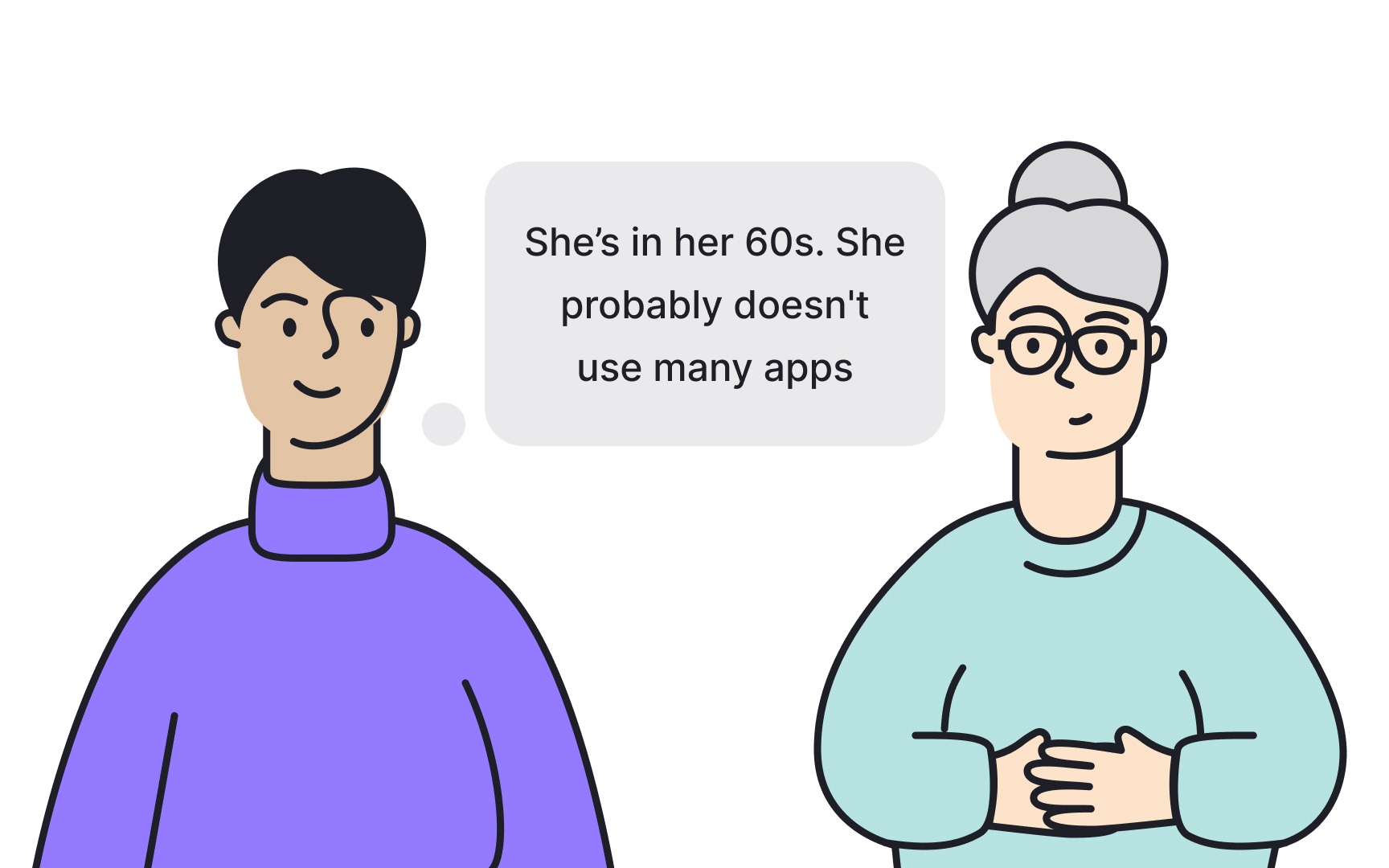

For example, a researcher who assumes that older users struggle with technology may unconsciously simplify their questions for that group, skipping complexity that would have revealed important insights. The bias shapes the study before a single session begins.

In user research, unchecked bias distorts findings at every stage, from who gets recruited to how questions are phrased to how responses are interpreted. One of the most effective ways to counter this is to separate assumptions from questions. Instead of asking "Did you find this easy to use?", which nudges participants toward a positive answer, a researcher might ask "Walk me through what happened when you tried to complete this task." Neutral, open-ended questions give participants room to respond honestly rather than confirm what the researcher hopes to hear.

Confirmation bias in UX research

Confirmation bias happens when researchers focus on evidence that supports their existing assumptions and discount feedback that contradicts them. It's one of the most common biases in UX research because researchers are often close to the product they're studying.

Consider a team that believes their microcopy is clear and professional. When users report that the language feels too technical and hard to follow, a researcher affected by confirmation bias may dismiss that feedback as an outlier rather than a signal. The result is a product that reflects the team's perspective rather than the user's reality.

To avoid confirmation bias in research:

- Ask open-ended questions that give participants room to respond in their own words, rather than confirming or denying a specific assumption

- Avoid questions that embed your hypothesis, such as "Was this easy to use?" which nudges participants toward agreement

- Practice active listening by noting what users say rather than filtering responses through what you hoped to hear

- Include more participants in the study to test whether patterns hold across different people, not just those who agree with your assumptions

False consensus bias in UX research

False consensus bias is the assumption that most people think, feel, or behave the way you do. People who hold this bias tend to see those with different perspectives as outliers rather than as valid data points. In UX research, this can be particularly damaging because design decisions get made based on the researcher's worldview rather than the user's.

A researcher who loves dense, information-rich interfaces may assume most users do too. A product team convinced their navigation pattern feels intuitive may skip usability testing altogether. In both cases, false consensus bias replaces user evidence with personal assumption, and the product pays the price in redesigns, poor adoption, and wasted development cycles.

The most effective way to counter this bias is to treat your assumptions as hypotheses, not facts. Before committing to a design direction, write down what you believe users want and then test those beliefs directly with real or potential users. Ask participants to describe their needs and frustrations in their own words rather than reacting to your ideas. In the early stages of product development especially, this validation step can prevent your team from building something that makes perfect sense internally but solves a problem no one actually has.

Recency bias in UX research

Memory is selective. When people recall an experience, the most recent moments tend to feel the most significant, even when earlier moments were equally or more important. In UX research, this pattern is called recency bias, and it shows up when researchers give more weight to what participants say or do toward the end of a session than to what happened at the beginning.

In practice, this means a participant who struggled with navigation for the first 10 minutes but completed the final task smoothly may leave the researcher with an overly positive impression. The early friction gets forgotten. The smooth ending gets remembered. If this pattern repeats across multiple sessions, the research report ends up reflecting the final few minutes of each conversation rather than the full picture.

A few habits can keep recency bias in check:

- Taking structured notes throughout the session, rather than relying on memory afterward, ensures that early observations are captured with the same detail as later ones.

- Recording sessions with participant consent gives researchers a way to go back and verify their impressions against what actually happened.

- Reviewing notes immediately after each session, before the next one begins, helps keep one session's ending from coloring how the next is interpreted.

Pro Tip! You can also reduce recency bias by having two UX practitioners in the session. One guides the conversation and asks questions while the other takes notes.

Primacy bias in UX research

When people encounter something new and unfamiliar, their brains pay closer attention at the start. First impressions get encoded more deeply, which makes them easier to recall later. In UX research, this tendency is called primacy bias, and it shows up when researchers remember and give more weight to the first participant they spoke with than to those who came after.

Primacy bias is subtle because it doesn't just affect memory. It also shapes how researchers listen to later participants. If the first person in a study described the navigation as confusing, a researcher affected by primacy bias may unconsciously listen for confusion in every session that follows, treating that early response as the benchmark rather than one data point among many.

This is what distinguishes primacy bias from recency bias. Where recency bias pulls attention toward the end of a session, primacy bias anchors it to the beginning of the study. Both distort the full picture in different directions.

Structured note-taking throughout every session, not just the first, keeps each participant's responses equally documented. Reviewing all session notes together before drawing conclusions, rather than relying on what comes to mind first, ensures that early participants don't carry more weight than those who came later.

Pro Tip! Conducting user research sessions can be exhausting. To prevent bias, split sessions over several days so you can rest and remain focused when interviewing people.

Implicit bias in UX research

Implicit bias refers to attitudes or stereotypes we hold about certain groups of people without being aware of them. These associations form around characteristics like race, gender, age, social status, or sexual orientation, and they influence behavior in ways that feel completely natural to the person acting on them.

In UX research, implicit bias shapes how researchers interact with participants before a single question is asked. A researcher who assumes older participants have hearing or cognitive difficulties may speak more slowly or more loudly during the session, making those participants feel patronized rather than respected. A researcher who associates lower social status with limited technical literacy may simplify explanations that didn't need simplifying. In both cases, the participant picks up on the treatment, feels judged, and becomes less likely to respond openly.

Before each session, write down every assumption you hold about the participant group and challenge each one directly. Ask yourself where the assumption comes from and whether you have any real evidence for it. This exercise rarely takes more than a few minutes, but it creates a moment of conscious reflection that implicit bias otherwise bypasses. The goal is to walk into every session with the same level of curiosity you'd bring to a group you know nothing about.[6]

Sunk cost fallacy in UX research

The sunk cost fallacy is the tendency to keep investing in something because of what has already been spent on it, even when the evidence suggests stopping would be the smarter choice. The logic feels intuitive: walking away means the time, money, or effort already committed was wasted. But continuing in the wrong direction only adds to the cost.[7]

In UX research, this bias often surfaces quietly. A researcher who has spent weeks recruiting participants and designing a study protocol may push forward even when early sessions reveal that the research question was poorly framed. A designer emotionally attached to a concept may commission more research hoping the findings will eventually justify the direction, rather than accepting that the concept needs rethinking. In both cases, the driver isn't evidence. It's the reluctance to account for what was already spent.

Stakeholder pressure makes this harder. Telling a team that 3 weeks of research needs to be redirected is uncomfortable, which is why the guardrails need to be in place before the study begins. Define your research goals clearly upfront and set explicit checkpoints, moments in the process where you assess whether the study is still answering the right question. If a checkpoint reveals you've drifted, treat it as useful information rather than a failure.

Represent user research findings accurately

The way findings are presented can introduce as much bias as the way research is conducted. A researcher who selects only the quotes that support a preferred direction, or softens findings to avoid disappointing stakeholders, is letting bias shape the output even if the sessions themselves were run cleanly.

Honesty in reporting means presenting what the data actually shows, including findings the team didn't want to hear. If participants consistently struggled with a feature the design team spent months building, that finding belongs in the report with the same prominence as positive results. Selective reporting protects short-term comfort but leads to long-term product decisions built on incomplete evidence.

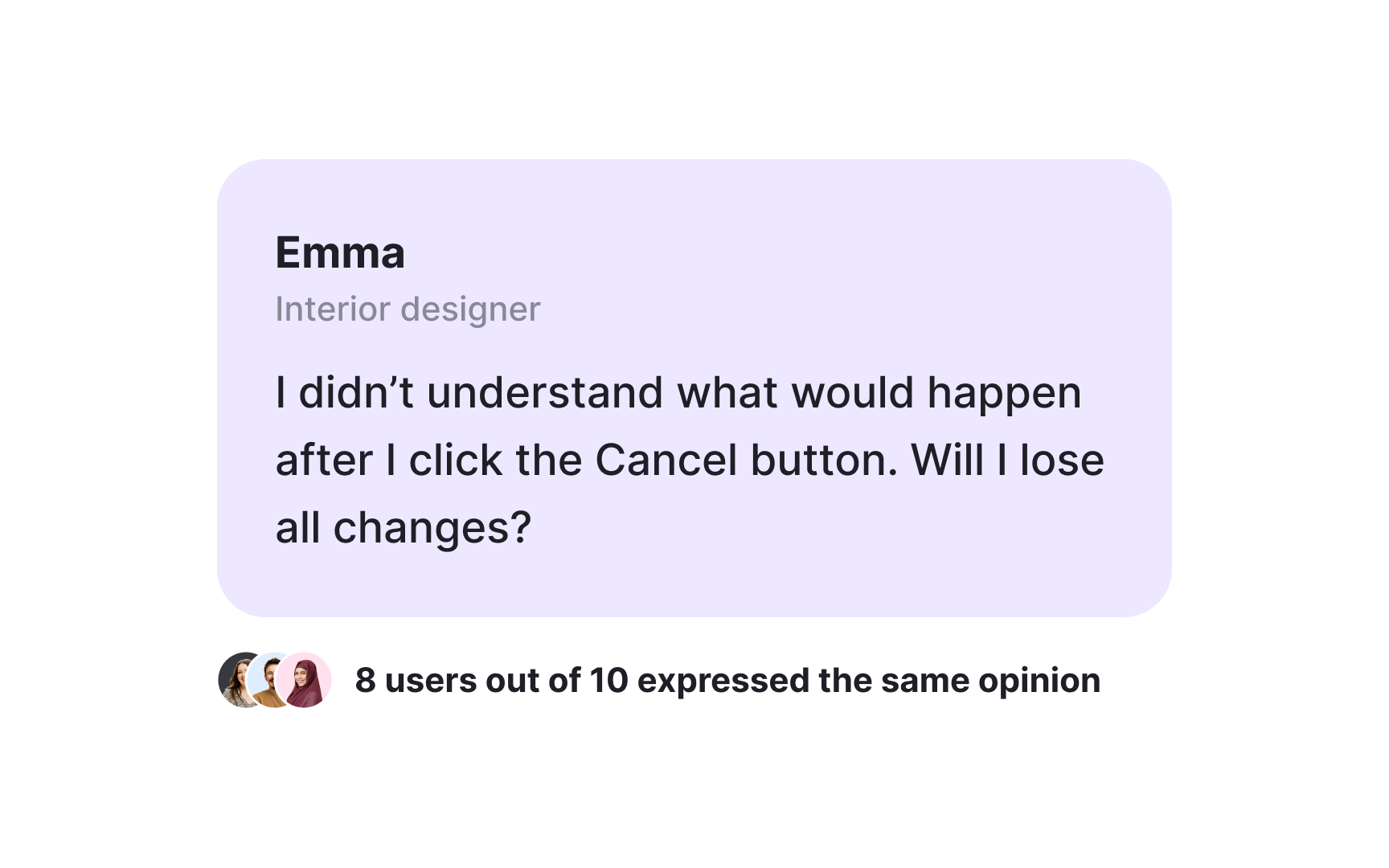

Direct quotes are one of the most effective tools for communicating findings clearly. A participant's own words carry an immediacy that summarized data often lacks. But quotes only work when they are representative. A single quote from one participant is an individual opinion. The same sentiment expressed by 8 out of 12 participants is a pattern worth acting on. When presenting quotes, always pair them with the number of participants who shared that view, so stakeholders can assess the weight of the finding themselves rather than taking the researcher's word for it.
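Pairing quotes with counts is easy to automate once quotes have been tagged by theme. The following is a minimal sketch, assuming each quote is stored with a participant ID and a theme label assigned during analysis. The data layout, the theme names, and the function name `theme_support` are hypothetical.

```python
from collections import defaultdict

def theme_support(tagged_quotes, total_participants):
    """Count distinct participants per theme and format it as 'n of N'."""
    participants_by_theme = defaultdict(set)
    for participant_id, theme, _quote in tagged_quotes:
        # A set ensures one talkative participant is counted only once.
        participants_by_theme[theme].add(participant_id)
    return {
        theme: f"{len(ids)} of {total_participants} participants"
        for theme, ids in participants_by_theme.items()
    }

tagged_quotes = [
    ("P01", "navigation confusing", "I couldn't find the cart."),
    ("P01", "navigation confusing", "The menu kept moving."),
    ("P02", "navigation confusing", "Where is search?"),
    ("P03", "likes checkout", "Paying was quick."),
]

support = theme_support(tagged_quotes, total_participants=3)
for theme, label in support.items():
    print(f"{theme}: {label}")
```

Counting distinct participants rather than quotes matters here. P01 contributes two quotes about navigation, but the theme is still supported by 2 of 3 participants, not 3 of 3.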

References

- Conducting Ethical User Research | The Interaction Design Foundation

- Everything We Know About Facebook’s Secret Mood-Manipulation Experiment | The Atlantic

- What is Big Data and Why is it Important? | SearchDataManagement

- Bias in UX research | Medium

- 10 cognitive biases to avoid in User Research (and how to avoid them) | Medium

- The Sunk Cost Fallacy - The Decision Lab | The Decision Lab