The questions a researcher asks determine the quality of what they learn. A well-framed question opens up genuine insight. A poorly framed one leads participants toward expected answers, produces vague responses, or confirms what the team already believes. Research question quality shapes the entire study.

There are two distinct types of questions to get right. Research questions define what the team is trying to learn: what knowledge gaps exist, what decisions the research needs to inform, and what assumptions are worth testing. Interview or survey questions are what participants actually see or hear, and they require different care. They need to be open-ended, specific to real experiences rather than hypothetical behavior, and free of framing that nudges toward a particular answer.

This lesson covers the craft of writing both types well: how to brainstorm research questions collaboratively, how to sequence interview questions so participants ease into deeper topics, how to follow up without leading, and how to avoid common question patterns that produce little of value.

Define the theme of your research

Every interview study starts with a simple question: what do we actually want to find out? Before you write a single interview question, you need to identify the broad theme of your research. The theme isn't your final question list. It's the lens through which you'll focus the entire study.

Think of it this way. A theme like "how do users shop online" is broad enough to guide your direction but specific enough to rule out unrelated territory. From there, you can narrow it down into sharper, more actionable research questions that will drive your interview guide.

When brainstorming your theme, bring in multiple stakeholders and team members early. A product manager might care about purchase decisions, while a designer might be focused on trust signals. Getting everyone in the room makes it far less likely that you miss a perspective that matters.[1]

Pro Tip! A well-defined theme also works as a filter, both during interviews and later in analysis. When a participant says something interesting but off-topic, your theme helps you decide whether to follow that thread or stay on course.

Brainstorm interview questions collaboratively

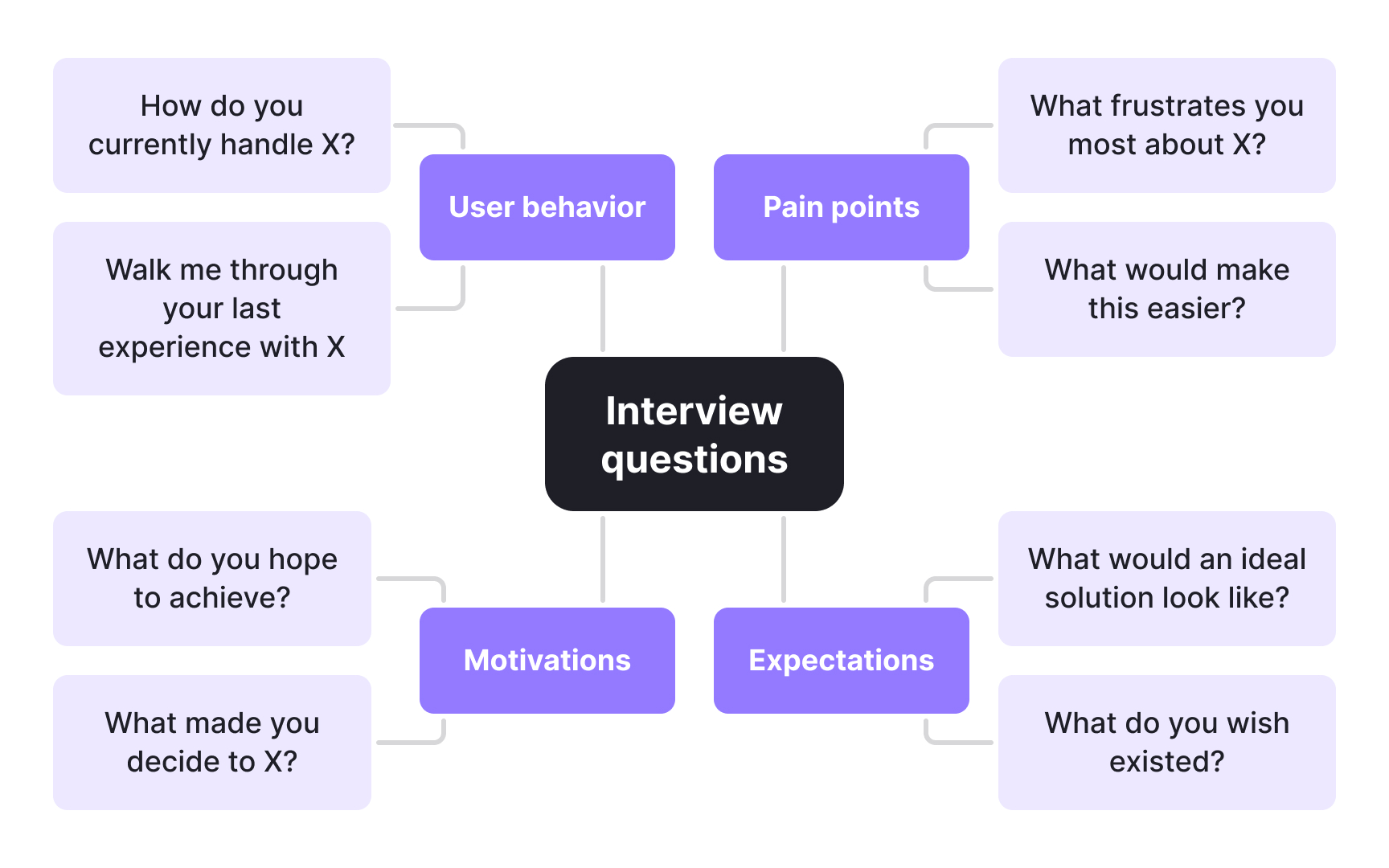

Once you're clear on your research questions, it's time to brainstorm interview questions. Start by writing down everything that comes to mind without filtering. You can always trim the list later by removing questions that don't serve your study goals. Use whatever format helps you think: mind maps, digital whiteboards, or a simple list all work well. The goal at this stage is breadth, not perfection.

Don't brainstorm alone. Bringing in colleagues or other stakeholders helps you spot gaps, challenge assumptions, and surface angles you might have missed on your own. A collaborative session often produces a more balanced set of questions that reflects the full scope of what you need to learn, not just one researcher's perspective.

Pro Tip! When in doubt, keep a question. It's easier to cut during review than to remember a useful question you didn't write down.

Start interviews with warm-up questions

Interviews yield the best results when they build momentum gradually. Jumping straight into complex questions can put participants on the spot before they feel comfortable, and a guarded participant rarely gives you the honest, detailed responses you need. Warm-up questions solve this by easing people into the conversation before the real work begins.

Good warm-up questions share a few qualities. They are easy to answer, free of assumptions, and closely related to the broader topic without being too specific. The goal isn't to gather critical data just yet. It's to establish rapport and signal to participants that this is a conversation, not an interrogation.

For example, if you are studying how users make purchases on an e-commerce app, you might open with questions like:

- How often do you shop on e-commerce websites?

- Which e-commerce apps do you use regularly?

- When was the last time you purchased something through an app?

These questions are familiar enough that participants answer confidently, which loosens them up for the deeper questions that follow. A participant who has already been talking comfortably for a few minutes is far more likely to open up when you ask something more probing, like why they abandoned their last cart or what made them distrust a seller.

Pro Tip! Keep warm-up questions open-ended wherever possible so the participant is already in storytelling mode before the core questions begin.

Ask open-ended questions in user interviews

The format of your questions shapes the quality of your data. Closed questions, those that can be answered with "yes," "no," or a single word, cut conversations short before participants have a chance to share what actually matters. Open-ended questions do the opposite. They invite participants to explain, reflect, and tell stories, which is where the real insights live.

Open-ended questions typically start with "who," "what," "when," "where," "why," or "how." These prompts signal to participants that there is no right or wrong answer and that you want to hear their experience in full. A question like "What feature do you find most useful and why?" opens the door to unexpected motivations, workarounds, and frustrations that a yes/no question would never surface.

Closed questions are not useless. They work well for gathering quick clarifications or specific details during follow-up. The problem arises when an interview relies on them too heavily.

Consider the difference: "Do you like our product?" tells you very little. "What has your experience with our product been like?" gives participants room to surface the details that matter most.

Ask about specific events, not general habits in user interviews

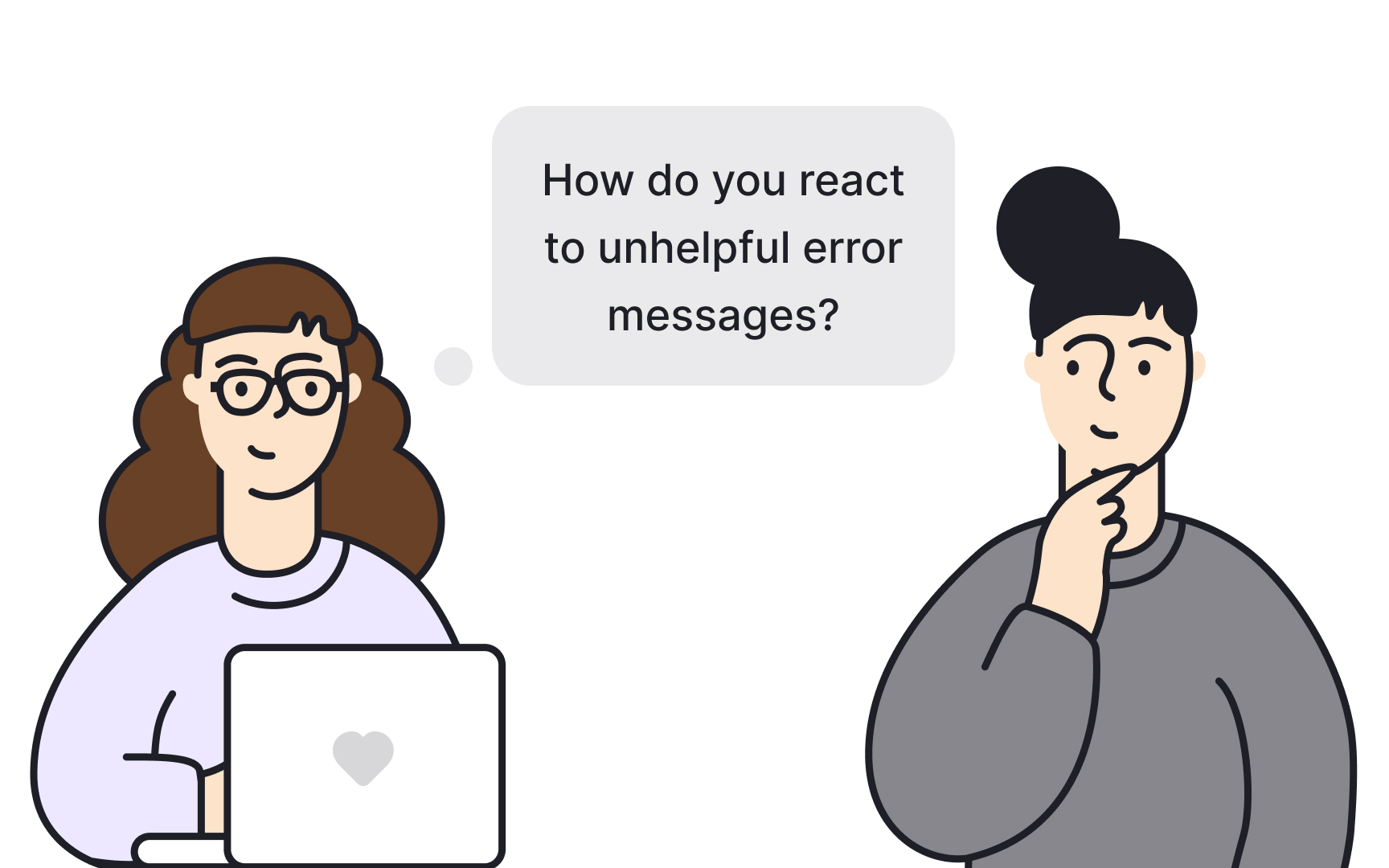

Open-ended questions get participants talking, but the specificity of your questions determines how useful those answers actually are. Ask users a broad question like "How do you react to unhelpful error messages?" and you will likely get a broad answer. Participants will describe what they think they do, or what they feel they should do, rather than what they actually did. That gap between perceived and real behavior is where research goes wrong.

The reason is simple: human memory is imperfect. Participants struggle to summarize patterns across many experiences, especially under the mild pressure of an interview. They tend to forget details, smooth over friction, and present a tidier version of their behavior than reality supports.

The fix is to anchor your questions to specific, concrete events. Instead of asking users how they generally behave, ask them to walk you through the last time something happened. "What did you do the last time you came across an unhelpful 404 page?" forces participants to retrieve a real memory, with real context, real emotions, and real decisions attached to it. That specificity surfaces details that no general question could.

This technique works because recalling a particular incident activates far more detail than trying to summarize a habit. Phrases like "Tell me about the last time you…" or "Walk me through what happened when…" give participants a concrete starting point and reduce the mental effort of reconstructing an experience from scratch.[2]

Probe deeper with follow-up questions

The first answer a participant gives you is rarely the most useful one. It is usually the most accessible one, the thought that surfaces quickest under mild social pressure. First answers tend to be polished, abbreviated, or shaped by what participants think you want to hear. The real insight often sits one or two layers deeper.

Follow-up questions are how you get there. Simple probes like "Why did that happen?", "What do you mean by that?" or "Can you walk me through an example?" signal to participants that you want more than a summary. They encourage participants to slow down, reflect, and share the reasoning and context behind their initial response.

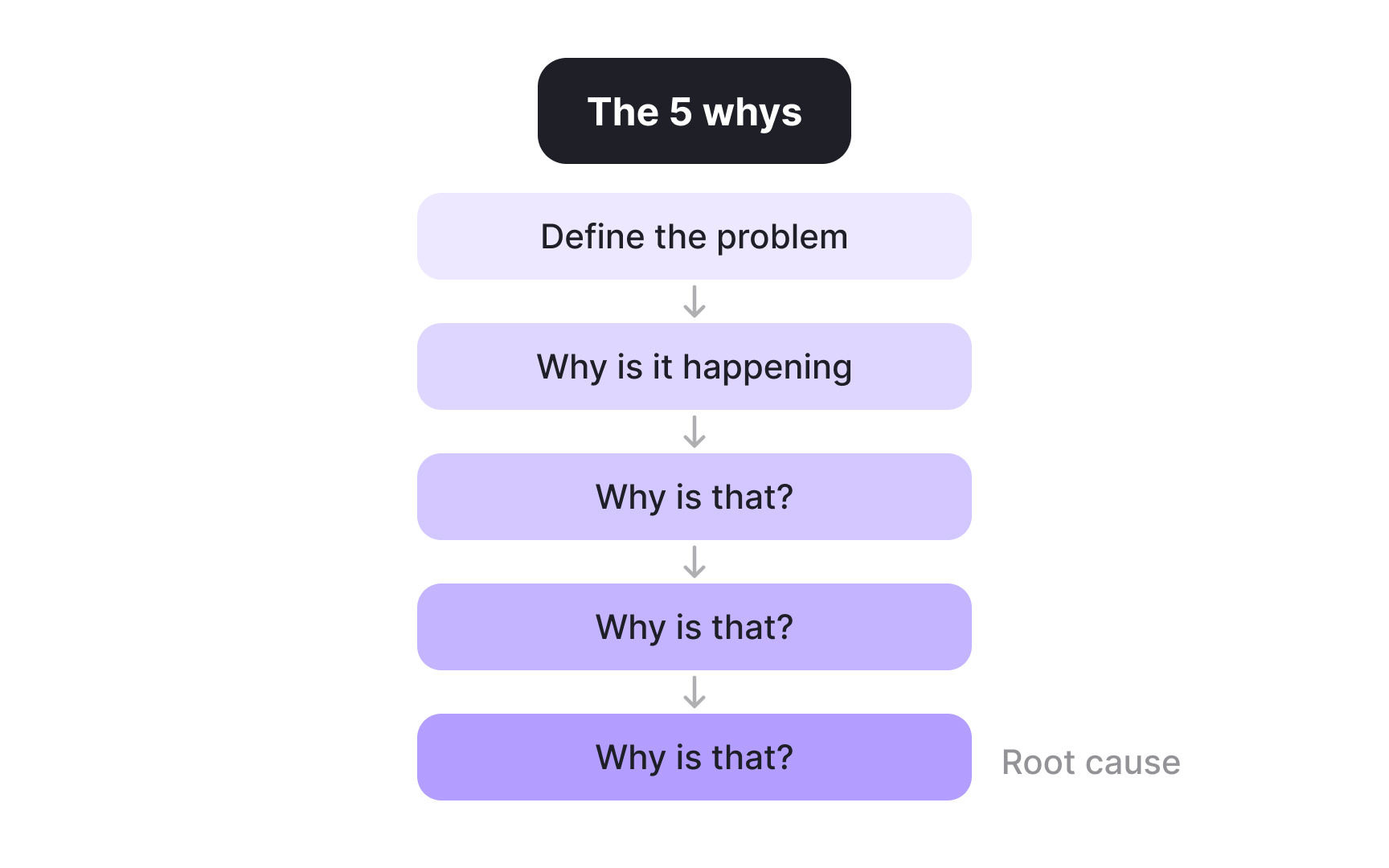

One structured approach to this is the Five Whys technique, originally developed at Toyota and widely used in UX research. The idea is to follow each answer with another "why" question, repeating the process up to five times until you reach the root cause of a behavior or attitude. For example, if a participant says they abandoned a checkout flow, asking "why" repeatedly might reveal not just frustration with the form, but a deeper distrust of how the site handles payment data. That is the kind of insight that changes product decisions.

Five is a guideline, not a rule. Some threads resolve in three questions. Others need more. The goal is to keep going until the answers stop revealing something new.

Pro Tip! If a participant gives a vague answer, resist the urge to interpret it yourself. Ask "What do you mean by that?" instead. Paraphrasing their answer back as a question is another way to prompt elaboration without leading them anywhere.

Avoid leading questions in user interviews

Getting honest answers from participants starts before the interview does. It starts with how you write your questions. Leading questions embed an assumption, an opinion, or an expected answer directly into the phrasing, which makes it hard for participants to respond with anything else. Even when they disagree, many will go along with what the question implies rather than push back against the person running the session.

A question is leading when it contains your own judgment:

- "What about this UI is beautiful?" assumes the UI is beautiful and steers participants toward confirming that. Instead, ask "How would you describe this UI?"

- "How frustrated did this flow make you feel?" primes participants to report frustration even if they felt something more neutral. Instead, ask "How did this flow feel to use?"

These neutral versions leave participants free to land wherever their experience actually takes them, including places you did not anticipate.

This matters because leading questions do not just produce inaccurate data. They can actively mislead design decisions. A team that consistently hears what they want to hear will build what they already believe in, rather than what users actually need.

Open interviews with user introduction questions

Demographic data tells you who your participants are on paper. It does not tell you how they live, what they care about, or how their daily context might shape their relationship with your product. User introduction questions fill that gap. They are the early questions in an interview that help you build a fuller picture of the person in front of you before the core research begins.

These questions serve two purposes at once:

- They give you contextual background that makes the rest of the interview more meaningful. A participant's lifestyle, routines, and habits often explain behaviors that would otherwise look random in the data.

- They ease participants into the conversation. Answering questions about their own life is comfortable and familiar, which helps them open up before the more focused questions arrive.

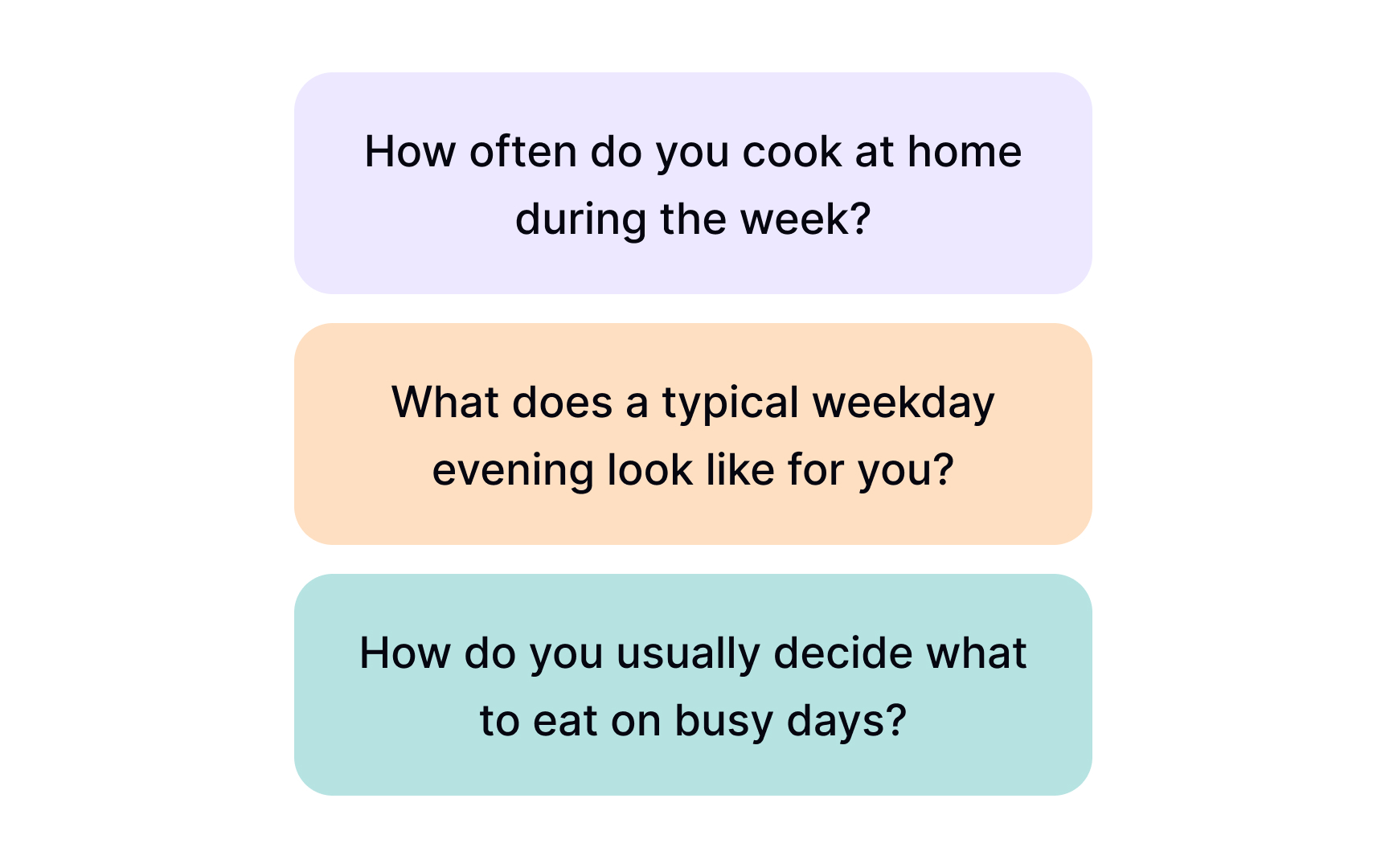

The right introduction questions depend entirely on your research topic. For a food delivery app, you might ask about cooking habits and how participants typically organize their week. For an online dating app, questions about how they spend free time, how they currently meet people, and what their social life looks like would give you the context you need. The goal is to choose questions that are adjacent to your research area without tipping directly into it too early.[3]

Pro Tip! Keep these questions open-ended and conversational. They are not a fact-checking exercise but an invitation for participants to describe their world in their own words.

Ask topic-specific questions to uncover user needs

Once the conversation is flowing, topic-specific questions move the interview into its most valuable territory. These are the questions directly tied to your research goals. They are designed to surface how users currently behave, what gets in their way, and how much those friction points actually matter to them in practice.

Well-crafted topic-specific questions go beyond surface complaints. They help you understand the full context of a user's experience: what they are trying to accomplish, how they go about it today, where the process breaks down, and what a better solution would mean for their daily life. This combination of behavioral and motivational insight is what turns interview data into decisions teams can act on.

The key is to keep these questions open-ended and grounded in real experience rather than hypotheticals. Questions anchored to real events give participants a concrete starting point and reduce the risk of receiving generalized answers that are hard to interpret.

For example, if you are researching a task management tool, your topic-specific questions might look like:

- How do you currently keep track of tasks across your workday?

- Walk me through the last time a task slipped through the cracks. What happened?

- What does your process look like when you're juggling multiple deadlines at once?

- When a task slips or a deadline is missed, how does that affect the rest of your work?

- What would a better experience look like for you?

Use concept testing questions to validate product value

Before investing heavily in development, most teams want to know whether their product concept actually resonates with the people it is designed for. Concept testing questions are how you find out. Asked after a brief demo or early prototype walkthrough, these questions reveal how users perceive the value of what you are building, where they see potential, and what gives them pause.

The goal is not to get a thumbs up or down. It is to understand the reasoning behind participants' reactions. A participant who says they would not use a product is far more valuable when they explain why. That explanation might reveal a gap in positioning, a missing feature, or a problem your product does not actually solve for them. Equally, a participant who expresses enthusiasm can tell you exactly what clicked and why, giving you language and insight you can carry into product decisions.

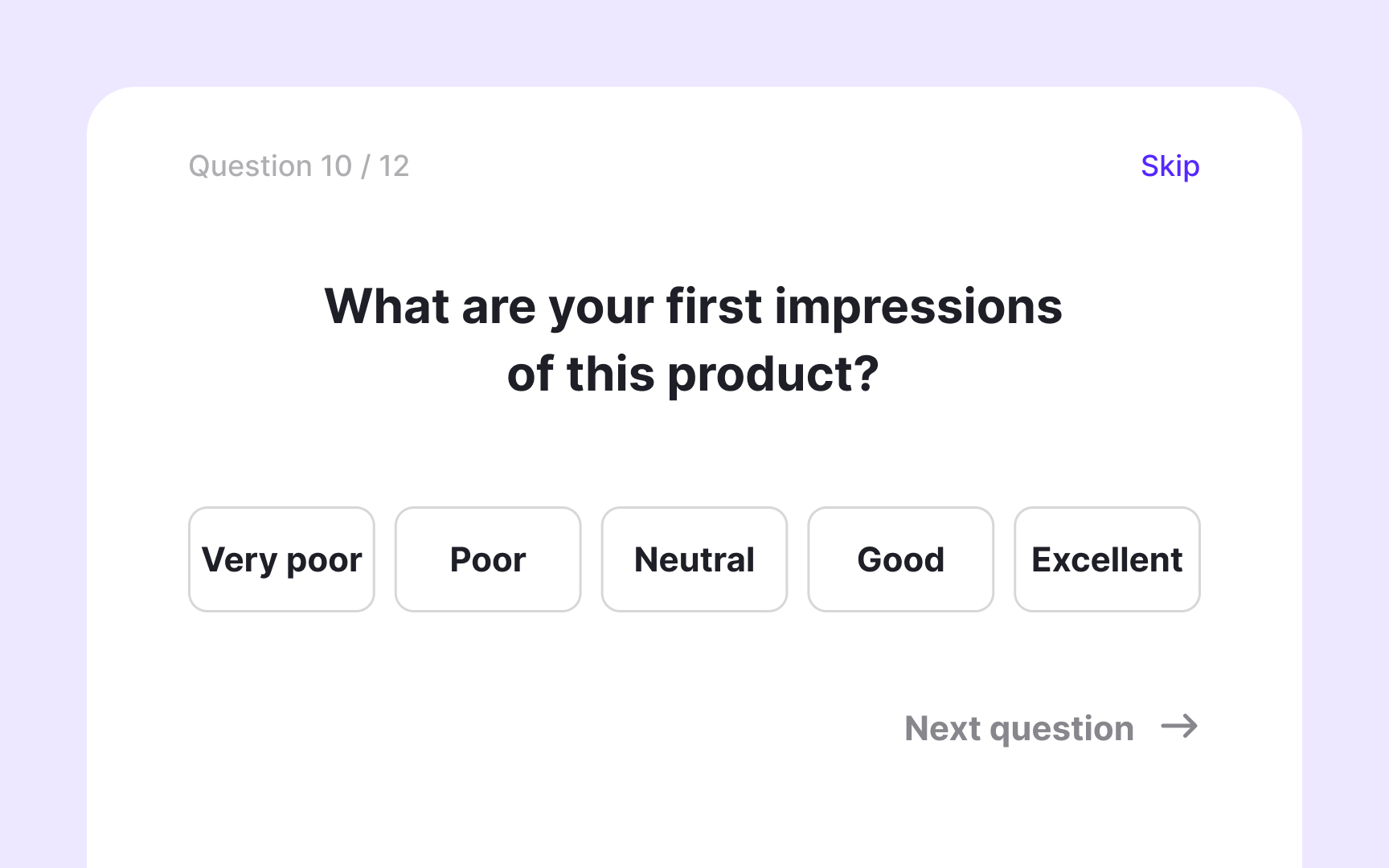

These questions work best when kept open-ended. Closed questions like "Would you use this?" produce "yes" or "no" answers that tell you very little.

For example, if you are testing an early concept for a budgeting app, your concept testing questions might include:

- What are your first impressions of this product?

- What would you expect to be able to do with it?

- What, if anything, would make you hesitant to use it?

- How does this compare to how you currently handle this problem?

- What would need to change for this to feel useful to you?

Pro Tip! This kind of question is only relevant once participants have seen or tried the concept at least once, for example through a demo or prototype walkthrough.

Gather product reactions to surface improvement opportunities

Once participants have spent real time with your product, whether during a beta period or months of regular use, you have an opening to ask a different kind of question. Product reaction questions shift the focus from hypothetical impressions to lived experience. Instead of asking what users expect or imagine, you are asking what they have actually encountered.

These questions serve two purposes. They reveal what is working, giving you evidence to protect and build on. And they surface friction and unmet expectations, giving your team a basis for prioritization. Both matter. Knowing what users love helps you avoid accidentally removing it. Knowing what frustrates them tells you where to focus next.

The key is keeping questions open-ended and grounded in real experience. Asking users to recall a specific moment tends to produce richer responses than abstract prompts like "What do you think overall?"

For example, if you are interviewing long-term users of a project management tool, your reaction questions might include:

- What part of this product do you rely on most?

- Where has the product surprised you, positively or negatively?

- Tell me about a time when the product didn't do what you expected.

- What would you change if you could change one thing?