Decision quality is not the same as outcome quality, and confusing the two is one of the most expensive thinking errors product teams make. Most product reviews focus on what happened: the feature flopped, the launch underdelivered, the metric did not move. Rarely do they ask the harder question: was the decision itself sound, given what the team knew at the time? Conflating these two things leads to bad learning. Teams reward lucky guesses and punish good judgment that ran into bad luck. Over time, that pattern degrades the very process you rely on.

The science of how the brain processes uncertainty matters here. Cognitive biases quietly sabotage product teams, starting with resulting and hindsight bias, then moving into prospect theory, which explains why users hate losing a feature far more than they love gaining a new one.

Running pre-mortems surfaces failure scenarios before they happen, and probability calibration replaces vague gut feelings with explicit confidence levels. The goal is a repeatable discipline: judge product decisions by the quality of your thinking, not the luck of your outcomes.

How cognitive bias distorts product decisions

The human brain is not designed for truth. It is designed for survival. The mental shortcuts that helped humans act fast in dangerous, unpredictable environments are the same shortcuts that make product decisions feel more certain than they are. Cognitive biases are systematic patterns of thinking that cause people to deviate from rational judgment, not because they are careless, but because that is how human cognition works by default. For product managers, this matters a great deal. You work in conditions of high uncertainty, partial data, and social pressure from stakeholders. Those are exactly the conditions in which bias does the most damage. A PM analyzing feature adoption might unconsciously give more weight to data that supports a decision already made. A team reviewing a failed launch might reconstruct the timeline to make the outcome feel inevitable. These are not failures of intelligence; they are failures of process.

The antidote is not to "try harder to be objective." It is to build structural guardrails into how your team makes decisions: checklists, pre-mortems, explicit confidence levels, and retrospectives that separate process from outcome. Awareness is the first step. Guardrails are the second.[1]

Pro Tip! High conviction in an idea is not evidence that the idea is good. Treat strong gut confidence as a signal to stress-test harder, not a reason to skip validation.

How resulting bias distorts product retrospectives

Resulting is the cognitive error of evaluating a decision's quality based on what happened next. If the feature shipped and metrics went up, the decision was good. If it flopped, the decision was bad. That logic feels sensible. It is also deeply flawed.

Outcomes are shaped by many forces the PM had no control over: a competitor's launch, a platform change, a shift in user behavior, or simple timing. A product manager who made a careful, well-researched decision can still face a bad outcome. And a PM who guessed and got lucky can sail through a review looking brilliant. If the team draws lessons from the outcome rather than the process, it reinforces the wrong habits in both cases.

The right way to evaluate a decision is to ask: given what was known at the time, was this the best reasoning available? An outcome is just one data point. A process is what repeats.[2]

Pro Tip! Before a retrospective, write down what was known and what the team reasoned at decision time. Evaluate the process before the outcome recolors the story.

How the process-outcome matrix separates luck from skill

A two-by-two matrix that maps process quality against outcome quality is one of the clearest tools for evaluating decisions fairly. Each quadrant tells a different story, and only two of them are what they appear to be.

- Good process + good outcome: deserved success. The reasoning was solid, and it worked. This is one of only two quadrants where the result accurately reflects the quality of the decision.

- Bad process + bad outcome: deserved failure. No surprises here. The shortcut didn't pay off, and the lesson is clear.

- Good process + bad outcome: bad luck. The team researched carefully, ran experiments, and validated assumptions. Then a competitor launched first, or a key customer churned for unrelated reasons. Firing the PM here is a mistake that teaches the team not to do rigorous work.

- Bad process + good outcome: dumb luck. The team skipped discovery, moved on intuition, and shipped something that happened to land. This is the most dangerous quadrant. The team now believes the shortcut works and will repeat it.

Product leaders who only track outcomes will over-rotate on lucky wins and under-invest in the disciplined processes that produce consistent results over time.
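The four quadrants above can be sketched as a small lookup that a team might use when tagging decisions in a retrospective. This is a minimal illustration, not a prescribed tool: the names `Quadrant` and `classify` are hypothetical, and reducing process and outcome quality to a yes/no rating is a simplifying assumption.

```python
from enum import Enum


class Quadrant(Enum):
    """Labels taken from the process-outcome matrix described above."""
    DESERVED_SUCCESS = "deserved success"
    BAD_LUCK = "bad luck"
    DUMB_LUCK = "dumb luck"
    DESERVED_FAILURE = "deserved failure"


def classify(good_process: bool, good_outcome: bool) -> Quadrant:
    """Map a (process, outcome) rating onto one of the four quadrants.

    Assumption: the team has already rated process quality on its own,
    ideally before the outcome was discussed.
    """
    if good_process and good_outcome:
        return Quadrant.DESERVED_SUCCESS
    if good_process:
        return Quadrant.BAD_LUCK      # rigorous work, unlucky result
    if good_outcome:
        return Quadrant.DUMB_LUCK     # shortcut that happened to land
    return Quadrant.DESERVED_FAILURE


if __name__ == "__main__":
    # A carefully researched launch that still underdelivered.
    print(classify(good_process=True, good_outcome=False).value)
```

Keeping the process rating as a separate input makes it harder for a good or bad outcome to silently overwrite the judgment of how the decision was made.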

Pro Tip! In retrospectives, ask the team to rate process quality before discussing the outcome. It keeps rigorous PMs from being judged on factors they could not control.

How hindsight bias distorts product retrospectives

Hindsight bias is the tendency to believe, after an outcome is known, that it was predictable all along. A feature fails, and suddenly everyone sees the warning signs. A competitor wins market share, and the team reconstructs a narrative in which they should have seen it coming. This "I knew it all along" feeling is not memory. It is reconstruction. The brain quietly fills in a causal story after the fact and presents it as prior knowledge.

For product teams, hindsight bias is expensive in two ways. First, it creates false confidence in forecasting ability. If the team believes past failures were predictable, they will trust their instincts on future calls more than the evidence warrants. Second, it distorts retrospectives. When teams rewrite the past to make the outcome feel inevitable, they cannot accurately identify the actual failure points or decision moments that mattered.

The counter is documentation. Write down your assumptions, your confidence levels, and your reasoning before outcomes are known. A decision journal, kept honestly, gives the team a way to evaluate their thinking against the actual state of knowledge at decision time, not the version reconstructed afterward.[3]
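A decision journal entry can be as simple as a structured record that is written once and only completed after the fact. The sketch below is one possible shape, assuming a single testable prediction per decision; the class name `DecisionEntry` and all of its fields are hypothetical and not taken from any particular tool.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class DecisionEntry:
    """One journal entry, written before the outcome is known."""
    title: str
    decided_on: date
    assumptions: List[str]
    reasoning: str
    prediction: str                  # a claim that can later be checked
    confidence: float                # stated probability, 0.0 to 1.0
    outcome: Optional[bool] = None   # filled in only at the retrospective

    def __post_init__(self) -> None:
        if not 0.0 <= self.confidence <= 1.0:
            raise ValueError("confidence must be between 0.0 and 1.0")

    def record_outcome(self, came_true: bool) -> None:
        """Record the result once; the original reasoning stays untouched."""
        if self.outcome is not None:
            raise RuntimeError("outcome already recorded")
        self.outcome = came_true


if __name__ == "__main__":
    entry = DecisionEntry(
        title="Simplify onboarding flow",
        decided_on=date(2024, 3, 1),
        assumptions=["Drop-off is concentrated in step 3"],
        reasoning="Interviews and funnel data both point at step 3.",
        prediction="Activation rises by more than 5% within 60 days",
        confidence=0.65,
    )
    entry.record_outcome(False)
    print(entry.title, entry.confidence, entry.outcome)
```

Because the assumptions, reasoning, and confidence are captured at decision time, the retrospective can compare them against the outcome instead of against a reconstructed memory.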

Pro Tip! At project kickoff, ask each person to write down the most likely reason the project will fail. Open those answers at the retrospective to compare what people remember believing with what they actually believed.

Prospect theory and loss aversion in product decisions

Prospect theory, developed by Daniel Kahneman and Amos Tversky, describes how people actually evaluate gains and losses compared to a reference point. The core finding is asymmetric: losses feel roughly twice as painful as equivalent gains feel good. Losing $100 is not the psychological mirror image of gaining $100. The loss registers far more powerfully.

This has a direct implication for product work. Users do not evaluate features on an absolute scale of value. They evaluate them relative to what they already have. A new feature might add real utility, but if it replaces something familiar, the loss of the old behavior often dominates the user's emotional response, regardless of the net improvement. This is why sunsetting a feature with low usage still generates disproportionate anger from the small group that relied on it.

The theory also explains why it is so hard to get users to switch from a competitor. To overcome the switching cost, which is experienced as a loss of familiarity, a new product typically needs to offer dramatically more value, not just a marginal improvement. A 10% better product rarely converts a loyal user. The bar is much higher.[4]
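The asymmetry between gains and losses can be made concrete with the value function commonly used in prospect theory. The sketch below is illustrative only: the parameter values (a curvature of 0.88 and a loss-aversion coefficient of 2.25) are the median estimates reported by Tversky and Kahneman in their 1992 follow-up work, not figures from this lesson, and the function name `subjective_value` is hypothetical.

```python
def subjective_value(x: float, curvature: float = 0.88,
                     loss_aversion: float = 2.25) -> float:
    """Felt value of a gain or loss of size x relative to the reference point.

    Gains are dampened by the curvature exponent; losses use the same
    curve but are scaled up by the loss-aversion coefficient.
    Assumption: default parameters are the 1992 median estimates.
    """
    if x >= 0:
        return x ** curvature
    return -loss_aversion * ((-x) ** curvature)


if __name__ == "__main__":
    gain = subjective_value(100)
    loss = subjective_value(-100)
    print(f"felt value of +$100: {gain:.1f}")
    print(f"felt value of -$100: {loss:.1f}")
    print(f"loss/gain ratio: {abs(loss) / gain:.2f}")
```

With these assumed parameters, a loss weighs 2.25 times as much as a gain of the same size, which is consistent with the "roughly twice as painful" rule of thumb above.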

Pro Tip! When sunsetting a feature, acknowledge the loss explicitly. Users who feel heard about what they are losing tend to be more forgiving than those who are not.

How loss aversion shapes product pricing decisions

Pricing is one of the clearest places where loss aversion plays out in product decisions. Raising prices triggers a loss response that is much stronger than the satisfaction a discount creates. A 20% price increase feels more significant than a 20% discount feels generous, even though the numbers are identical. This asymmetry is not about logic. It is about how people experience change relative to a reference point they already consider theirs.

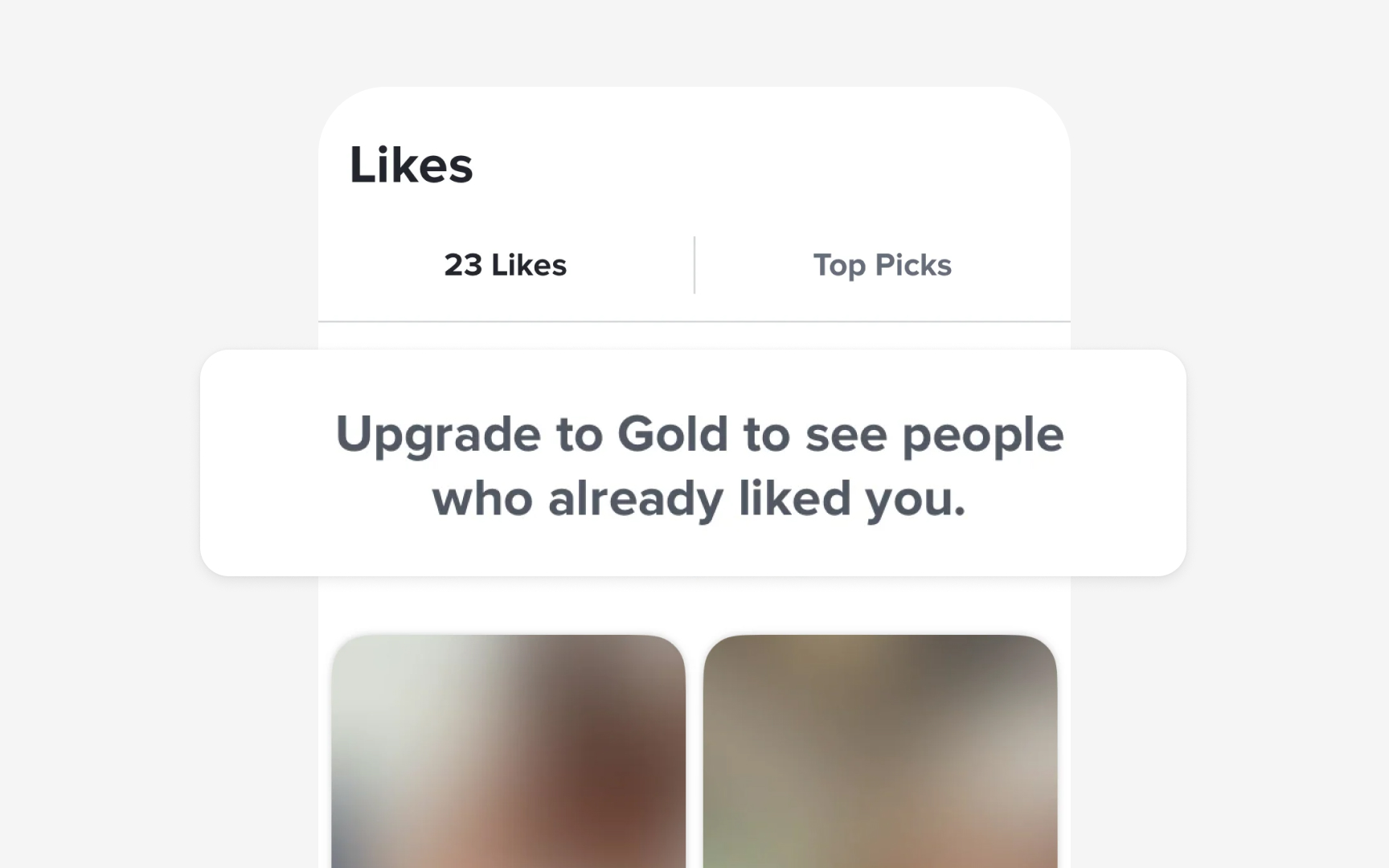

This has a direct implication for how product teams structure pricing changes. Introducing a new tier at a higher price point is less painful for users than raising the price of an existing plan. The existing plan has become the reference point. Changing it feels like taking something away. A new tier, by contrast, is an addition, and users who ignore it lose nothing.

The same logic applies to freemium upgrades. Framing a paid plan as unlocking more value lands differently than framing it as losing access to features on the free tier. The content is identical. The psychological experience is not.[5]

Probability calibration: thinking in confidence levels

One of the quietest and most damaging habits in product planning is binary thinking. A feature will work, or it will not. A bet is good, or it is bad. That framing eliminates nuance at exactly the moment nuance matters most. Every product decision is a forecast about an uncertain future, and the honest way to treat a forecast is as a probability, not a verdict.

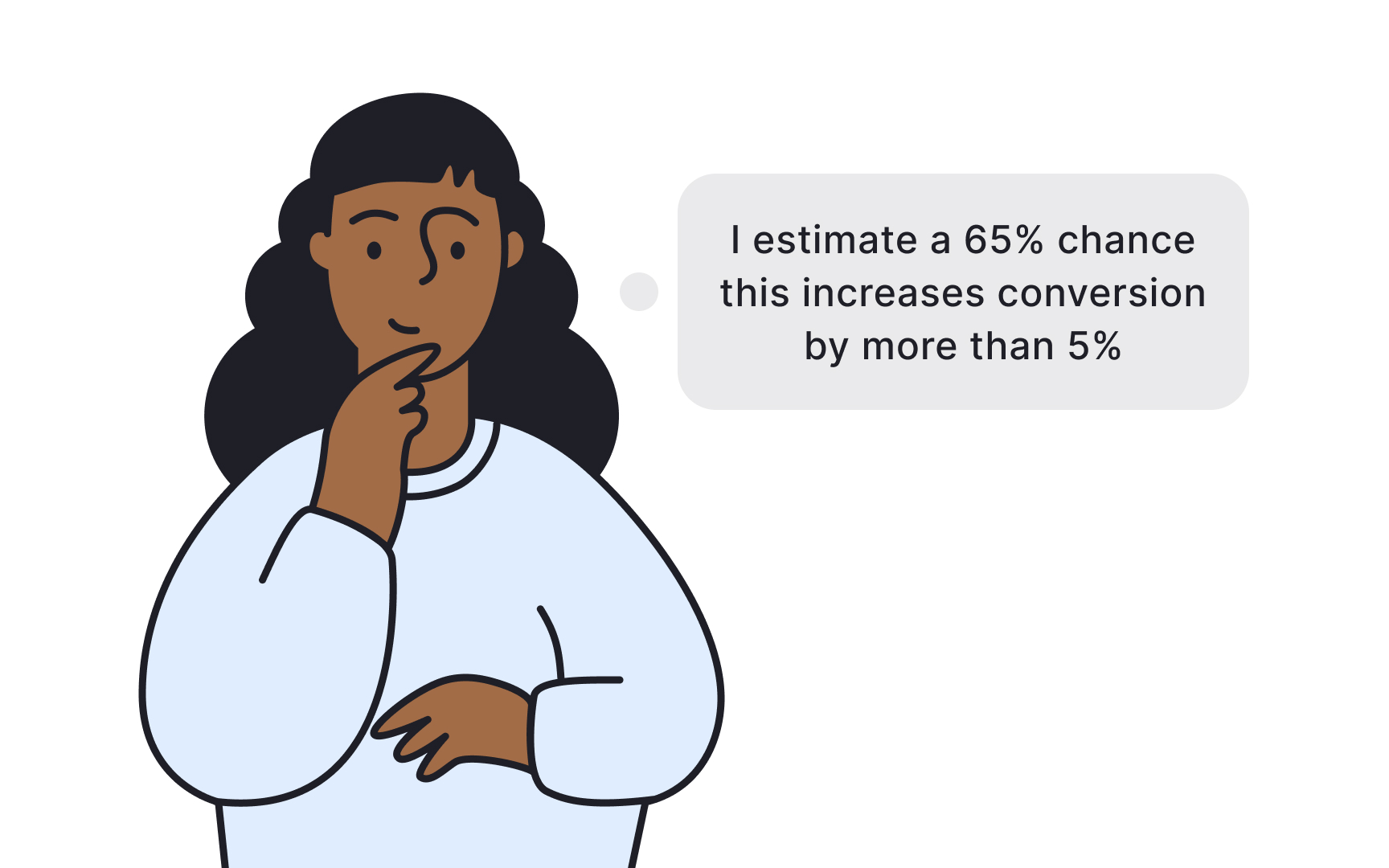

Probability calibration is the practice of attaching explicit confidence levels to your beliefs and then tracking how those beliefs perform over time. Instead of saying "I think this will increase conversion," a calibrated PM says "I estimate a 65% chance this increases conversion by more than 5%, with a wide range of uncertainty."

That phrasing forces two things: an acknowledgment that the outcome is uncertain, and a specific enough claim that you can actually evaluate it later.

Over time, calibration improves through feedback. If you say you are 80% confident about 10 decisions and only 4 of them land, your confidence is miscalibrated, and that is valuable information about your own reasoning. Teams that practice calibration together also communicate more precisely about uncertainty, replacing vague language like "probably" with ranges and explicit confidence levels that everyone interprets the same way.[6]
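Checking calibration is simple arithmetic once forecasts are logged as pairs of stated confidence and actual result. The following is a minimal sketch under that assumption; the function names are hypothetical, and the Brier score is a standard scoring rule added here for illustration rather than something the lesson itself prescribes.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

Forecast = Tuple[float, bool]  # (stated probability, did it come true?)


def calibration_table(forecasts: Iterable[Forecast]) -> Dict[float, float]:
    """Group forecasts by stated confidence (rounded to one decimal)
    and return the observed hit rate for each group."""
    buckets: Dict[float, List[int]] = defaultdict(list)
    for probability, came_true in forecasts:
        buckets[round(probability, 1)].append(1 if came_true else 0)
    return {p: sum(hits) / len(hits) for p, hits in sorted(buckets.items())}


def brier_score(forecasts: Iterable[Forecast]) -> float:
    """Mean squared gap between stated probability and result.
    0.0 is perfect; always answering 50% scores 0.25."""
    items = list(forecasts)
    return sum((p - (1 if hit else 0)) ** 2 for p, hit in items) / len(items)


if __name__ == "__main__":
    # The example from the text: ten calls at 80% confidence, four land.
    log = [(0.8, True)] * 4 + [(0.8, False)] * 6
    print(calibration_table(log))   # stated 0.8, observed hit rate 0.4
    print(round(brier_score(log), 2))
```

A well-calibrated forecaster's observed hit rate in each bucket sits close to the stated confidence; here the 80% bucket lands at 40%, which signals overconfidence.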

Run a pre-mortem before you launch

A standard risk assessment has a social problem: nobody wants to be the person who kills the CEO's idea. When the team gathers to discuss what might go wrong with a high-profile initiative, the culture of optimism and hierarchy suppresses honest input. The result is a sanitized risk register that misses the real failure modes. The pre-mortem, a technique developed by research psychologist Gary Klein, solves this by flipping the frame. Instead of asking "what might go wrong?", a pre-mortem starts with a hypothetical: "It is one year from now. This initiative has failed badly. What happened?" That reframe works because it gives the team permission to be pessimistic. They are not expressing doubt about a live decision. They are doing imaginative scenario analysis about an imagined failure.

In practice, each team member spends 10 to 15 minutes individually writing down their most realistic failure scenarios, then the team shares and clusters the results. The exercise consistently surfaces risks that were known to individuals but had never been said out loud because they seemed too obvious, too sensitive, or too much like disloyalty. Once named, those risks can be prioritized and mitigated.[7]

References

- The Effect of Cognitive Bias on Decisions and Product | Pragmatic Institute

- Resulting Fallacy

- Prospect Theory | The Decision Lab

- Prospect Theory and Loss Aversion: How Users Make Decisions | Nielsen Norman Group

- Prospect Theory and Loss Aversion: How Users Make Decisions | Nielsen Norman Group

- https://www.schroders.com/en-gb/uk/intermediary/insights/probability-and-decision-making--with-annie-duke-part-1/

- Premortems in product management; why pessimism pays | Mind the Product