Once specifications are written, their accuracy and usability must be tested before development begins. Reviewing and validating specifications is where ideas meet reality. A clear spec makes feasibility easier to judge, but only validation reveals whether the proposed solution truly works for users.

This stage connects documentation with experience. Teams build low-fidelity prototypes to test how real users interact with the proposed features. By observing what feels intuitive and what causes friction, product teams identify missing details, unclear requirements, or unnecessary complexity. These insights guide precise adjustments to the specification, preventing costly rework later.

Validation does not replace review; it works alongside it. Review ensures internal clarity, consistency, and feasibility, while validation checks relevance, real-world desirability, and usability. Together, they close the loop between writing and building, helping teams confirm that they’re not only building the product right, but also building the right product.

Defining review vs validation

Reviewing and validating a specification serve different but complementary purposes in the product development process.

A review focuses on internal quality. It checks whether every section of the document is clear, consistent, and technically feasible. This is typically done with the engineering, UX, and business teams, who look for missing details, conflicting descriptions, and assumptions that could lead to confusion later. The goal is to confirm that requirements are complete, measurable, and aligned with the project's objectives before any design or build work begins.

Validation examines whether the proposed solution will work for real users. When possible, it is done externally through prototype testing or user interviews. That said, external validation is not always feasible. It can be expensive, and for lower-risk decisions, the effort may not be justified. In those cases, internal sessions or peer reviews can serve as a practical alternative, still surfacing blind spots and challenging assumptions before development begins.

A validated specification gives evidence that what is planned is not only technically sound but also valuable and usable, confirming that the team is building the right product, not just building it correctly.

Preparing the specification for review

Before a product specification goes to review, it must be clear, organized, and detailed enough to guide discussion effectively. Always open with the problem or opportunity and why it matters now, even if everyone in the room already knows the project. Taking that moment to step back and align on the why sets the right foundation for everything that follows.

Preparing the right people is just as important as preparing the document. Missing key stakeholders can block decisions. Too many people can turn a review into a presentation where no one raises concerns. Before the session, talk with owners of areas where you have dependencies: other product managers, architects, designers, and engineering teams. Small working sessions ahead of the review help surface dependencies, shape the solution, and ensure the right voices are in the room when it counts.

A good review session is not just a presentation. Structure it to also:

- Identify risks and open questions

- Get different teams talking to each other

- Surface concerns that only emerge when the right people are in the same conversation

The document itself should follow a logical order with consistent language, linking related elements such as user stories and acceptance criteria to show how each feature connects to business or user outcomes. Clear definitions of goals, user needs, and constraints reduce ambiguity and give reviewers the measurable criteria they need to evaluate completeness and feasibility.[1]

Running a structured peer review

A peer review transforms specification writing from an isolated task into a collaborative quality check. The process brings together team members from product, design, and engineering to assess whether each requirement is clear, testable, and aligned with overall goals.

During the session, create space for questions and discussion. Not every topic needs to be resolved in the room. Some conversations add value for everyone present, such as discussing how the current architecture can support a specific experience. Others are better handled separately:

- Designers and developers debating a component's specific behavior

- Developers from different teams aligning on an API response

- Any discussion that goes too deep for the broader group to contribute to

Knowing when to redirect a conversation to a smaller follow-up meeting keeps the review focused and productive. If the scope is large, schedule more than one session rather than rushing through it.

Make sure each feature is reviewed end-to-end and that the owners of each area are fully aware of all the details. A structured checklist helps reviewers evaluate completeness, accuracy, and traceability, while walkthrough sessions allow participants to question assumptions and clarify the reasoning behind design or technical decisions.
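Parts of such a checklist can be automated. The sketch below is a minimal Python illustration, not a standard tool: it assumes each requirement is stored with a hypothetical ID, an optional link to a user story, and a list of acceptance criteria, and it flags vague wording (from an illustrative, non-exhaustive word list) and missing links. It supports the human walkthrough rather than replacing it.

```python
from __future__ import annotations

import re
from dataclasses import dataclass, field

# Illustrative, non-exhaustive list of words that often signal an untestable requirement.
VAGUE_TERMS = ("quickly", "fast", "easy", "intuitive", "user-friendly", "simple")


@dataclass
class Requirement:
    req_id: str                      # hypothetical ID scheme, e.g. "R1"
    text: str
    user_story: str | None = None    # ID of the linked user story, if any
    acceptance_criteria: list[str] = field(default_factory=list)


def review_checklist(req: Requirement) -> list[str]:
    """Return checklist findings for one requirement; an empty list means nothing was flagged."""
    findings = []
    for term in VAGUE_TERMS:
        # Whole-word match so that "fast" does not fire on "breakfast".
        if re.search(rf"\b{re.escape(term)}\b", req.text, flags=re.IGNORECASE):
            findings.append(f"{req.req_id}: vague term '{term}'")
    if req.user_story is None:
        findings.append(f"{req.req_id}: not linked to a user story")
    if not req.acceptance_criteria:
        findings.append(f"{req.req_id}: no acceptance criteria")
    return findings


for finding in review_checklist(Requirement("R1", "The page should load quickly.")):
    print(finding)
```

A requirement such as "The page should load quickly." produces three findings here: a vague term, no linked user story, and no acceptance criteria. Each one is a concrete prompt for the review session.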

By combining different perspectives, teams strengthen the specification's quality and prevent issues that often surface only during development.

Pro Tip! Use checklists and cross-functional walkthroughs to detect unclear logic or missing links before building anything.

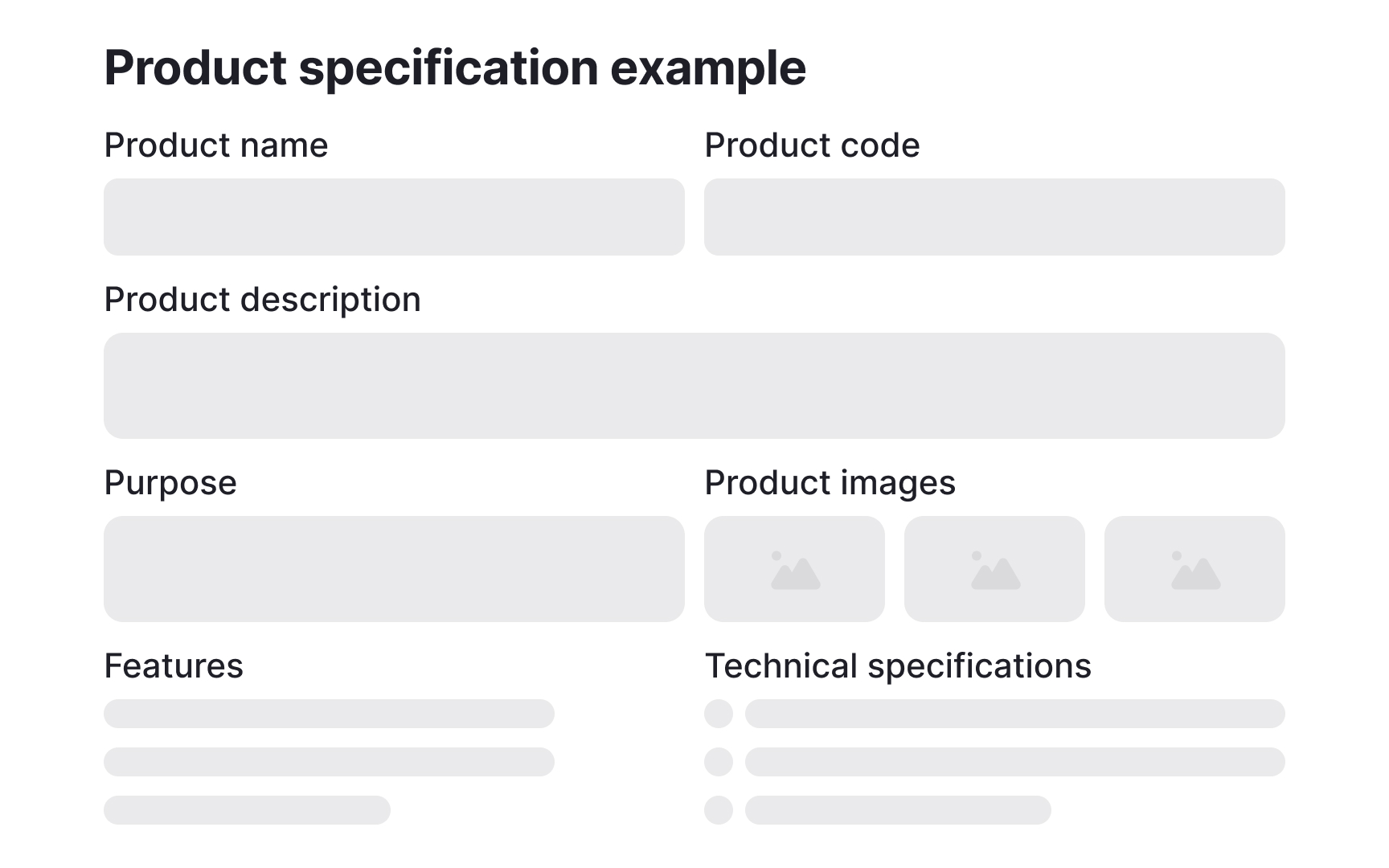

Creating a prototype from a specification

Prototyping transforms written requirements into something tangible that users can interact with. Once the core features are defined, teams can create a basic clickable model using tools like Figma or Miro. With AI tools like Lovable, functional prototypes are now within reach even at early stages. If there is significant uncertainty about what is being built, creating a Proof of Concept and testing it with users is a valuable option. When feasible, running it in parallel with the existing product can surface real-world insights without disrupting what is already live.

Not every functionality has a user interface to prototype. For backend features like pricing logic or order flow changes, creating detailed flows or use cases and walking through them with the involved stakeholders serves the same purpose. It makes abstract requirements concrete and gives teams a shared reference to validate before development begins.

Either way, the goal is the same: make gaps visible before they reach development. A checkout process that looks straightforward in writing might reveal friction when users struggle to find the "Continue as guest" option. These insights highlight where requirements need clarification, where steps can be simplified, or where functionality is missing altogether. Testing at this stage helps the product spec evolve from assumptions into verified decisions, making development smoother and more aligned with real-world use.

Pro Tip! When testing a prototype, stay silent. Watching users struggle or succeed without guidance reveals the most authentic insights.

Conducting user validation sessions

User validation sessions help teams see whether the planned solution reflects real user behavior, but timing matters. Ideally, these sessions happen before the specs are written and before the review, because the insights they generate should inform the specification, not the other way around.

UX designers are a natural fit to help run these sessions. When possible, have one person facilitate while another takes notes, and record the session so nothing is missed.

The sessions themselves are usually short and focused. A facilitator introduces the goals, and then a participant completes predefined tasks using a prototype, such as creating an account, finding a product, or completing checkout. Participants are encouraged to think aloud, describing what they expect to happen and what confuses them. Subtle cues like hesitation, scanning, or backtracking often reveal unclear logic or missing guidance that would never surface from reading the spec alone.

Afterward, all observations are logged and connected to specific requirements or user stories, identifying exactly where the spec needs to be refined. A short round of sessions with 5 to 7 participants is often enough to uncover major usability issues. When repeated throughout development, user validation creates a reliable loop between design intent and user reality.
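Why can 5 to 7 participants be enough? One frequently cited model, from Nielsen and Landauer's work on usability problem discovery, estimates the share of problems found by n participants as 1 - (1 - L)^n, where L is the chance that a single participant exposes a given problem. The sketch below assumes L = 0.31, the average Nielsen has commonly cited; treat that value as an assumption, since L varies with the product, the tasks, and how similar the participants are.

```python
def share_of_problems_found(participants: int, discovery_rate: float = 0.31) -> float:
    """Expected fraction of usability problems found, per the 1 - (1 - L)^n model.

    discovery_rate (L) is an assumption: 0.31 is a commonly cited average,
    but the real value depends on the product, the tasks, and the participants.
    """
    if participants < 0 or not 0.0 <= discovery_rate <= 1.0:
        raise ValueError("participants must be >= 0 and discovery_rate in [0, 1]")
    return 1.0 - (1.0 - discovery_rate) ** participants


for n in range(1, 8):
    print(f"{n} participants: {share_of_problems_found(n):.0%}")
```

Under that assumption, five participants surface roughly 84% of problems and seven roughly 93%, and each additional participant adds less than the one before. That diminishing return is one argument for running several small rounds throughout development rather than one large study.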

Pro Tip! Focus on watching, not explaining. The best usability insights appear when users try to figure things out on their own.

Collecting and synthesizing feedback

After validation sessions, teams must translate observations into structured insights. Raw notes, quotes, and metrics become useful only when organized and analyzed systematically. Start by clustering feedback into themes such as "navigation confusion" or "missing features," then link each cluster to the relevant section of the specification. This makes it clear which requirements need revision and which work as expected.
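Once the themes are chosen, the bookkeeping that links each cluster to the spec is easy to keep consistent. This minimal Python sketch assumes each observation has already been tagged by hand with a theme and a hypothetical spec-section ID; deciding the themes remains a human synthesis step, and the code only groups notes and records which sections each theme touches.

```python
from __future__ import annotations

from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    participant: str
    note: str
    theme: str          # assigned during synthesis, e.g. "navigation confusion"
    spec_section: str   # hypothetical section ID in the spec, e.g. "2.1 Search"


def cluster_by_theme(observations: list[Observation]) -> dict[str, dict]:
    """Group observations by theme and collect the spec sections each theme points to."""
    clusters = defaultdict(lambda: {"observations": [], "sections": set()})
    for obs in observations:
        clusters[obs.theme]["observations"].append(obs)
        clusters[obs.theme]["sections"].add(obs.spec_section)
    return dict(clusters)


# Hypothetical notes from a validation round.
notes = [
    Observation("P1", "Could not find the filters", "navigation confusion", "2.1 Search"),
    Observation("P3", "Went back to the homepage to search again", "navigation confusion", "2.1 Search"),
    Observation("P2", "Expected to save items for later", "missing features", "4.0 Saved items"),
]
for theme, cluster in cluster_by_theme(notes).items():
    print(theme, len(cluster["observations"]), sorted(cluster["sections"]))
```

Here both navigation notes land in one cluster that points to the search section, which is where the spec revision would start.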

Prioritization is essential. Not every comment should trigger a change. Focus first on issues that block task completion or contradict user goals. If multiple users say that the "Apply filter" button feels unnecessary, that might be a minor preference. But if users repeatedly fail to notice the "Add to cart" button because it looks inactive, that points to a critical usability problem that requires updating both the interface and its related specification.

Use qualitative data like user quotes alongside quantitative indicators like task completion rates to form a balanced view of what needs improvement.
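The prioritization rule above (blocking issues first) can be written down explicitly so the whole team applies it the same way. The Python sketch below is one possible encoding, not a standard method: it assumes each issue is recorded with whether it blocked task completion, how many participants hit it, and a representative quote, and it pairs that with a task completion rate as the quantitative signal. The example data is hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Issue:
    title: str
    blocks_task: bool            # did it stop participants from finishing the task?
    participants_affected: int   # how many participants ran into it
    quote: str                   # representative user quote (qualitative evidence)


def completion_rate(completed: int, attempted: int) -> float:
    """Share of participants who finished a task (quantitative evidence)."""
    if attempted <= 0:
        raise ValueError("attempted must be positive")
    return completed / attempted


def prioritize(issues: list[Issue]) -> list[Issue]:
    """Blocking issues first; within each group, the most frequently observed first."""
    return sorted(issues, key=lambda i: (not i.blocks_task, -i.participants_affected))


# Hypothetical findings mirroring the examples in the text.
issues = [
    Issue("'Apply filter' feels unnecessary", False, 4, "Why do I have to click this?"),
    Issue("'Add to cart' looks inactive", True, 3, "I thought I couldn't buy it yet."),
]
for issue in prioritize(issues):
    print(issue.title, "|", issue.quote)
print(f"Checkout completion: {completion_rate(2, 6):.0%}")  # prints "Checkout completion: 33%"
```

Even though more participants mentioned the filter button, the blocking "Add to cart" issue sorts first, which matches the guidance above.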

Once the analysis is complete, share the insights broadly, especially with other product managers and designers. Validation findings rarely affect just one area of the product. Getting the right people aligned on what was learned ensures that spec refinements are consistent, well-informed, and connected to decisions being made across the team.[2]

Pro Tip! Always link feedback to specific parts of the spec. It prevents insights from getting lost and turns opinions into actionable changes.

Refining requirements based on findings

Once validation and feedback analysis are complete, the next step is to refine the specification so that it accurately reflects what users need and understand. Every change should be based on real evidence, not preference.

Start by revisiting the assumptions that were not confirmed during validation. These are often where the most significant spec changes come from. For example, suppose the goal was to increase usage of a feature by placing it on the homepage, but validation revealed that users are typically on a different page when they need it. In that case, the placement assumption was wrong, and the spec needs to reflect that.

Refinement also involves removing unnecessary details that add noise without helping the product perform better. Keeping sections focused helps team members stay on track. Implementation specifics belong in related design or technical documents, not in the functional spec.

Document all changes directly in the specification, linking each edit to the validation results that informed it. This keeps the document transparent and traceable. If the changes are significant, schedule a new review session to share the findings and updated specs with the relevant teams. A refined specification captures not only what was planned, but what was actually proven to work for users.
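A lightweight, structured change record makes that traceability checkable. The sketch below uses a hypothetical format, not a feature of any particular documentation tool; it reuses the homepage placement example from earlier in this section and flags changes that lack a reason or a link to validation evidence.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class SpecChange:
    requirement_id: str          # hypothetical ID scheme
    before: str
    after: str
    reason: str
    evidence: tuple[str, ...]    # references to validation findings (hypothetical format)
    changed_on: date


def untraceable(changes: list[SpecChange]) -> list[SpecChange]:
    """Changes that cannot be traced back to a reason and to validation evidence."""
    return [c for c in changes if not c.reason.strip() or not c.evidence]


placement = SpecChange(
    requirement_id="REQ-12",
    before="Show the feature's entry point on the homepage.",
    after="Show the feature's entry point on the page where users need it.",
    reason="Validation showed users are on a different page when they need the feature.",
    evidence=("validation-round-2/finding-07",),
    changed_on=date.today(),
)
print(untraceable([placement]))  # prints [] because the change has a reason and evidence
```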

Ensuring testability of revised specs

After updates are made, each requirement should be clear enough to test in practice. A testable specification gives QA the information needed to create test cases. If there is no QA on the team, the goal is not to write the tests yourself, but to make sure all the information needed to test is available in the spec.

To make a requirement measurable, describe what success looks like in specific terms. Instead of saying "The page should load quickly," define a limit such as "The page loads in under three seconds on mobile." Make sure to describe the full flow, not just what users see. For example, after users click "Create document," a draft is created. Users can edit it, but it only becomes saved after a name and folder are defined.
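Writing a flow down as explicit states is one way to check that the spec covers what happens behind the screen. The Python sketch below models the document example under stated assumptions: the class and function names are hypothetical, and the rule that editing a saved document leaves it saved is an assumption the spec would need to confirm. Gaps like that one are exactly what this exercise tends to expose.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class DocState(Enum):
    DRAFT = "draft"
    SAVED = "saved"


@dataclass
class Document:
    content: str = ""
    name: str | None = None
    folder: str | None = None
    state: DocState = DocState.DRAFT   # "Create document" always starts as a draft

    def edit(self, content: str) -> None:
        # Assumption: editing never changes the state. The spec should say
        # whether editing a saved document creates a new draft.
        self.content = content

    def save(self) -> None:
        # Per the example flow, saving requires both a name and a folder.
        if not self.name or not self.folder:
            raise ValueError("A name and a folder are required before saving")
        self.state = DocState.SAVED


def create_document() -> Document:
    """Hypothetical handler for the "Create document" click."""
    return Document()
```

Each branch in this sketch (draft on creation, save rejected without a name or folder, save accepted with both) maps to a test case QA could write directly from the spec.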

Testability applies to different types of requirements:

- Functional: Confirm whether a user action triggers the right response, for example, "When clicking 'Save,' a confirmation appears within two seconds."

- Usability: Measure how easily users complete key tasks, like "80% of participants complete checkout without asking for help."

- Performance: Track system efficiency through metrics such as load time, frame rate, or error rate.

- Accessibility: Verify that color contrast, keyboard navigation, and screen reader labels meet the applicable accessibility standards, such as WCAG.
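Each of these requirement types can be phrased as a check that either passes or fails. The Python sketch below shows one possible shape for the functional, usability, and accessibility examples, using the thresholds from the examples above. The function names are hypothetical; the contrast calculation follows the WCAG 2.x relative-luminance formula, and the 4.5:1 value in the usage line is WCAG's AA minimum for normal-size text.

```python
from __future__ import annotations


def all_within(samples_s: list[float], limit_s: float) -> bool:
    """Functional check: every measured response time is at or below the limit.

    An empty sample set fails, since nothing was measured.
    """
    return bool(samples_s) and all(s <= limit_s for s in samples_s)


def usability_target_met(completed_without_help: int, participants: int,
                         target: float = 0.80) -> bool:
    """Usability check: the share of participants finishing unaided meets the target."""
    if participants <= 0:
        raise ValueError("participants must be positive")
    return completed_without_help / participants >= target


def _linear_channel(c8: int) -> float:
    """Convert an 8-bit sRGB channel to linear light (WCAG 2.x formula)."""
    c = c8 / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb: tuple[int, int, int]) -> float:
    r, g, b = (_linear_channel(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(fg: tuple[int, int, int], bg: tuple[int, int, int]) -> float:
    """Accessibility check input: WCAG contrast ratio, from 1:1 up to 21:1."""
    lighter, darker = sorted((relative_luminance(fg), relative_luminance(bg)), reverse=True)
    return (lighter + 0.05) / (darker + 0.05)


print(all_within([0.8, 1.4, 1.9], limit_s=2.0))    # True: confirmation within two seconds
print(usability_target_met(7, 10))                  # False: 70% is below the 80% target
print(contrast_ratio((0x77, 0x77, 0x77), (255, 255, 255)) >= 4.5)  # False: about 4.48:1
```

Writing the checks this way also forces small decisions the prose can leave open, such as whether exactly two seconds counts as "within two seconds"; the spec should state the boundary explicitly.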

Creating a short table that links each requirement to its testing method, such as a usability test, QA script, or analytics metric, helps ensure accountability and avoids confusion during development.
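Kept as structured data, such a table can also be checked for gaps automatically. The sketch below is a minimal Python illustration under stated assumptions: the requirement IDs, the owners, and the owner column itself are hypothetical, and the three methods are the examples named in the text.

```python
from __future__ import annotations

from dataclasses import dataclass

# The testing methods named in the text; a team would extend this set as needed.
METHODS = {"usability test", "QA script", "analytics metric"}


@dataclass(frozen=True)
class TraceRow:
    requirement_id: str
    requirement: str
    method: str
    owner: str   # who runs the check (hypothetical column, added for accountability)


def coverage_gaps(spec_ids: list[str], rows: list[TraceRow]) -> list[str]:
    """Requirement IDs with no row, or whose row names an unknown method."""
    covered = {r.requirement_id for r in rows if r.method in METHODS}
    return [rid for rid in spec_ids if rid not in covered]


def render(rows: list[TraceRow]) -> str:
    """Plain-text table that can be pasted into the spec."""
    lines = ["Requirement | Method | Owner"]
    lines += [f"{r.requirement_id}: {r.requirement} | {r.method} | {r.owner}" for r in rows]
    return "\n".join(lines)


rows = [
    TraceRow("REQ-01", "Save shows a confirmation within 2 s", "QA script", "QA"),
    TraceRow("REQ-02", "80% complete checkout without help", "usability test", "UX research"),
    TraceRow("REQ-03", "Page loads in under 3 s on mobile", "analytics metric", "Engineering"),
]
print(render(rows))
print(coverage_gaps(["REQ-01", "REQ-02", "REQ-03", "REQ-04"], rows))  # prints ['REQ-04']
```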

Pro Tip! Don't think only about what you see. The product also has backend logic that needs to be considered and tested.

Stakeholder review and alignment

After internal peer reviews and user validation, the specification needs a final alignment check with stakeholders. This stage is less about polishing the document and more about confirming that its contents match broader business and technical goals.

However, not everything needs to be aligned with everyone. Business stakeholders need to understand how a functionality works at a high level, but the specific APIs being used or the detailed interaction specifications are unlikely to be relevant to them. What matters is that what is being passed to development and UX teams is consistent with what business stakeholders are expecting, and that more technical stakeholders and different teams are aligned with each other.

During this review, teams walk through major sections such as features, timelines, and dependencies, and discuss trade-offs. If a validated feature increases development time, stakeholders may decide to postpone it or release it in a later iteration. The goal is to reach consensus on what moves forward, what waits, and what needs scope adjustment. Recording key decisions directly in the document ensures visibility and accountability for all involved.

This step transforms the specification from a working draft into an agreed roadmap for development. Once approved, it becomes the shared reference point for execution.

Establishing continuous validation practices

Validation is not a one-time step. As projects evolve, specifications should evolve too. Treat the product spec as a living document that reflects what has been learned through new data, user feedback, or technical constraints. Schedule regular checkpoints, such as after sprint reviews or feature releases, to revisit whether the documented requirements still describe how the product works.
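A checkpoint can start from a simple question: which requirements have not been re-validated since the last release? The sketch below assumes, hypothetically, that the spec records a last-validated date for each requirement; the field names and dates are illustrative.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class RequirementStatus:
    requirement_id: str
    last_validated: date   # last time evidence confirmed the spec matches the product


def due_for_review(statuses: list[RequirementStatus], last_release: date) -> list[str]:
    """Requirements whose last validation predates the most recent release."""
    return [s.requirement_id for s in statuses if s.last_validated < last_release]


# Hypothetical statuses checked at a release checkpoint.
statuses = [
    RequirementStatus("REQ-01", date(2024, 3, 2)),
    RequirementStatus("REQ-02", date(2024, 6, 10)),
]
print(due_for_review(statuses, last_release=date(2024, 6, 1)))  # prints ['REQ-01']
```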

To make validation continuous, teams can:

- Link user testing results and analytics data back to specific requirements in the spec.

- Document every change, along with its reason and the evidence behind it.

- Use collaborative tools that store version history, comments, and updates in one place.

Continuous validation helps teams notice early when what is being delivered starts to drift from what was planned. Over time, this practice keeps the specification accurate, reliable, and reflective of real product behavior.

Pro Tip! Schedule lightweight spec reviews after every major product release. Staying updated takes less time than catching up later.