Choosing the right research method is one of the most consequential decisions a UX researcher makes. The wrong choice does not just waste time and budget. It produces findings that answer the wrong question and can push a design in the wrong direction.

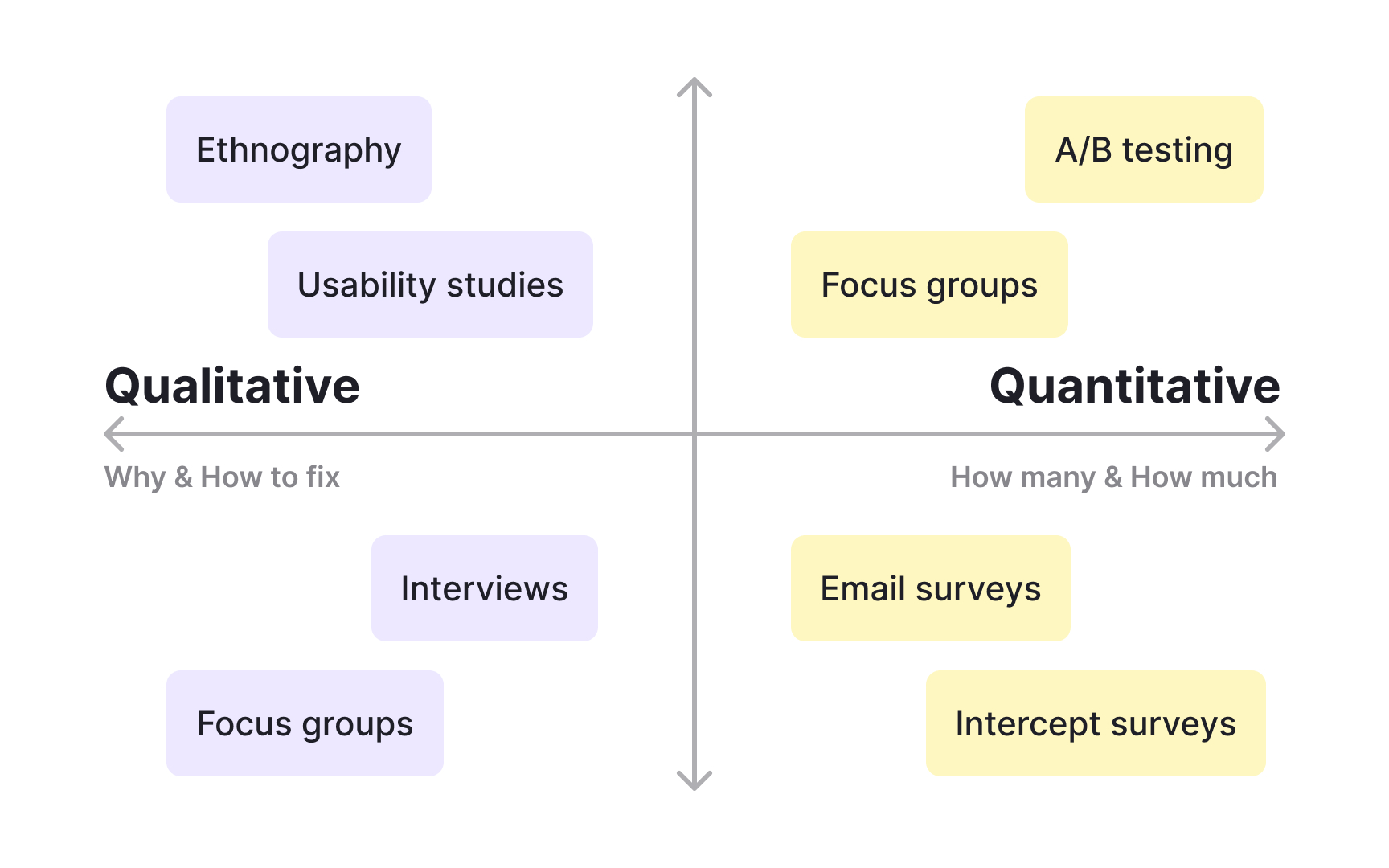

The decision starts with what you already know and what you still need to find out. Attitudinal methods capture what users think and feel. Behavioral methods capture what they actually do. Qualitative techniques answer why-questions and suit early-stage exploration. Quantitative methods answer what, how many, and how often, making them better for validation and measurement. These distinctions narrow the field quickly once a research goal is clearly defined.

Context matters too. The product's development stage, the size of the available participant pool, and team capacity all shape which methods are realistic. A researcher working alone before a launch decision needs different tools than a team running a full discovery sprint. This lesson covers the main dimensions for evaluating research methods so teams can match the approach to the situation.

Define your research goal

Before you choose a research method, you need to know what you're trying to learn. The goal you set at the start largely determines which methods are worth considering, so get it right before moving forward.

Start with two questions: "What do I want to learn?" and "Why do I want to learn it?" Your answers will reveal what you already know about your users and, more importantly, what knowledge you're missing. Some common gaps teams discover at this stage include:

- Who their users actually are

- How users currently interact with the product

- Whether users enjoy using the product at all

Once you've identified the gap, you can think about how to fill it. It also helps to consider which broad question your study needs to answer:

- What do users need?

- What do users want?

- Can they use the product?

Pro Tip! Resist the urge to skip straight to methods. Teams that define their goal first rarely end up running studies that answer questions no one asked.

Match the research methods to design phases

The choice of research methods often depends on which phase of the product design process you're in. Is it a new product, or does it already exist on the market? Some methods, like surveys, can be used at any stage, while others, like usability tests, need something for participants to use, such as a prototype or an existing product.

Where you are in the process shapes what you're trying to find out:

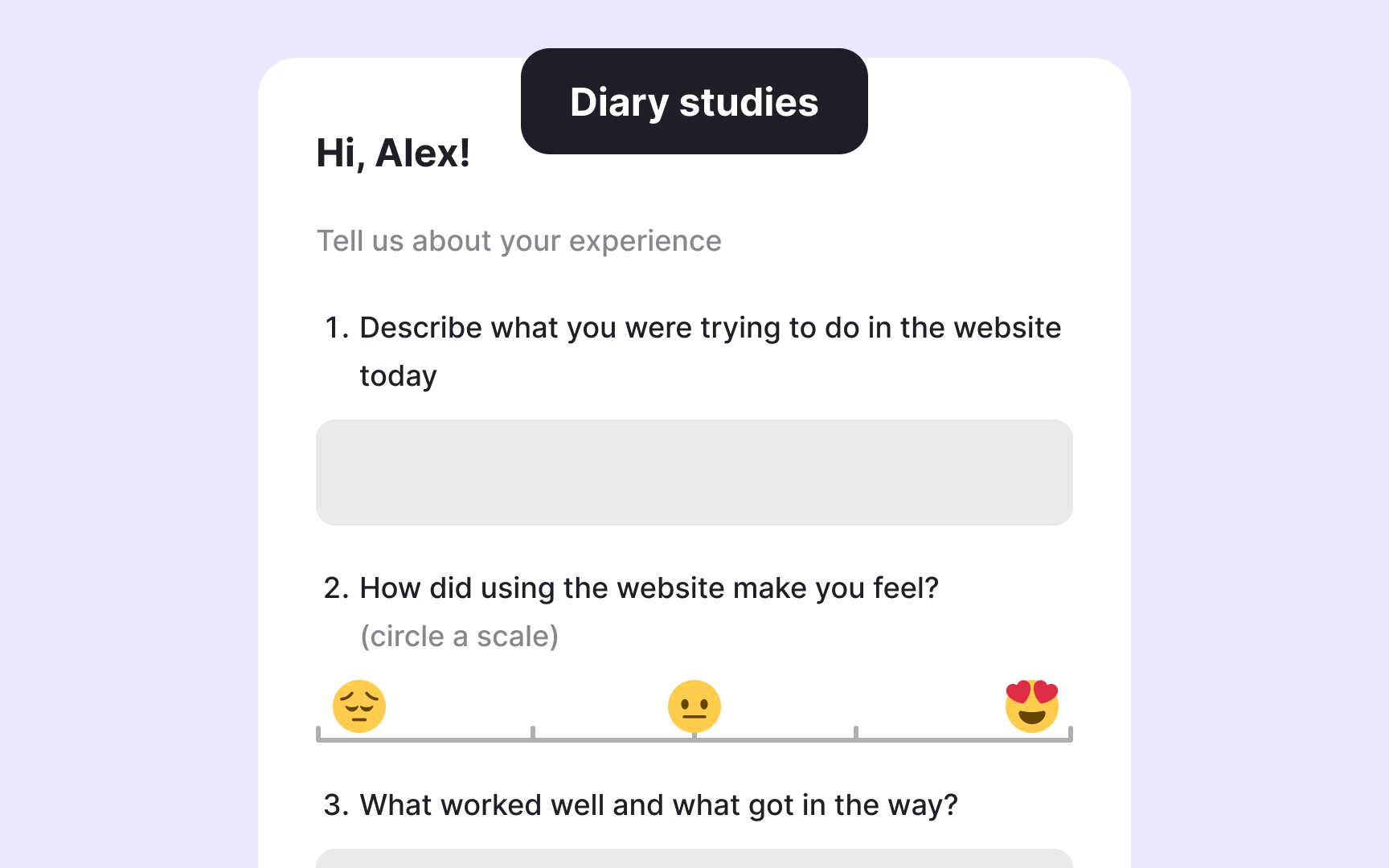

- Early in the process: You're still discovering user needs and motivations, generating ideas, and figuring out which direction to take. Good methods for this stage include field studies, diary studies, interviews, surveys, participatory design, and concept testing.

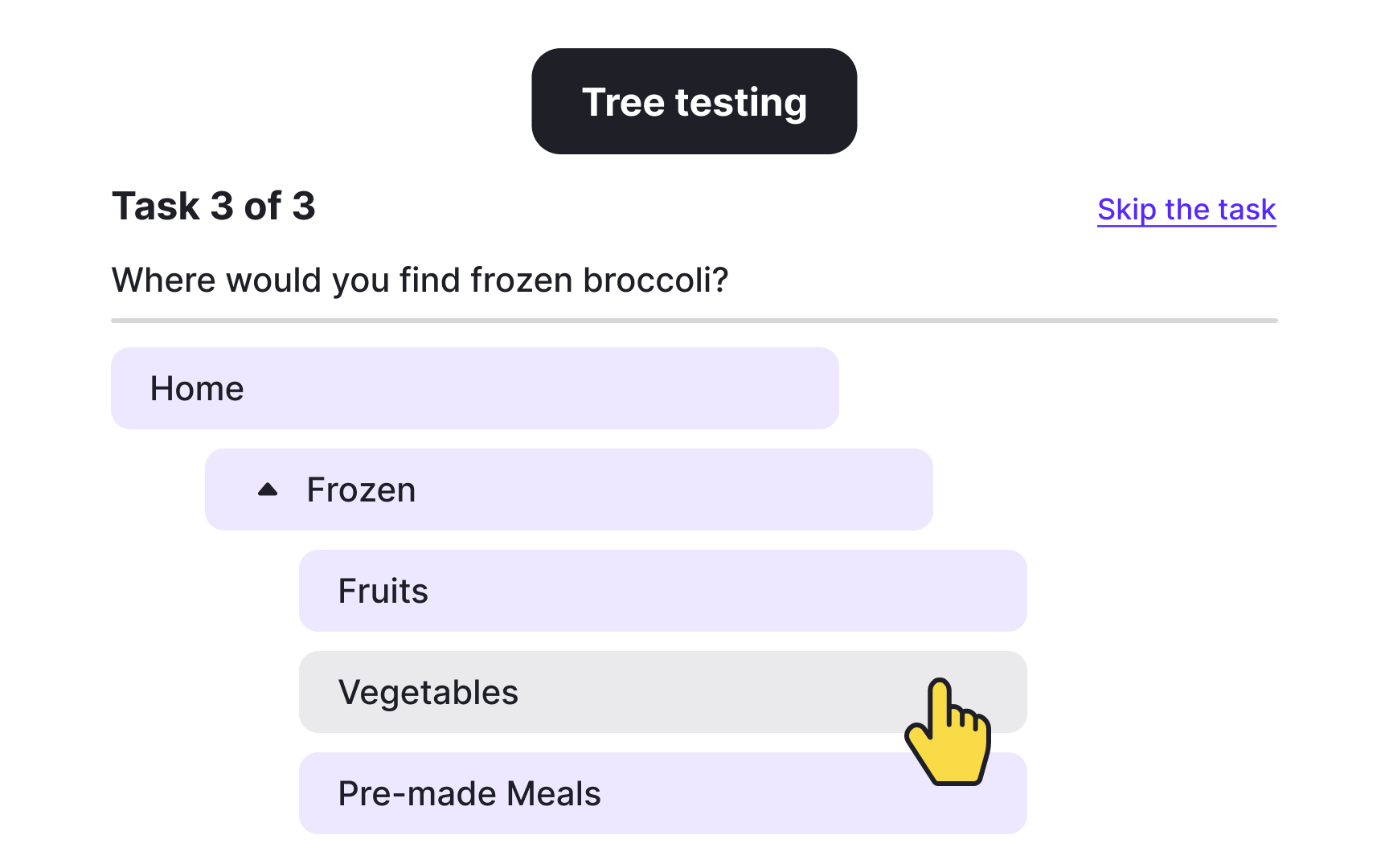

- During the design stage: The focus moves to refining and improving what you're building. Methods like card sorting, tree testing, usability testing, and moderated or unmoderated remote testing work well here.

- After release: You have real users interacting with a live product. This is your opportunity to assess how it performs against earlier versions or competitors using methods like usability benchmarking, unmoderated UX testing, A/B testing, analytics, and surveys.[1]
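
The phase lists above can be kept as a simple lookup so a team can check which methods are candidates at a given point. This is a minimal sketch, not a definitive catalog: the method names are copied from the bullets, while the dictionary keys and function names are hypothetical labels chosen for illustration.

```python
# Method lists copied from the three phase bullets above; the keys are
# hypothetical labels chosen for this sketch.
METHODS_BY_PHASE = {
    "early": [
        "field studies", "diary studies", "interviews",
        "surveys", "participatory design", "concept testing",
    ],
    "design": [
        "card sorting", "tree testing", "usability testing",
        "moderated remote testing", "unmoderated remote testing",
    ],
    "after_release": [
        "usability benchmarking", "unmoderated UX testing",
        "A/B testing", "analytics", "surveys",
    ],
}


def methods_for(phase):
    """Return the candidate methods listed for a design phase."""
    return METHODS_BY_PHASE[phase]


def phases_for(method):
    """Return every phase whose list includes the given method."""
    return [phase for phase, methods in METHODS_BY_PHASE.items() if method in methods]


print(methods_for("design"))
print(phases_for("surveys"))
```

A reverse lookup like `phases_for` is a quick way to see which methods are tied to one phase and which, like surveys, appear in more than one list.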

Qualitative vs. quantitative research methods

Researchers use qualitative methods to understand users in depth. These methods answer open-ended "why" questions: "Why do users behave this way?" or "Why does this part of the experience feel frustrating?" Data is collected by observing or speaking directly with users. In a contextual inquiry, for example, a researcher watches users interact with a product and asks follow-up questions to understand what's driving their actions. The analysis looks for patterns in behavior and feedback rather than numbers.

Qualitative methods are especially valuable when you're trying to understand why a problem exists or how to approach solving it. The tradeoff is that results can be influenced by poor questions, misunderstandings, or researcher bias.

Quantitative research takes a different angle. It collects numerical data and analyzes it using statistics, measuring user behavior and attitudes indirectly through surveys, experiments, or analytics. These methods answer questions like "What do users do?", "How many people use this feature?", or "How often does this error occur?" Quantitative data is strong for spotting trends, testing hypotheses, and comparing options at scale. What it can't do is explain the reasoning behind user behavior, and that's where qualitative methods come back in.

Pro Tip! Neither method is complete on its own. Qualitative research can reveal what to measure, and quantitative research can confirm whether the pattern is widespread. The most reliable research programs use both.

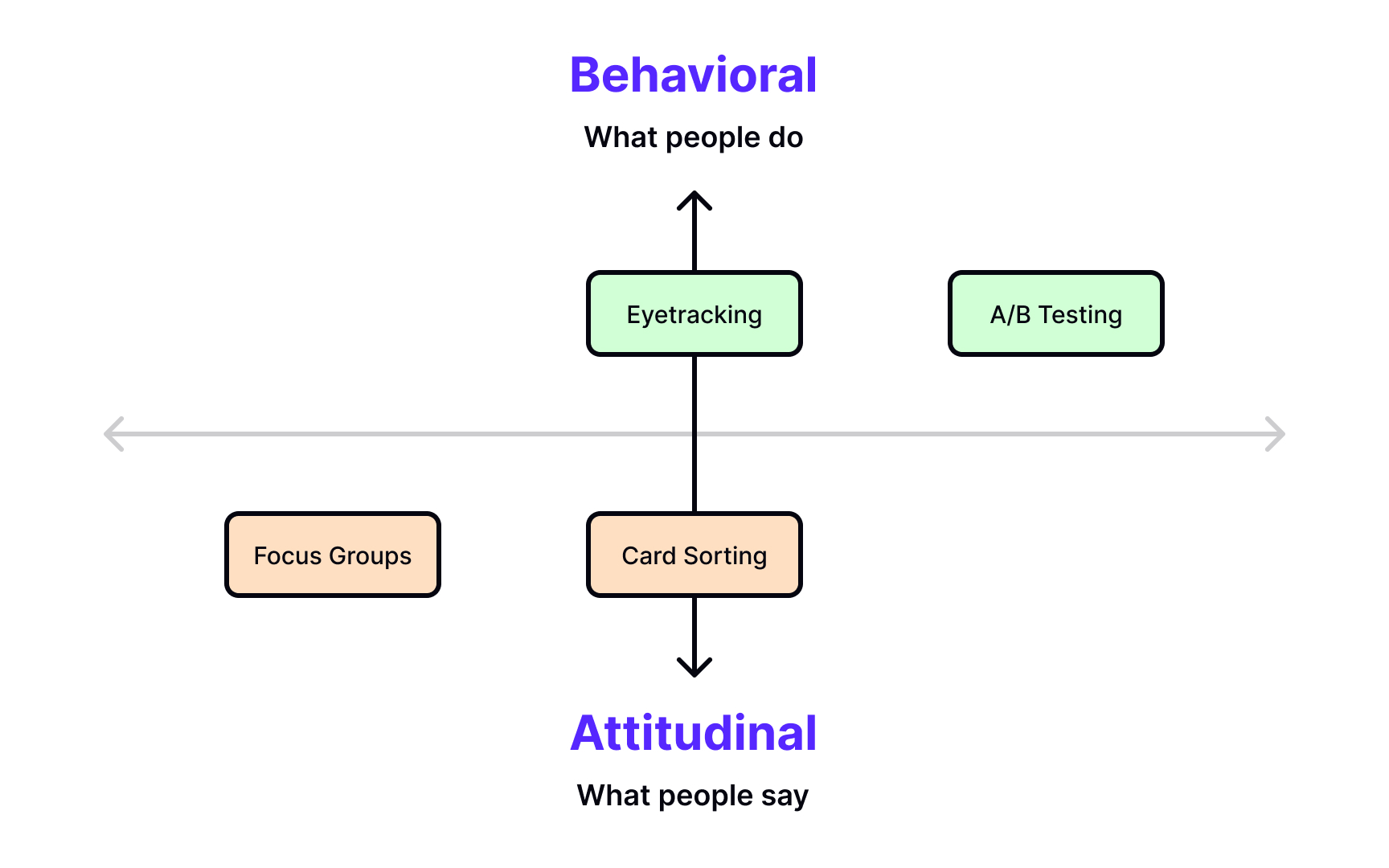

Attitudinal vs. behavioral research methods

Attitudinal research helps you understand users' mental models and opinions: what they believe about a product, how they expect it to work, and what they think about their experience. Card sorting, for example, can reveal how users mentally categorize information, which directly informs your information architecture decisions. Surveys help track attitudes and opinions over time, while focus groups surface top-of-mind reactions to concepts or designs.

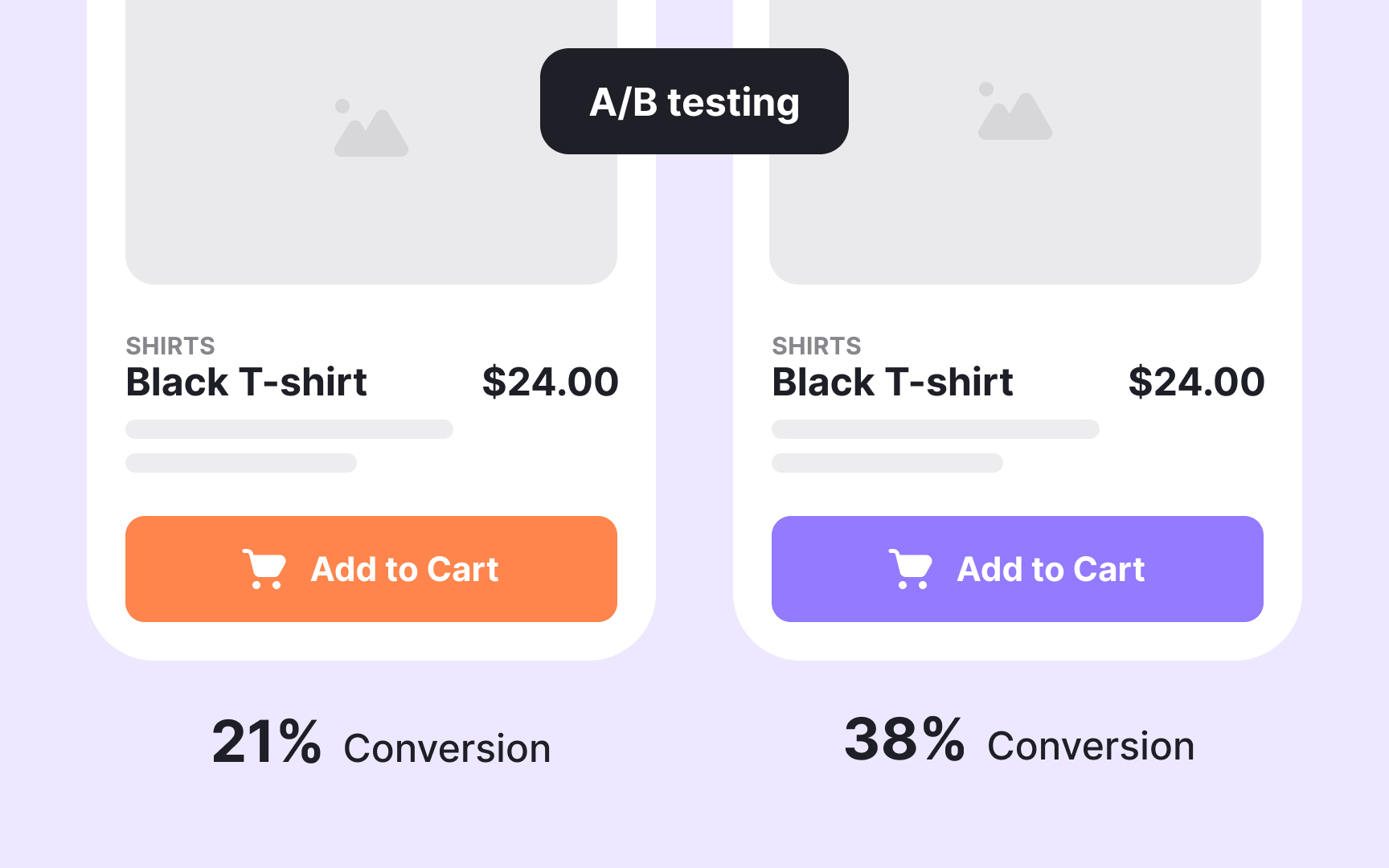

Behavioral research focuses on what users actually do when they interact with a product or service. A/B testing lets you compare how different design variations affect real user actions, while eye tracking shows where users look and how they navigate a design visually. Behavioral data is particularly valuable because it captures actions rather than intentions, and the two don't always match.

In practice, most research methods blend both types to some degree. Two commonly used examples are usability testing and field studies, which combine observed behavior with self-reported feedback. A participant in a usability test might complete a task successfully while also explaining what confused them along the way. That combination of what they did and what they said gives you a fuller picture than either type alone.

Pro Tip! When attitudinal and behavioral data contradict each other, pay attention. Users saying they love a feature while rarely using it is a signal worth investigating, not averaging out.

Choose research methods by usage context

Another lens for choosing research methods is usage context: how much interaction with the product the study actually involves. This shapes how much control you have over what you learn and how naturally users behave during the session.

There are four main contexts to consider:

- Natural use: Users interact with the product on their own terms, with minimal interference from the researcher. You get authentic behavior, but less control over which topics surface. Ethnographic field studies, intercept surveys, and analytics techniques fall into this category.

- Scripted use: The researcher guides participants through specific areas of the product. A benchmarking study, for example, is usually tightly scripted to ensure every participant completes the same tasks under comparable conditions.

- Limited use: Participants engage with one specific function or aspect of the experience rather than the product as a whole. Participatory design, concept testing, and desirability studies are common examples.

- Not using the product: Some studies remove the product entirely and focus on broader perceptions, associations, or mental models. Card sorting, for example, reveals how users naturally categorize information without any product interaction influencing their thinking. Studies exploring aesthetic or emotional responses to design styles also fall here, as can concept testing when participants react to a described idea rather than a limited version of the product.

Find the right method for user needs research

One of the three broad questions UX research can answer is "What do people need?" Unlike wants or usability issues, needs are often things users can't easily articulate. They emerge through observation, conversation, and time. The methods below are particularly well suited to uncovering them.

- Contextual inquiry and ethnography: Observing users in their natural environment lets you see how they accomplish tasks, what workarounds they've developed, and where they struggle. Because you're watching real behavior rather than asking users to recall it, these methods often surface needs users wouldn't think to mention.

- Interviews: Direct conversation with users gives you space to explore not just what they do, but why. Unlike surveys, interviews let you follow unexpected threads and ask follow-up questions when something interesting comes up.

- Surveys and questionnaires: Surveys can reach a much larger group of users than interviews, making them useful for identifying patterns across a broad audience. The tradeoff is less depth. You can spot a trend, but you'll often need another method to understand what's behind it.

- Diary studies: Participants log their experiences over days or weeks, capturing how their needs and behaviors evolve over time. This is especially valuable when the experience you're studying doesn't happen in a single sitting or changes with repeated use.

Pro Tip! If users struggle to explain what they need when asked directly, that's a signal to observe rather than interview. People are better at showing needs than describing them.

Find the right method for user wants research

Another broad question UX research can answer is "What do people want?" This is distinct from what users need. Wants are often tied to preferences, expectations, and desirability rather than core functionality. The methods below are well suited to surfacing them.

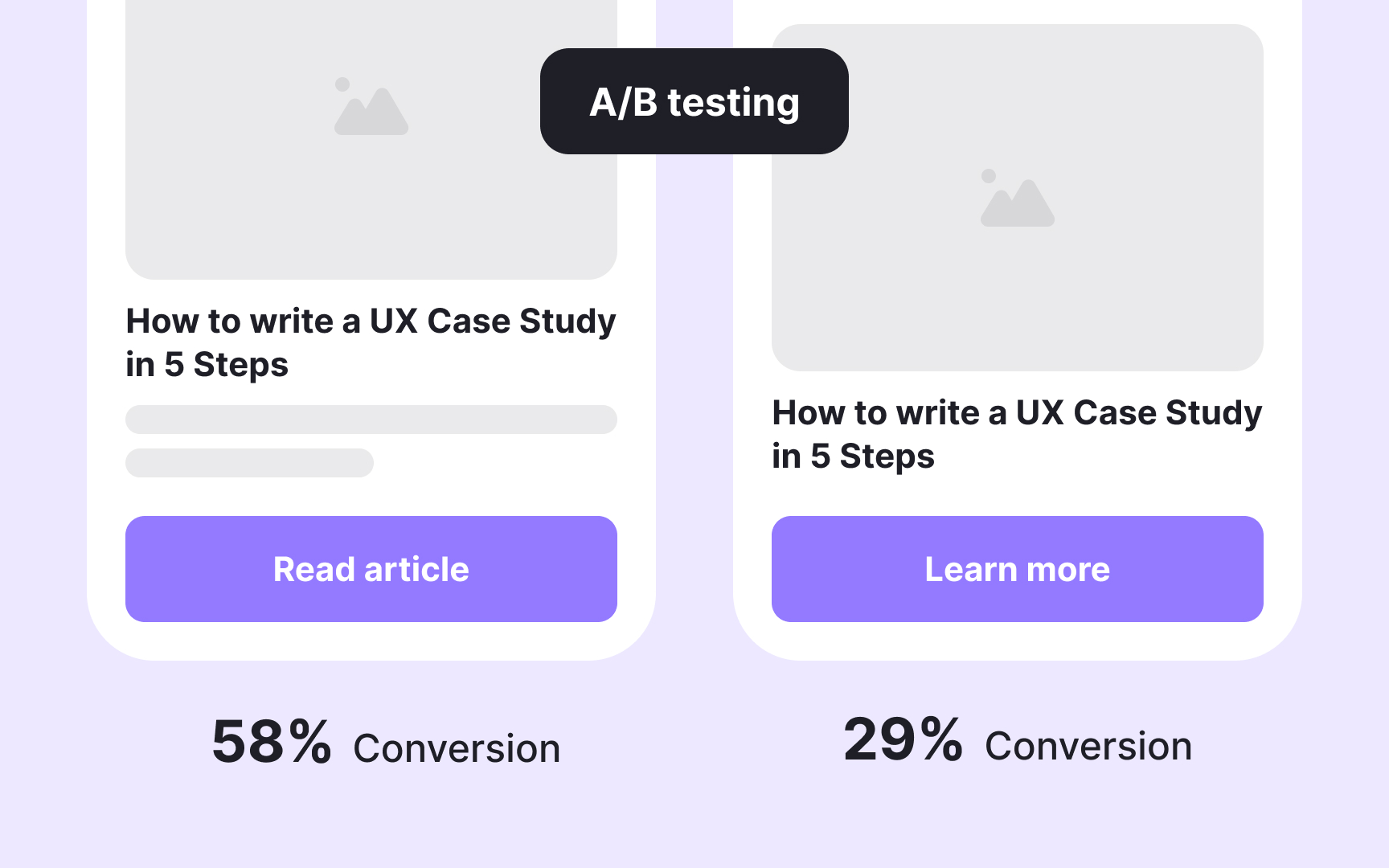

- A/B testing: By showing different versions of a design to different user groups, A/B testing reveals which option users respond to more favorably. You can test variations in calls to action, color, imagery, or layout to see what drives engagement. The results reflect actual user behavior rather than stated preferences, which makes them more trustworthy than self-reports, provided each group is large enough for the difference to be more than chance.

- Focus groups: When facilitated well, focus groups do more than collect opinions. They create a space where participants build on each other's ideas, surfacing language, associations, and reactions that might not emerge in a one-on-one interview. Pay attention to the words users reach for naturally. They often reveal how people think about your product better than any survey question could.

- Rapid prototyping: This group of techniques helps you quickly build and validate design concepts before committing to a direction. Used early in the process, rapid prototyping lets you test whether a design direction resonates with users before investing significant time and resources. It turns abstract ideas into something tangible users can actually respond to.[2]
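
Returning to the A/B testing bullet above: whether one version really outperforms another is usually checked with a significance test. The sketch below uses a two-proportion z-test, which is one common choice rather than the only valid one. The function name and the conversion counts are hypothetical, and the normal approximation it relies on assumes reasonably large groups.

```python
from math import erf, sqrt


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both versions convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        # Nobody (or everybody) converted in both groups: no detectable difference.
        return 0.0, 1.0
    z = (p_b - p_a) / se
    # Standard normal CDF expressed through the error function.
    cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    return z, 2 * (1 - cdf)


# Hypothetical counts: version A converts 120 of 2,400 visitors, version B 156 of 2,400.
z, p = two_proportion_z_test(conv_a=120, n_a=2400, conv_b=156, n_b=2400)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value suggests the gap between versions is unlikely to be chance alone. It says nothing about why users preferred one version, which is where a qualitative follow-up helps.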

Find the right method for usability research

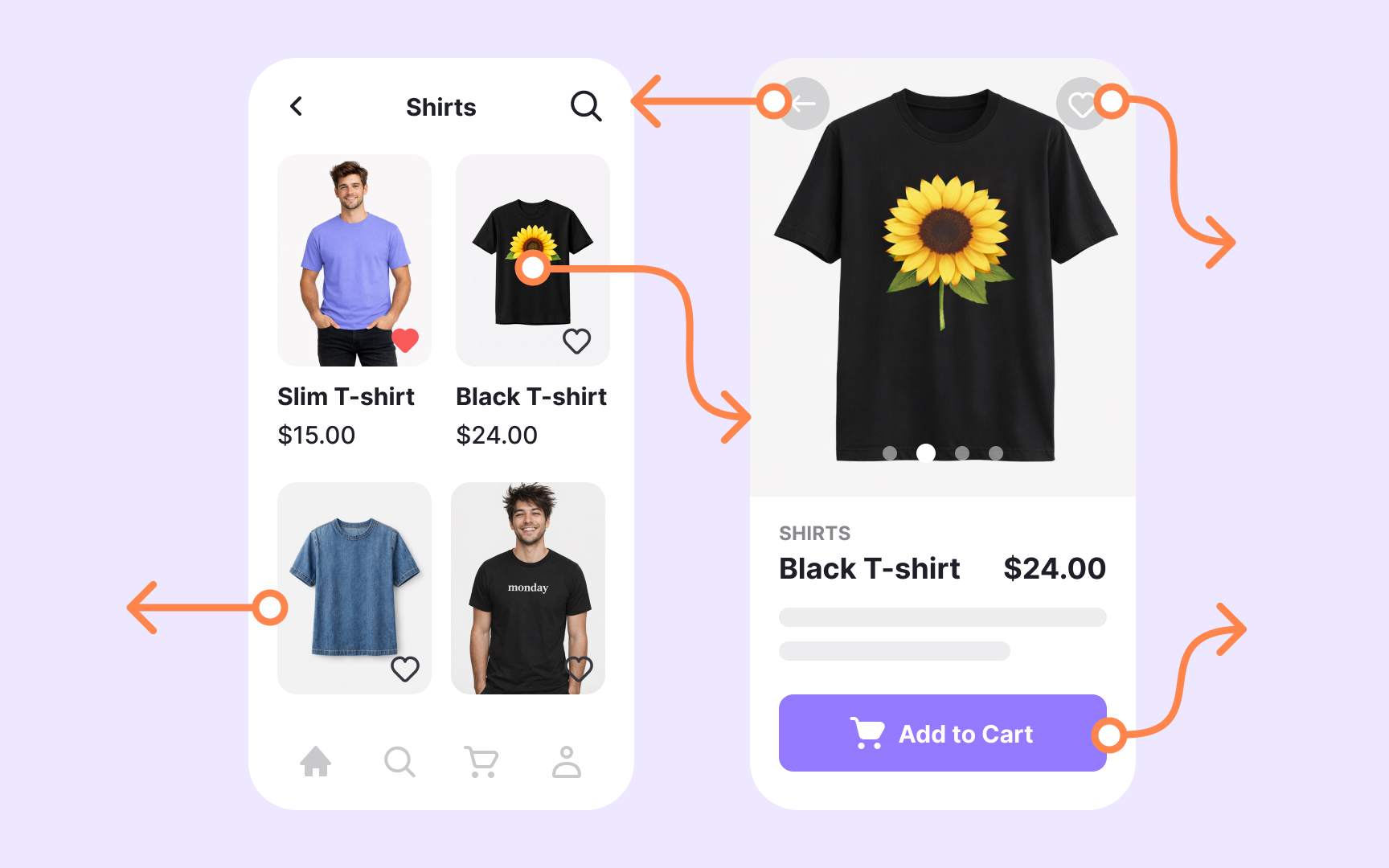

Once you have a functional prototype, the research question changes. You're no longer asking what users need or want. You're asking whether they can actually use what you've built. This stage is about identifying friction, confusion, and failure points before they make it into the final product.

- Usability testing: One of the most direct methods for identifying usability issues, usability testing involves observing real users as they attempt to complete tasks with your prototype. It surfaces problems that are easy to miss when you're close to the design, from confusing navigation to unclear labels to unexpected user mental models.

- Card sorting: Best used before or alongside prototype development, card sorting helps establish information architecture by asking users to group and label content in ways that make sense to them. The results inform website structure, menu organization, and content grouping in a way that reflects how users actually think rather than how the team assumes they do.

- Tree testing: Once your information architecture is in place, tree testing helps you validate it. If users aren't reaching an important page, a tree test can tell you whether the problem lies with the structure itself or with how individual items are labeled.

Pro Tip! Run card sorting before you commit to a structure, then use tree testing to validate it. The two methods work best in sequence, not in isolation.
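
Card sorting results are often summarized by counting how frequently participants placed two cards in the same group. The sketch below shows that co-occurrence count under simple assumptions: the data layout, the function names, and the sample cards are all hypothetical, and dedicated card sorting tools offer richer analyses.

```python
from collections import Counter
from itertools import combinations


def co_occurrence(sorts):
    """Count, for every pair of cards, how many participants grouped them together.

    `sorts` holds one entry per participant; each entry is a list of groups,
    and each group is a list of card labels.
    """
    counts = Counter()
    for participant_groups in sorts:
        for group in participant_groups:
            # Sorting makes ("A", "B") and ("B", "A") share one key.
            for pair in combinations(sorted(set(group)), 2):
                counts[pair] += 1
    return counts


def similarity(sorts):
    """Convert pair counts into the share of participants who paired the cards."""
    total = len(sorts)
    return {pair: n / total for pair, n in co_occurrence(sorts).items()}


# Hypothetical results from three participants sorting five cards.
sorts = [
    [["Pricing", "Plans"], ["Login", "Profile", "Billing"]],
    [["Pricing", "Plans", "Billing"], ["Login", "Profile"]],
    [["Pricing", "Plans"], ["Login", "Profile"], ["Billing"]],
]

for pair, share in sorted(similarity(sorts).items(), key=lambda kv: -kv[1]):
    print(pair, f"{share:.0%}")
```

Pairs that almost everyone groups together are strong candidates to sit under the same menu item. Cards with no consistent partner, like "Billing" in this made-up data, are the ones worth checking in a follow-up tree test.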

References

- How to Choose the Right User Research Method | Boldist

- Design Better And Faster With Rapid Prototyping | Smashing Magazine